Even if you don’t know much about SEO, the fact that we’re already at episode #144 of this podcast should be a pretty good indication that there’s a lot to cover. If you’re already familiar with the subject, you know that technical SEO can get pretty hairy.

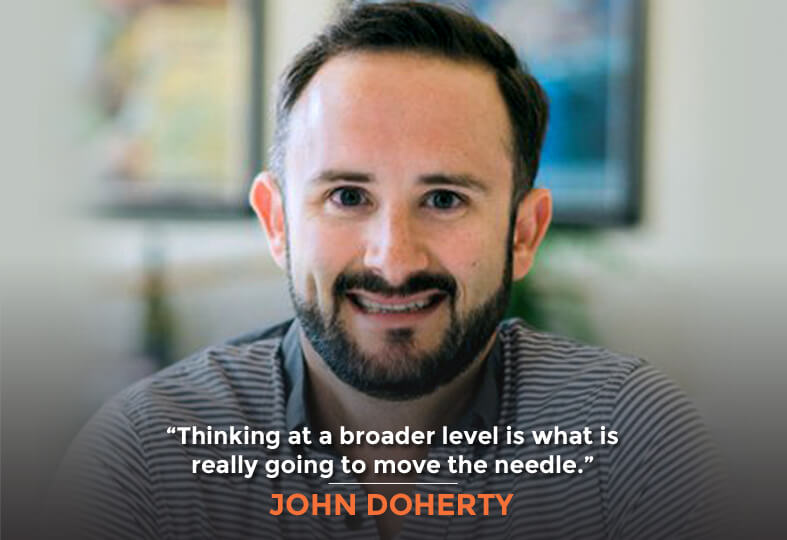

Here to explore this fascinating complexity with me is John Doherty, the founder of Credo, which makes it easy to find a great SEO or marketer. All of this complexity multiplies when you’re dealing with a huge website of a million pages or more. While we usually deal with smaller websites, this episode will dive deep into technical SEO for large websites.

In this Episode

- [01:10] – John starts things off by talking about enterprise SEO, and what’s different between regular SEO and enterprise SEO.

- [04:27] – We hear about whether the majority of the pages that John has been talking about are unique and useful, or just repeat copies of similar pages.

- [06:03] – How would John tackle an issue of having multiple low-value pages or duplicate content pages in Google’s index?

- [09:52] – John talks about and clarifies the increased growth he mentioned a moment earlier.

- [10:46] – Stephan takes a moment to arrange the strategies and methods that John has been talking about into a hierarchy.

- [13:27] – We hear some of John’s additions to what Stephan has been saying.

- [15:50] – The challenge that you’re dealing with on large websites is crawl optimization, John explains. He and Stephan then talk about crawl budget and index budget.

- [20:45] – Google used to say that 301 redirects were the only ones that pass pagerank, but Gary Illyes said otherwise, Stephan points out.

- [25:12] – John responds to what Stephan has been saying about links and redirects, and the involved side effects.

- [28:44] – We hear about crawl budget versus index budget, with Stephan explaining what they are.

- [33:39] – We move on from canonicals and 301s to a fun yet important topic: link building. How do you do link building for a large-scale website with millions of pages?

- [37:57] – John talks about the challenge with many metrics.

- [40:55] – Stephan talks about a piece of content explaining the benefits of Link Explorer.

- [42:40] – How many tools does John typically use? His answer includes a list of some of his preferred tools.

- [47:51] – What would John tell a client who wants to rank for a specific keyword for a specific page?

- [49:57] – We hear about what John does to make something a link-worthy page.

- [52:35] – Stephan shares something that he didn’t love about an SEO choice that REI made against his advice.

- [54:30] – John shares his suggestions on what a listener should do if they’re looking for the right agency or consultant to hire at the right price.

- [57:26] – Is there a particular price range that a typical agency will fall within?

- [59:35] – John lists some ways that listeners can get in touch with him.

Transcript

Technical SEO can get pretty hairy. Now, imagine the complexity gets multiplied significantly when you’re dealing with a large scale website. I’m talking about something that is a million plus pages. This episode, we’re going to dive into technical SEO specifically for large websites. Our guest in this episode, number 144 is John Doherty. He’s the founder of getcredo.com, he’s helped over 1500 businesses find a quality digital marketing provider to work with, and he’s personally consulted on SEO on some of the largest websites online. John, it’s great to have you on the show.

Stephan, my pleasure to be here.

Alright. Let’s talk about enterprise SEO because I know that’s a topic that’s near and dear to your heart and mine. What’s different, first of all, between regular SEO and enterprise SEO?

I think the thing that’s different about enterprise versus regular SEO is really the scale at which you have to think and at which you have to operate. In regular SEO, when you’re working on a smaller website, say it’s a 10-page business website, you’re concerned about very specific keywords. There are these four keywords that are really going to drive traffic to your business, drive you leads, and you’re going to be able to grow your business from there through organic channels. On the enterprise side, I very rarely think about this specific keyword and how we get this specific keyword ranking better. I think about buckets. For example, when I worked in apartment rentals, it wasn’t just how we’re ranking for San Francisco apartments for rent but it was how are we ranking on average across our top 100 apartment for rent keywords, across our top markets. I think that’s the biggest shift that I’ve always had to take into account, where you’re just thinking at a broader level and those are the things that are really going to move the needle for you. On that small website, you also don’t really have to think about internal linking, because you can link to all 10 pages from your home page. But when you have literally millions of pages in the index, literally millions of pages that could drive you traffic, you really have to think about how are we linking to those, how are we structuring our website, what’s our information architecture there, and then finally the amount of work that has to happen in the background to keep your XML sitemaps up-to-date. Once again, it just happens at a much larger scale and there’s a lot more that can go wrong, especially when you have 100 developers, for example, working on a website. There are some different things that we have to take into account there, such as monitoring and that sort of thing.

When you’re working on these different enterprise SEO projects, were you client-side or were you agency-side?

It’s been both, and also as a freelance strategy consultant. I worked for Distilled in New York City, where I worked on some very large websites, some content websites that had been hit by Panda, for example, back in 2011, 2012, and some other large websites there, some job boards and such. Then I worked in-house for Zillow Group. zillow.com is their main brand, but then also Trulia, Trulia Rentals, and hotpads.com, a rentals website they owned. I worked in-house leading marketing and growth including SEO for those for about two years, and then I’ve been on my own since about September, October 2015. In that time, I’ve worked with a lot of very large marketplaces and content websites as well. I’ve probably worked with, I don’t know, 15-20 different websites with at least a million pages in Google’s index, some of them in the mid-eight figures, so 20, 30 million pages.

That’s a pretty big catalogue of pages. Are these pages unique, valuable pages or is a lot of it kind of slicing and dicing the same articles or product pages and so forth? You end up with lots and lots of tag pages potentially if you have a lot of tags on a WordPress site, or if you have faceted navigation on an ecommerce site you might end up with more facet pages (price, brand, color, size breakdowns and so forth) than actual SKUs in your product catalogue. What do you think is the majority of these pages in your typical engagement?

That’s a great question. Normally, these are actually useful pages, so it’s a lot of SKUs. In marketplaces, it’s people listing their products on this platform in order to sell them to the consumer audience that the website is acquiring. I’ve definitely come across those issues with faceting versus filtering, so do you have a dedicated page because this search term has search volume, or is this just causing a bunch of duplicate content? I’ve also come across a lot of startup marketplaces these days that have a lot of, as you mentioned, tag pages as well. These are basically free-form text fields where people, when they’re listing their products on the marketplace, can basically add any sort of tag that they want. They could add in shoe, shoes, yellow shoes, red shoes, and their product is tagged with all of these, and then they create tag pages for all of these as well. I’ve done a lot of cleanup in that area. Some of them had to the tune of seven figures of these tag pages, over a million of them, and it’s really about narrowing them down to what are the pages that are going to drive traffic, what are the duplicates, and then we tackle it in a couple of different ways as well. These are pretty specific issues to these very large websites but it can make a world of difference.

How would you tackle an issue of having, let’s say a million tag pages or just generally more low-value pages or duplicate content pages in Google’s index? Are you going to deindex these pages? Somehow, you are going to 404 them? 301 them? Use canonical tags? What are you going to do?

Totally. It’s a whole combination of that. The ideal fix in my mind is first you go into Google Analytics or wherever it is that you’re storing your data. A lot of startups these days aren’t willing to pay for Google Analytics Premium, so they end up with a lot of sampled data. They’re pulling the data out of Google Analytics specifically and putting it into some data warehouse like Redshift, and then you basically go and you query it directly using something like Hive or a bunch of other tools, basically a place where you can write direct SQL to pull the information out of there. A lot of my clients, Stephan, have data scientist teams, have data teams, and analytics teams, so basically what I tell them is, “Okay, I need to understand which of these pages are actually getting traffic.” And then we figure out, “Okay, with those pages that are getting traffic, what keywords are they ranking for?” Obviously, they’re ranking for keywords that have volume, and then basically we go, “How do we take these into account, a: get them better linked to, b: make them higher quality, and c: hopefully rank them better? Because, to take the previous example, if you have one page for shoe and one for shoes, how can we bring those pages together?” Then, also looking at the website, they often don’t have any sense of an information architecture. They’ll have their home page, and they’ll have, let’s say, 5 categories and maybe 8–10 subcategories. That’s not going to get all your products indexed well. It’s also not going to get them ranking well, even if they are indexed, and you’re also literally leaving money on the table because, obviously, in the longer tail there’s less volume per specific keyword, but in aggregate there’s a ton of search volume there and that traffic tends to convert a lot better. 
What I’ll do is actually take that information from what tag pages do we have, what keywords is the section of the site ranking for, and then how do we build out a full information architecture underneath categories, subcategories, sub-subcategories. Often I’ll take their number of categories and subcategories from 6, 8, 10, 12 up to at least in the hundreds, if not in the thousands, simply to have all those within 3-4 clicks of the home page. What we’ll do is then take those specific pages, for example the shoe and shoes pages, go through, and redirect those to the new category page. This is often what we do. Sometimes we have to start a bit cleaner, so I start working with the data science team, and basically what we do is called stemming. We look at what’s the base word that all these terms are based off, so shoe, shoes, yellow shoes, that sort of thing. Shoe is the base term, and then we basically decide, yellow shoes is individual, red shoes is individual. If there are those modifiers on there, then we don’t canonical it back to the base page. I did that on a client a couple of years ago, where basically we cleaned up their whole tag section and did a lot of canonicals back to their base tag pages because they weren’t ready to basically invest in building out their full information architecture. We saw about a 25% uplift to that section of the site because we’re basically telling the search engines that, “All of these are basically duplicates and this is the page that we want ranking,” so we saw a lot better traffic across the board. I will say, though, that when clients and companies have been willing to go and build out that information architecture, or just test it with one specific category, we’ve seen insane growth. 
That same client, when they weren’t ready to invest in building out the full information architecture, what we did was we tested on one of their main categories and we basically found all the main keywords that were ranking within the tag section, built out a more robust category, subcategory, sub-subcategory information architecture, and I believe in the last two years now we’ve seen about a 350% growth across this bucket of keywords for them and just driven exponentially more revenue as well.
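
As a rough illustration of the stemming step John describes, here is a minimal Python sketch that groups tag variants such as "shoe" and "shoes" under one base tag while leaving modified tags such as "yellow shoes" in their own group. The function names, the toy plural-stripping rule, and the choice of the most-visited variant as the canonical target are all assumptions for illustration, not the method used on the client's site; a real data team would likely use a proper stemmer.

```python
from collections import defaultdict


def naive_stem(word: str) -> str:
    """Toy plural stripper (hypothetical); real projects would use a real stemmer."""
    w = word.lower()
    for suffix in ("sses", "xes", "ches", "shes"):
        if w.endswith(suffix):
            return w[:-2]  # dresses -> dress, boxes -> box
    if w.endswith("s") and not w.endswith("ss") and len(w) > 3:
        return w[:-1]  # shoes -> shoe
    return w


def tag_key(tag: str) -> str:
    """Stem every word, so modifiers such as 'yellow' keep a tag in its own group."""
    return " ".join(naive_stem(w) for w in tag.split())


def canonical_targets(tag_visits: dict[str, int]) -> dict[str, str]:
    """Map each tag to the most-visited variant in its stem group (an assumption)."""
    groups = defaultdict(list)
    for tag in tag_visits:
        groups[tag_key(tag)].append(tag)
    mapping = {}
    for variants in groups.values():
        # Ties on visits are broken alphabetically so the result is deterministic.
        base = max(variants, key=lambda t: (tag_visits[t], t))
        for tag in variants:
            mapping[tag] = base
    return mapping


visits = {"shoe": 40, "shoes": 120, "yellow shoe": 5, "yellow shoes": 30, "red shoes": 12}
targets = canonical_targets(visits)
```

In this sketch, "shoe" would carry a canonical pointing at "shoes", while "yellow shoes" and "red shoes" stay as their own base pages.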

When you say 350% more growth, is that in organic search traffic? Is that in organic delivered sales, or in keyword placement or what?

That’s organic search traffic. I don’t know the number of keywords that are ranking in that section off the top of my head. I do like to talk about conversion and how much revenue this increased traffic has mapped to. That does become challenging on the enterprise level because it’s not just an SEO team and one web team working on the website, but you have new products going out, you have different optimizations taking place, you might have a whole backend engineering team that’s speeding up the site, which maps to better rankings, and better conversions, and all that. They’re tweaking their checkout flows. I don’t like to take credit for that, but I can say, “Hey, we drove X percent more traffic here, across this bucket of keywords, and looking at Google Analytics that means that you’ve earned X amount more revenue,” with the caveat that all these other things are happening as well.

If we were to arrange these in a hierarchy, what would be the most ideal and the least ideal? The most ideal would be the 301 to a fully blown-out information architecture, categories, subcategories, sub-subcategories, and to completely eliminate the tag pages, replacing them with 301 redirects. Less ideal but still valuable would be if you don’t have a full information architecture blowout, you haven’t invested in the taxonomy development and everything, and you just need to eliminate all these low-value tag pages because Google, frankly, hates tag pages. Then you’d have canonical tags on all of those tag pages that point to the closest category pages. There are not necessarily very many of these, but it would probably eliminate a lot of the tag pages from the index. Although canonical tags, canonical link elements, are only hints as far as Google is concerned. I’ve seen so many times Google just ignore canonical tags even if they are correctly formatted and they make sense. The opposite of ignoring them, basically, is taking what would obviously be superfluous tracking parameters and adding those URLs to the index, like UTM source, UTM medium. It should be pretty clear to Google that those are going to create duplicate content. They are, after all, Google Analytics’ tracking tags. It’s their own product. They should know better, and yet there are so many of those pages across so many websites ending up in Google. That would be the not quite as ideal scenario, and correct me if I’m wrong in any of this or if I’ve misquoted you. The least valuable approach would be just to leave the tag pages alone or to just remove them all and leave 404 errors. Either of those approaches would be very suboptimal. Either you’re ending up with a lot of error pages that potentially are blackholing some inbound link equity or you are leaving a bunch of essentially spammy pages in Google’s index that shouldn’t be there.
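
To make the tracking-parameter point concrete, the following Python sketch shows why URLs that differ only in `utm_` parameters are duplicates of one page: once those parameters are stripped, they collapse to the same address. The function name and the `example.com` URLs are hypothetical, and this only illustrates the idea; it does not describe how Google itself handles such URLs.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def strip_tracking(url: str) -> str:
    """Drop utm_* query parameters and keep everything else in its original order."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith("utm_")
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


# Both variants reduce to the same URL, which is the one a canonical tag would name.
a = strip_tracking("https://example.com/shoes?utm_source=news&color=red&utm_medium=email")
b = strip_tracking("https://example.com/shoes?color=red&utm_source=twitter")
```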

Totally. I’ll add a couple more things there, Stephan. One is that when you’re cleaning up these pages, the ideal fix is to never allow these freeform tag pages to form in the first place. If you’re setting up a new website or you’re moving over to a new domain or something like that, don’t even allow that. The second one is taking into account–it varies by website–how much traffic these tag pages (sometimes they’re search pages) are getting, and also how many sales that maps to. I’ve definitely seen sites where these tag pages are making up 20%, 25% of organic traffic. That’s mostly because they have a ton of them and a lot of them are getting one or two visits, but when you have millions of them and they’re getting one or two visits, they’re going to get a lot of traffic. You can’t just go and noindex all of them and block them in robots.txt and not expect your business to take a hit. You definitely have to be strategic about what pages you are going to build out under this category, subcategory, sub-subcategory sort of information architecture and then how you go about cleaning up these pages. There’s definitely a process that sometimes you have to go through. For example, doing the canonical fix I talked about: stemming with the help of the data science team, canonicalizing all the variations that are plurals, misspellings, and that sort of stuff to the base pages, and then noindexing all of those permutations, eventually building out your information architecture, and then 301 redirecting the base tag page that’s still getting traffic, and hopefully you’ve seen an increase in traffic doing just that. Then you redirect that page to a category page or a subcategory page that’s much easier to find, that’s much better optimized towards the terms that you’re trying to rank for. 
If you do this en masse, you can see a huge growth in your average ranking, your ranking across the board, across these buckets of keywords that can drive you traffic. You see an increase in ranking, you see an increase in traffic, and you see a big bump in revenue as well, which ultimately is what we’re going for.

Yeah, so I misquoted you a few minutes ago when I was talking about canonicalizing directly to category pages. You actually canonicalize to these base tag pages where you’ve done stemming and you found similar related tags. Essentially, you’re grouping related tags together and canonicalizing a group to one of them.

Exactly. Really the challenge here, Stephan, what you deal with on big websites, is crawl optimization. The search engines are maybe spending a ton of time on your tag pages when they’re just low quality and, as we talked about, they’re duplicates, they’re permutations of other things, and they’re really not adding any value. The search engines are spending, let’s say, 50% of their time on those but they’re not crawling your main category pages, your subcategory pages, your product pages, your SKUs as we often talk about them in ecommerce. If they’re not crawling those, then those aren’t ranking as well as they could. Canonicals might drop some URLs out of the index, but if these URLs are still linked to internally, they’re still going to be crawled. Canonicalization doesn’t stop the search engines from crawling them. Same with the noindex. It doesn’t stop search engines from crawling them. The only way to stop them from being crawled is robots.txt, blocking them there if you can. Once again, if that’s 25% of your organic traffic and it’s driving you hundreds of thousands of dollars in revenue every month, you can’t just block that. It definitely depends on the site and the scale: how many tag pages there are, how much traffic they’re driving, how much revenue they’re driving, and that informs the strategy that you take in order to really clean it up. It can be a process. I’ve had it take as long as a year to really get all this cleaned up, but over time, you kind of see a bump in traffic and revenue, bump in traffic and revenue, bump in traffic and revenue, and then eventually once you fix it all then you’re hopefully making money hand over fist.

Yup, hopefully. Let’s distinguish here between the crawl budget and the index budget, basically how this is going to cost you crawl budget, when Googlebot is going in, grabbing all these pages, finding noindex meta tags, saying, “Okay, well we won’t include that page in the index,” finding 301 redirects, “Oh, okay, so the link equity goes over here to this other URL. Okay, no problem. We’ll index that one instead.” That’s all using crawl budget, and then as you said, to distinguish that from when you do a robots.txt disallow, you’re stopping Googlebot from crawling. But on the flip side, this then creates a problem in that you may actually have wanted to drop pages out of Google’s index, and the disallow directive only stops Googlebot from spidering those pages. Those pages stick around in Google. They keep showing up in the search results.

Yeah, exactly, and that’s a great point, Stephan, and one that I forgot to mention, in that if you want to take these pages out of the index, robots.txt is not the way to do it. Basically, what you have to do is noindex them first using the robots meta tag, either noindex, follow or noindex, nofollow. Noindex, follow obviously keeps passing link equity through those links, even if the page has noindex. I normally tell my clients to use noindex, follow and then we basically monitor indexation of that section of the site. You have a separate Search Console profile set up for that specific section of the site, monitor how many pages are indexed, and when that’s gotten down to a reasonable level (it doesn’t have to be zero), if we go from a million indexed to 20,000 indexed, something like that, then it’s like, “Okay, that’s good enough. Let’s take care of this now.” Then we’ll block in robots.txt.
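
The ordering John describes (noindex first, watch the indexed count fall, and only then add the robots.txt block) can be sketched as a small decision helper in Python. The 2% threshold simply mirrors his example of going from a million indexed pages to 20,000; the function name, the threshold parameter, and the `/tag/` path are assumptions for illustration, and the indexed counts would come from whatever Search Console reporting you already monitor.

```python
# The tag to serve on pages you want dropped while links on them are still followed.
NOINDEX_FOLLOW = '<meta name="robots" content="noindex, follow">'

# Added only in the second phase; the /tag/ path is a hypothetical section name.
ROBOTS_BLOCK = "User-agent: *\nDisallow: /tag/\n"


def cleanup_phase(indexed_now: int, indexed_at_start: int, good_enough_percent: int = 2) -> str:
    """Return 'noindex' while pages are still dropping out, else 'disallow'."""
    if indexed_at_start <= 0:
        raise ValueError("indexed_at_start must be positive")
    # Integer arithmetic avoids float rounding exactly at the threshold.
    if indexed_now * 100 > indexed_at_start * good_enough_percent:
        return "noindex"   # keep serving NOINDEX_FOLLOW and keep monitoring
    return "disallow"      # good enough: now add ROBOTS_BLOCK to robots.txt


early = cleanup_phase(indexed_now=600_000, indexed_at_start=1_000_000)
late = cleanup_phase(indexed_now=20_000, indexed_at_start=1_000_000)
```

The point of keeping the two phases separate is that the crawler has to be able to fetch a page to see its noindex tag, so the disallow must wait until the pages have dropped out.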

Also, I think an important distinction to note here is that when you have a noindex, follow meta tag, that theoretically should drop the page out of Google’s index and it should continue to pass link equity through the links that are contained on that page, even though that page isn’t going to show up in the search results. However, Google has said that they treat a noindex, follow over the long term as a noindex, nofollow. Have you seen that?

Yup. I’ve heard that before. I’ll be honest. I’ve never really looked for it myself because I don’t want to leave noindex, follow in place super long term, because it’s usually not the right fix for what I’m trying to accomplish for my client. I haven’t seen that. It’s kind of similar to 302 redirects, where a 302 redirect is a temporary redirect, and the search engines say, and we’ve also seen through a lot of testing, that it doesn’t pass link equity. If you have 10 links pointing to one page and no links pointing to another page, and you 302 redirect the one with 10 links to the one with no links, those 10 links don’t count; that link equity does not count towards the new page’s ranking. I’ve also seen where if those 302 redirects were left in place long enough, I’m talking 12, 24 months, sometimes even longer, then they’ll start treating them as permanent redirects, and Google says that the link equity will be passed. I prefer to take my own results into my own hands and do the proper redirect. It seems like the search engines have tried to put this in place, but once again, it takes 12, 24 plus months, and I don’t have 12-24 months to wait for these links to count.

Actually yes, Google did used to say that 301 redirects were the only redirects that pass PageRank but in 2016, Gary Illyes from Google, who is Matt Cutts’ replacement essentially, said 30x redirects don’t lose PageRank anymore. In other words, all 301, 302, 307s pass PageRank.

Yeah. I don’t believe it. I haven’t seen evidence of that. I know he said that but I haven’t seen the evidence of it, to be honest with you.

Okay. Well, I had a really interesting conversation with Christoph Cemper from Link Research Tools on this very show, and we talked about the power of 302 redirects. He did a study finding that 302 redirects were actually preferable to 301s, and that they passed the rankings benefit over the long term, indefinitely, whereas the 301 redirects only passed the rankings benefit for a relatively short period of time, like weeks or months at best. That’s very interesting when you think that maybe the link equity in terms of PageRank is still passing, but there are other aspects of the rankings benefit that a link transfers that may or may not continue. That includes, for example, the context of the link: the anchor text. The hypothesis that Christoph is making, and I tend to believe him and I’ve seen it in anecdotal situations actually be beneficial, is this: imagine that the rankings benefit includes the context, the anchor text, and the PageRank, the link equity, and the link equity transfers with both 301 and 302. With the 302, the anchor text benefit actually continues to pass longer term than with a 301. It evaporates over time with a 301. This is what Christoph found and we talked about this at length in the episode. What this means is that a 302 redirect could actually be more beneficial for SEO in certain use cases; not if I want to clean up a lot of duplicate content. Certainly, if I’m doing that, I’m using 301 redirects because 302s have the side effect where the old URL still shows up in the Google search results, and that could be very problematic. If I’m trying to collapse a lot of duplicate content URLs and I don’t want to see those showing up in the search results anymore, I need to use the 301, not the 302. 
However, let’s say that I’m acquiring another company, and they have a website, and I want to transfer the link equity and the rankings benefit from the acquired website to my main website. Then a 302, instead of a 301, can actually be more beneficial. I actually had one of my clients take one of their acquired websites and switch it from a 301 to a 302, and they did see a benefit. Kind of mindblowing when you think about it. But this is the sort of stuff that I think just underlines the importance of always testing, not believing any particular tweet by any particular Googler, and not believing any SEO expert. Frankly, just testing. Testing, testing, testing, and things change. This seems to me like a loophole, the 302 actually being better at transferring the context of the link long term. That loophole will probably get closed if a lot of SEOs switch from using 301s to 302s. That would be my guess, but I think that most SEOs are so conditioned to always use 301s that I doubt we’re going to see en masse adoption of 302s by SEOs, and thus that loophole will continue to stay open.

I haven’t listened to Christoph’s episode. I know Christoph’s a super smart guy. Honestly, when it comes to links and redirects and that sort of thing, he is one of the few people that I really, really trust, and I know that he is always testing. Someday, I will have to go take a look at that, but it does seem it’s a pretty specific use case in terms of if you acquire another brand and then you try to move those rankings over, whether it’s branded searches and that sort of stuff. But as you said, there are all of these other side effects, such as the URLs staying in the index and the search engines continuing to crawl them, that you also have to take into account. I think your caveat is right: if you’re trying to clean up duplicate content, you’re trying to clean up your crawl, your crawl budget, get the search engines to focus on the right pages, then a 301 redirect is the proper way to go. Once again, there are very specific times, there are use cases where you should use a 302 instead of a 301, and when you should use a 307 instead of a 301. It’s all things in their right place. I’m definitely not going to say that you should only ever use a 301 if you’re redirecting a page. That’s not the case. If the page is coming back, use a 302, that sort of thing. 307s, those relate to server-side cache or browser cache. Those I personally haven’t tested, I haven’t seen what a 307 does versus the 301, but there are definitely times you use these specific redirects other than the 301. I would also say, if you’re not an SEO expert and your business is really at stake, don’t play with fire. You may disagree, Stephan, but I would say don’t play with fire. Use the 301 redirect when you’re redirecting, especially old pages to new pages.

I would agree. If you’re not savvy about SEO and you don’t have an SEO expert advising you, it’s safe to stick with a 301. I found the reference to Google saying, “Long-term noindex will lead to a nofollow.” That is John Mueller, who explained that in one of the webmaster videos. It was written about on Search Engine Roundtable, Barry Schwartz’s website. Again, that underlines the importance of staying on top of stuff, including the latest things that Google engineers and Google representatives are saying, as well as testing everything and making sure that what they say actually jibes with reality, because that’s not always the case.

Yup, exactly. I definitely pay attention and Gary, John, and Danny Sullivan now, those guys are fantastic and they give us a lot of good information, but they’re also a part of Google’s PR machine. I listen to them, I know those guys, and I trust them. I trust that they’re not intentionally misleading us but at the same time, a lot of them aren’t actually doing SEO on their own websites, so we need to see what actually works in the wild. As I said, I don’t distrust them, I actually trust those guys a lot, but it’s always important to take the advice that they’re giving and see how it actually interacts in the wild once you actually put it into practice.

Yeah. I think the expression is trust but verify.

Yeah, that actually is a very nice way to put it.

Okay, let’s distinguish crawl budget from index budget. Have you heard the term index budget being used? I rarely hear that ever being discussed but I hear crawl budget.

I don’t think I’ve heard index budget before, to be totally honest with you. I would love to hear your explanation of it.

It’s really not different from crawl budget in the concept that you have a certain amount of equity that is assigned to your website, and once you go past that, Google’s not really interested in going any further on your website. Crawl budget has to do with crawling. Googlebot will get to a point where it’s had enough. Maybe you have 10 million pages and hardly any links pointing to your site, so maybe hundreds of thousands of pages get crawled and that’s it, because you just don’t look like a trustworthy website; hardly anybody links to you. Index budget is the same concept, as I said, except that it relates to the index. You’re using crawl budget in terms of the way that you are interlinking pages of your site, the XML sitemaps, and you’re maybe noindexing a bunch of pages, which still have to get crawled and so are still using crawl budget. But then, let’s say that you are getting a lot of low-value pages indexed; that’s using up index budget. All pages that are getting indexed are using up index budget. You’re not using index budget when you’re noindexing; you’re using crawl budget but not index budget. You see how it would actually be beneficial to distinguish those two and say, “Well, sometimes crawl budget is being used when things are getting crawled but not indexed due to the noindex meta tag, and sometimes pages are in the index but they’re not getting crawled anymore because of the disallow directive,” and there is no reason that Google can find to actually drop that page from the index, so it’s still showing up in search results. It just has that message in the snippet part of the search listing saying that there’s no information available about this page (I forget what the latest messaging is that Google uses when a page is being disallowed), but it’s still showing up in the search results.

I got you. That’s basically when a page is blocked in robots.txt but Google hasn’t dropped it out yet so they show that no information is available for this page or whatever text that is.

Correct. It may stay there forever. That page will probably stay indefinitely until you noindex. This is another rookie mistake that SEOs make. They might fix the noindex, add that as a meta tag, and then they don’t think to remove the disallow. Google never finds the meta tag because it’s been disallowed from visiting the page and seeing any meta tags.

Exactly. The meta tag’s there but it’s never crawled. Same with canonicals. I see people do it all the time. They’re like, “I want to canonical this page to this other page but I’m also going to block it in robots.txt.” And then they’re like, “Why isn’t this page dropping from the index?” I’m like, “Because the search engine’s not crawling it. They’re not seeing that page because of that robots.txt block.” The noindex tag and the canonical, those are scalpels. They’re very specific directives, versus the robots.txt, which is a sledgehammer. You’re completely stopping the search engine from going to that section of the site. And a sledgehammer always trumps a scalpel.
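The conflict John describes can be checked mechanically. The following is a minimal sketch using Python’s standard `urllib.robotparser`; the `hidden_directives` helper, the sample robots.txt rules, and the example.com URLs are all assumptions made up for illustration, and the boolean in `pages` simply stands for “this page carries a noindex or canonical tag.”

```python
from urllib.robotparser import RobotFileParser


def hidden_directives(robots_txt: str, pages: dict, user_agent: str = "Googlebot") -> list:
    """Return URLs whose noindex/canonical tag can't be seen because robots.txt blocks them."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    conflicts = []
    for url, has_directive in pages.items():
        # The tag only matters if the crawler is allowed to fetch the page and read it.
        if has_directive and not parser.can_fetch(user_agent, url):
            conflicts.append(url)
    return conflicts


# Hypothetical robots.txt: the whole /tags/ section is disallowed.
robots = """User-agent: *
Disallow: /tags/
"""

# Hypothetical pages; True means the page has a noindex or canonical tag on it.
pages = {
    "https://example.com/tags/yellow-shoes": True,   # blocked AND tagged: conflict
    "https://example.com/shoes/yellow": True,        # crawlable, so the tag is seen
    "https://example.com/tags/old": False,           # blocked, but no tag to hide
}

conflicts = hidden_directives(robots, pages)
```

Any URL that ends up in `conflicts` is a page where the sledgehammer is hiding the scalpel: the disallow would need to be lifted before the tag can do anything.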

I tend to think of the URL removal tool in Google Search Console as the nuclear button.

Yeah, totally.

Although it is only temporary. It’s 60 days or something like that. I rarely recommend that a client use the URL removal tool. They should be setting things up properly with 301s, or the 404s, or the canonical tags, or whatever is the correct long-term approach and not trying to get a short-term fix with the URL removal tool.

Totally agree.

Let’s move on from this super geeky topic of canonicals, and 301s, and all that (we’re probably making people’s brains hurt here) and switch to a fun topic that can also be important for enterprise SEO, and that’s link building. How do you do that for a large-scale website with millions of pages? Do you try to get most of the links coming to the home page, or at least to top-level pages like category-level pages, or do you try to get deep, deep links to many lower-level pages, maybe thousands upon thousands or tens of thousands of these pages?

Yeah, that’s a great, great question there, Stephan. I think that, like most things in SEO, it depends. My hope is that when a website has millions of pages that are also indexed, the site is going to be pretty strong already. It’s going to have, whatever you want to call it, Domain Authority. Everyone has their own metric of how strong a website is. Moz’s metric is still the industry standard, and it’s not the metric to measure success off of by any means at all. It’s a directional metric of how strong a website is.

Is that your preferred metric for link power or link equity?

I would say it’s the industry-accepted one for the most part. Other brands have tried to have their own. I know that Christoph’s tool, for example, has its own metric. Majestic has their own metric. Ahrefs and SEMrush, they all have their own metric. But Domain Authority is the one that I use because it’s the one most people understand the best or at least are familiar with. That’s the one that I look at, just as a quick litmus test, “Okay, how strong is this website?” and then you have to dig deeper. That goes back to the question: do you build links to your home page? To your category pages? Your SKUs? Your subcategories? Your sub-subcategories, three levels deep? It depends on the site. It depends on your competition. It also depends on where you need more links. A lot of SEOs, I would say, in the past were able to use links, honestly, as a fix for not having a good information architecture and not having good technical SEO. That’s just simply not possible anymore. Ranking factors have expanded, and the ranking factors that work well have expanded as well. Links are very much, I would say, still the strongest ranking factor, but search engines take into account site speed, length and depth of content, and all those things as well. We can’t just look at links, especially on large websites. It’s just simply not possible to build links to tens of thousands of pages, it’s really not. Say there are a lot of links coming to the home page because of PR, and we’ve built up this information architecture where these pages are now way closer to the root, way closer to the home page. Our competitors may still be outranking us even though their overall Domain Authority isn’t as strong, because (and here we get to talk about Page Authority) their yellow shoes page has more links and a higher Page Authority than our yellow shoes page.
If we want to be competitive, we need to bring up that Page Authority. That means building links to that specific page, or to a category page; when you build more links to a category page, then through your information architecture it trickles down to your subcategories, which have less volume but are also less competitive, so you can rank better. Really, it depends on the individual website and what you need to do to rank those sections of the site and specific head terms as well.

And just to clarify for our listeners, Page Authority is another Moz metric. I would agree with you that Domain Authority and Page Authority are the more industry-norm type of link metrics, the ones that are usually talked about. I did not use those two metrics until recently, when Moz sunsetted the Open Site Explorer tool, which had been really bad, frankly, for years, and replaced it with Link Explorer.

And they would agree with that.

Yeah. Link Explorer is a complete revamp of Open Site Explorer. Much more robust, much better algorithms, and crawl of the web and everything, and the metrics are better. Now MozTrust and MozRank are not better. Those metrics we need to disregard. But Page Authority and Domain Authority are better now. I concur with you that those are good metrics to use but I would have disagreed with you six months ago.

Totally, yeah. I think the challenge with any of these metrics, Domain Authority, Page Authority, etc., is that for a long time they didn’t do a good job of explaining them. What is Domain Authority? What is Page Authority? They tried to, but you also couldn’t see the trends over time, or see them in relation to the rest of the web, because these metrics are always moving. And I think a lot of marketers, a lot of SEOs, do themselves a disservice because they’re basically selling improved Domain Authority. I get people who come to me at Credo and they’re like, “We need to improve our Domain Authority.” I’m like, “No. We need to improve your traffic, your business, and grow your revenue, not improve your Domain Authority.” They think improving their Domain Authority is going to get them more business. I’m like, “You’re measuring the wrong things here.” But I totally agree that the metrics are a lot better now. They did have an issue recently where they rolled it back. I believe it was last week; we’re recording this near the end of June. They actually rolled it back last week because there was basically some junk in their crawl of the web. They dramatically increased the size of their crawl as well, so they’re dealing with some scale problems, and they rolled it back simply because they had a lot of junk in there from different sites like Tumblr and that sort of thing, which was basically skewing the metric across the web. They are very much still working on it. We need to keep all these caveats in mind. If you’re working with clients, you also need to be educating them about that. But I do think it’s useful, and it sounds like you agree, to have a metric like this where we can at least take a look, get a directional idea, and have a common place to start conversations.

Yeah. One thing I think is missing, though, with Domain Authority and Page Authority is the trust component. MozTrust was a way of looking at the trustworthiness of a particular website. But now that metric is not being updated or revamped with the revamp of Open Site Explorer into Link Explorer. That, I think, is a gap. It’s one that Majestic doesn’t have a problem with. They have Trust Flow and Citation Flow. Citation Flow is essentially an approximation of PageRank and Trust Flow is an approximation of TrustRank, and that’s, I think, quite valuable. We also have that in Link Research Tools: LRT Trust and LRT Power, Power being the importance and Trust being the trust component. It’s really important for us to understand that trust is different from authority. It’s different from importance, from power, because if you have very few trustworthy websites linking to you, you could have a lot of important sites linking to you and you’re still going to lose.

Totally, yeah. I actually did not know that they are not updating MozTrust with this update, so that’s really good to know.

Yeah. MozTrust and MozRank. Rand was doing, I forget if it was a blog post, or a video, or something, talking about the benefits of Link Explorer, and one of them is that they fully revamped Domain Authority and Page Authority. Those two metrics do have trust baked in, but it’s not separated out. I can’t tell how trustworthy one site, one domain, is in comparison to another. It’s all grouped together. Same thing with Ahrefs. You have DR and you have UR: Domain Rating and URL Rating, UR at the page level, DR at the domain level. I can’t see any distinction between trust and importance. That’s why I’m always going to Link Research Tools and to Majestic for my trust metrics and not just relying on the metrics from Moz or from Ahrefs.

Totally. I think that’s super wise. We have all these different tools at our disposal and we all subscribe to all of them. We can all see that there are these different numbers. I think it’s important to take all of them into account and be able to say, “Why are these two different?” and maybe use Domain Authority when you’re speaking with your client, once you’ve educated them about what it means and what they should really be thinking about when you talk about it. But as you said, maybe use the trust metric from Link Research Tools or something like that. There are very smart people working on all of these tools and no one has it exactly nailed.

How many tools do you have that are normally part of an engagement? Are we talking dozens or do you have a go-to six or seven tools that you always use?

Yeah. I have my go-to tools for sure. I do still use Moz. Their new Link Explorer is fantastic. I still set up a campaign for all of my clients and track some rankings in there. When I’m auditing, combination of SEMrush and Ahrefs, I subscribe to both of those. For crawling, I use a combination of Screaming Frog and DeepCrawl. I recently just started using Sitebulb as well which is fantastic.

What makes that so fantastic?

Sitebulb is similar to Screaming Frog in that it is a desktop-based crawler. It uses your computer’s RAM. You can also throw it on an AWS instance or something like that and crawl from there. It is CPU-based and it crawls similarly to Screaming Frog, as far as I’m aware. Basically, Screaming Frog gives you a lot of data, which is fantastic, and you can take that, put it in Excel, and slice it and dice it any way that you want. Sitebulb gives you a lot more graphs and basically puts issues into different buckets, very similar to a DeepCrawl or Botify. They don’t just give you the data, they give you the insights as well, so depending on the person using it, that can be super valuable. There’s a lot of the same data. You can still pull the same data out of Sitebulb, but they also give you a lot more visualizations and that sort of stuff, which makes it easier. You don’t have to take the data, put it into Excel, and create your own charts. You can take screenshots of the charts within Sitebulb and pop them into a client deliverable for a point or a recommendation that you’re making. Once again, I use all of them and they all have different features that are fantastic. For example, say you need to build a focused sitemap: there’s a section of the site that you’ve noindexed and you want to build a sitemap for just that section, so you can submit it, search engines go back and crawl it quickly and drop those pages, and then you remove that sitemap. Screaming Frog has an amazing feature for that. Once again, different tools for different needs.
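For readers who want to see what a focused sitemap amounts to, here is a minimal sketch using only Python’s standard library. It is not how Screaming Frog does it; the `build_focused_sitemap` name and the example.com URLs are assumptions for illustration, and the list of noindexed URLs would come from your own crawl export.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_focused_sitemap(urls) -> str:
    """Build a sitemap XML string that lists only the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical list of pages that were just noindexed and need a prompt recrawl.
noindexed_urls = [
    "https://example.com/tags/yellow-shoes",
    "https://example.com/tags/old",
]

sitemap_xml = build_focused_sitemap(noindexed_urls)
```

The resulting string can be saved as its own file and submitted temporarily, then removed once the pages have dropped out, as John describes. Keep in mind that the sitemap protocol caps a single file at 50,000 URLs, so a large section would need to be split across several files.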

Yeah. They also have a great log analyzer which is a completely separate tool.

They do. It’s fantastic.

Screaming Frog is really great. Okay, I interrupted you in the middle of your list of favorite tools. What else you got?

Let’s see. Those are the main ones that I use.

What about keyword research? What are you using for keyword research?

Keyword research, a combination of SEMrush and Ahrefs. But especially on a larger, more established website, Google Search Console is a good place to start as well, and basically I’m trying to get as much data as I can from all these different places.

How about Moz Keyword Explorer? Are you using that one?

I don’t use that a ton, to be honest with you.

I would try that one. Give that one a fair go because I think it’s an excellent tool.

I’ve used it off and on, and I actually really liked it. Russ has done a fantastic job with that, with Jumpshot data, actual clickstream data, which I’m sure they have spent a ton of money on. The interface is definitely a lot better. It’s a lot easier to use. But to be honest with you, when I’m working on these websites that have hundreds of thousands, if not millions, of pages in the index, I find that the scale of data and the way Ahrefs and SEMrush are set up fits me better. But I’ve definitely used Moz’s Keyword Explorer for building more focused keyword lists and that sort of stuff, and I found it to be useful for sure. I love how you can sort down to questions and that sort of stuff in order to basically categorize your keywords: transactional versus informational sorts of queries. It definitely has its really solid features as well. As I said, I use it. I use all these different tools for different things.

What you’re saying is you’re using for keyword research on large-scale websites, SEMrush and Ahrefs. I’d also throw Searchmetrics into the mix there too. It’s excellent for large-scale keyword research pulling hundreds of thousands or even millions of keywords from a competitor’s website, just like SEMrush does. It’s quite good as well.

Totally. I haven’t used them in years but I know their tools are super solid.

And they’ve really innovated, like their Topic Explorer, their Content Editor, the whole content suite, content experience is amazing. So anyway, let’s go back to the link building.

I’ll take a second look. I’m sure Marcus will be happy to hear that you gave him a good plug as well.

Yes, and I have actually interviewed him on the show too. That was a great interview.

Yeah. Great guy.

Yeah. He is awesome. Okay, so back to the link building. I took you on this huge detour to talk about tools. Thank you for that. What do you tell a client who says, “I need to rank for [insert whatever head term or trophy term they’re obsessed about],” and it’s a category page, or maybe even a subcategory page, that would be the most appropriate page to try and rank for that keyword? You could either link build to the home page and then send some link equity on to that page, maybe by making it a featured subcategory or something featured on the home page, or you could drive link equity directly to that category or subcategory page with deep links. What would you do? What would you recommend if you had to pick one or the other?

Well, first I would push back and ask them and ask the data if that is indeed the most valuable term that they can rank for. As you and I both know, a lot of executives say, “Oh we need to rank for this term,” and I look at the data and I’m like…

Yeah, nobody’s searching for that.

Yeah. No one is searching for that, or it’s definitely not the highest volume, or, looking at their own internal data, it’s not converting very well. You know that even if you get them ranking for it, they’re still not going to be happy. We have a duty to our clients to actually show them the real data and help them make better decisions. Sometimes they’re going to come back and say, “No, we just want to rank for this thing,” in which case you decide, “Do you want to do that or not?” We, as consultants, can also pick our clients, which is good, depending on how well educated they are and whether it’s the kind of work you do best, that sort of thing. Let’s assume that it is one of the best terms that they can rank for. It has good volume, it converts well, and the question becomes, “Do you build links to the home page, which then trickles the link equity down to the category page, or do you build links directly to the category page?” I’m talking in broad strokes and generalities here, but I prioritize building links to that specific category page, because usually the competition has links to their category page and so they’re going to be ranking better than you because of that. We’re basically trying to become competitive there. If we’re just focusing on one specific keyword, we build to the page that we’re targeting that keyword on. But like I said earlier, that’s really how I work.

Okay. I totally agree with you on that. I think that’s a more impactful strategy and you look at many of these websites that are large-scale marketplaces or large-scale e-commerce sites, and the category pages suck. They’re not link-worthy. They’re just a list of product thumbnails, product names, prices, and buy now buttons. What do you do to make that a link-worthy page?

That’s a million dollar question right there, maybe literally. I’ve seen people building out longer text-based guides on their main category pages. Some of them will have videos, category-focused videos. Think about an outdoors website, like an REI or something like that. They have a huge catalogue, and they have a whole learn section that ranks really, really well for all these “How to pick a backpacking stove” sorts of queries. They should put that video onto their backpacking stoves category page and basically help people directly on that page.

It’s funny you actually mentioned REI because they were a client of mine a while back. They went against my advice and went from having a category-level page of snowboarding and a category-level page of skiing, like skier, and they made a snow sports category, they demoted the skiing and snowboarding to a subcategory level against my advice because then it lowered the link equity that these pages were inheriting.

Interesting and was there a negative effect there?

Oh yeah, they ranked more poorly for those keywords, not surprisingly.

Totally, yeah. When you’re building information architecture, when it comes to SEO, a lot of us in the industry just talk about rankings and traffic and that sort of thing, but there’s also what’s right for the user. Does snow sports make sense as a category page? Maybe it does, but then you also have to take into account that if you move these pages down a level in the architecture, you’re probably going to take a hit on rankings and traffic. How do you mitigate that? Sometimes just by building links. Sometimes by building internal links, speeding up your pages; there are a lot of things that you can do. It’s never as straightforward as category or subcategory, what page should this be on or what level should this be on, but you have to take into account, as you just said, that your rankings might drop if you do this. It’s our duty as consultants to educate our clients about that, show them examples of where it’s happened before, and help them make the right decision. Ultimately, it’s the business owner’s decision, or whoever’s. Get it all in writing, put it all in writing. Don’t just do it on a phone call. If you say it on a phone call, follow up with an email so you can play it back. They’re like, “Why did these rankings drop?” “Yeah. As I told you in this email on April 16, 2015, if you do this, this will happen.”
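The effect of demoting a page one level can be illustrated with a toy calculation. The sketch below runs the classic published PageRank formula over two made-up link graphs; the page names, the graph shapes, and the 0.85 damping factor are assumptions for illustration, and real rankings depend on far more than this one signal.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Power-iteration PageRank over a dict of page -> list of pages it links to."""
    pages = list(links)
    rank = {page: 1.0 / len(pages) for page in pages}
    for _ in range(iterations):
        # Every page starts each round with the small "random jump" share.
        new_rank = {page: (1.0 - damping) / len(pages) for page in pages}
        for page, outlinks in links.items():
            # A page splits its damped score evenly among the pages it links to.
            share = damping * rank[page] / len(outlinks)
            for target in outlinks:
                new_rank[target] += share
        rank = new_rank
    return rank


# Flat architecture: skiing and snowboarding are linked straight from the home page.
flat = {
    "home": ["skiing", "snowboarding", "camping"],
    "skiing": ["home"],
    "snowboarding": ["home"],
    "camping": ["home"],
}

# Deeper architecture: both pages now sit under a new snow_sports category.
deep = {
    "home": ["snow_sports", "camping"],
    "snow_sports": ["home", "skiing", "snowboarding"],
    "skiing": ["home"],
    "snowboarding": ["home"],
    "camping": ["home"],
}

flat_rank = pagerank(flat)
deep_rank = pagerank(deep)
```

In this toy setup, `deep_rank["skiing"]` comes out noticeably lower than `flat_rank["skiing"]`, because the home page’s score now has to pass through an extra page that splits it again. That is the kind of hit the mitigation tactics above (external links, internal links) are meant to offset.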

Yeah. Another thing that I didn’t love about the approach they took is, I don’t know about you, but I don’t normally talk about snow sports. I’m a downhill skier and that kind of terminology is not something I use. It’s just like when I had Kohl’s as a client for a while years ago, and they were fixated on the term kitchen electrics as a keyword they wanted to rank for. Yeah, nobody searches for that, nobody even uses that term, nobody even knows what it means. It’s a small kitchen appliance, a countertop type of appliance: toaster, food processor.

That would be like Zillow. I believe at Zillow they called their individual listing pages PDPs, property detail pages. Imagine trying to rank for property detail pages. It’s like, “What? No one’s searching for that.” They would never do that; they’re fantastic at SEO, they’re a fantastic team over there. But it’s that same sort of question: is this just a company-specific thing, or is it something that your users are actually searching for? It’s the same as “this is the solution that you have” versus “this is the problem that you’re solving,” if we’re talking about a SaaS company or something like that.

For sure. Alright, last question for you. Say a listener is looking to hire an SEO consultant like yourself or myself, or maybe even an agency, and they want not just the advice but somebody to do all the work to implement everything, or as much of the work as possible. Some of it they might need their web team or their systems administrator to implement, whatever, but a lot of stuff they’re just going to want the SEO agency to do. What would you advise a listener to do to avoid getting snookered by an agency and to hire the right agency, the right fit for the right price?

That’s a great question. Maybe a shameless plug, but basically that’s what I do. I help businesses hire the right agency or consultant for their specific needs. Talk to me. I would love to help you out. I’ve helped 1500-plus businesses do that over the last few years, and I’ve gotten pretty good at it. But there are a lot of different questions you can ask. First of all, you need to figure out whether you need a consultant or an agency. Say you need content to be implemented, you need links to be built, you need technical fixes done on your website. Depending on the platform that you’re on, if you’re on a WordPress website, a consultant might be able to do some of that, depending on what the scale of the changes is, but if we’re talking about building out new post types and that sort of stuff, SEOs are not web developers. Also, web developers are not SEOs. Most SEOs are not web developers, let’s put it that way. You need to understand, basically, the limitations of the people that you’re hiring. Basically, what I say is, if you need strategy and you need someone to help you out with the things you’re implementing yourself on the side of your business, a consultant is fantastic. Some consultants will have freelancers they work with, agencies they white label content work or link building work to, that sort of thing, web developers they work with. You need to ask those specific questions. Don’t hire them and then be like, “Wait, you can actually implement this stuff on my site?” and they’re like, “No. I’m not a web developer.” You need to ask that question. If you need more hands on deck, you just need more people helping you implement things, or you have multiple channels that you need done (we’re talking SEO, potentially AdWords, PPC, social advertising, that sort of stuff), an agency is likely the right choice there. Don’t be scared off thinking that an agency is going to be more expensive than a consultant or a freelancer.
Oftentimes, agencies are cheaper hourly than a consultant, and multiple studies have shown that. That’s something to keep in mind as well. And then, when you’re having these conversations and you’ve basically figured out, “Okay, we need to hire an agency, not a consultant,” it’s about asking them, “What is your process? What does an engagement look like? Where do we start? What are the specific things we’re going to be doing?” When it comes to budget, I often ask people, “What’s the budget you have set aside for this?” and they go, “Well, that depends on what we get.” You need to tell the client, “Okay, it’s going to cost X amount per month and this is basically where the budget’s going to go. Some of this is going to content creation, which we do at this amount per article. If we’re going to do a technical audit, which takes about this many hours, this first month is going to go to that,” and really help them understand what it is that they’re going to be getting for their spend. If you’re looking to hire someone, ask them specifically, “Okay, you’re quoting me $3000 a month for the next six months. What’s going to be happening in those months? What are the specific things that we’re going to be working on?”

Yeah. Is there a particular price range that a typical agency will fall within, like $5000-$10,000 a month retainer or $7500 or $15,000? What’s typical of what you’ve seen?

It depends on the type of business that they’re working with. If you’re a smaller business, an SMB doing, I don’t know, let’s say $150,000-$500,000 a year in revenue, there are a lot of agencies out there that can do really good work at the $2500, $3000 a month level. Some even less. It’s really hard to get really good work done for less than about $1500 a month. If someone’s quoting you less than $1500 a month, I would ask why. Again, it could be for a multitude of reasons. There are also a lot of agencies, especially the more well-known agencies in our industry, the Distilleds, Seer Interactives, and Portents of the world, and yeah, they’re going to charge you. Their minimum probably starts around $6000, $8000, $10,000 a month and can go up from there. I know some of them work on SEO projects worth multiple tens of thousands of dollars a month. There’s strategy, there’s content creation, there’s link acquisition, there’s all of that stuff going on. Really, it’s kind of like buying a house and renovating a house. You can spend as much as you want and as much as you can, and you can also go cheap. But once again, you get what you pay for, including the quality of what you paid for.

You do get what you pay for.

You 100% do. A consultant like myself, for example, my minimum, usually for a very focused sort of project, is $4000 a month. But once again, I’m targeting very specific businesses. I’m not going to work with a local restaurant. They’re not going to be able to afford me, nor am I going to be able to get them the results they need, because I don’t focus on location-based SEO. A restaurant shouldn’t hire me. I’m totally good saying that. But I know some very good agencies that do fantastic work for location-based businesses like restaurants. So the question is not only whether you can afford them, but whether they have specific experience working with the kind of business that you are, the kind of website that you have, to drive you more clients or customers, whatever word it is that you use.

Yeah, it’s all good stuff. Well, we’re definitely out of time. That was fantastic. Thank you, John. If folks wanted to contact you directly to get help with finding an agency to hire, or if they wanted to ask you an SEO question whatever, what’s the best place we should send folks?

getcredo.com is my business website and you can find me from there. I’m on Twitter as well @dohertyjf but the best spot is my business website.

Alright. Perfect. Well, thank you, John. Thank you, listeners. Now it’s time to implement some of this geeky stuff that we talked about. This is your host, Stephan Spencer, signing off. We’ll catch you on the next episode of Marketing Speak.

Your Checklist of Actions to Take

- Focus on keyword buckets rather than specific phrases when dealing with a large-scale enterprise site.

- Keep my XML sitemaps up to date so that Google will recognize my important pages.

- Be sure to correctly tag my pages. Overusing tags can look spammy.

- Properly index my products by determining their category, subcategory, and sub-subcategory.

- Only rank the highly important pages on a large-scale website. If a site has more than a thousand pages, chances are not every page needs to be indexed.

- Don’t allow freeform tag pages to form if I am setting up a new website or moving onto another domain.

- Create long tail keywords. Over time, long tail keywords have a ton of search volume and high conversions.

- Don’t use robots.txt when taking pages out of the index. I should noindex them first using the robots meta tag, with either “noindex, follow” or “noindex, nofollow”.

- Use the 301 (not the 302) if I’m trying to collapse a lot of duplicate content URLs and hide them from search results.

- Make it my duty as a consultant to educate my clients about making the right SEO decisions. Show them examples of how to avoid mistakes and how to make them right.

About the Host

STEPHAN SPENCER

Since coming into his own power and having a life-changing spiritual awakening, Stephan is on a mission. He is devoted to curiosity, reason, wonder, and most importantly, a connection with God and the unseen world. He has one agenda: revealing light in everything he does. A self-proclaimed geek who went on to pioneer the world of SEO and make a name for himself in the top echelons of marketing circles, Stephan’s journey has taken him from one of career ambition to soul searching and spiritual awakening.

Stephan has created and sold businesses, gone on spiritual quests, and explored the world with Tony Robbins as a part of Tony’s “Platinum Partnership.” He went through a radical personal transformation – from an introverted outlier to a leader in business and personal development.

About the Guest

JOHN DOHERTY

John is the founder of GetCredo.com. He’s helped over 1500 businesses find a quality digital marketing provider to work with. He’s personally consulted on SEO with some of the largest websites online.
