If you’re a faithful listener of the show, you probably remember the remarkable episode from a few weeks ago with Christoph Cemper. In that episode, we discussed analyzing your link profile, different types of redirects, and much more. Christoph had so much wisdom to share that we decided to do a part 2 so he can bring you more of his incredibly useful knowledge!

Christoph created the remarkable LinkResearchTools, an amazing toolset with lots of incredibly useful features that I use frequently. In this second half of the conversation, we spend more time digging into 302 versus 301 redirects. We then go into depth about what to do if you’ve received penalties (whether algorithmic or manual) and how to perform an effective cleanup process. In the lightning round at the end, Christoph offers even more amazing value, including a chance to win a free spot at his upcoming training event in Las Vegas.

In this Episode

- [01:15] – Stephan starts things off with a quick recap of what he and Christoph talked about in the first part of their conversation. If you missed that and want to hear it, check it out here!

- [03:19] – Christoph picks up this episode where we left off with the previous part of their conversation, exploring the differences between 302 and 301 redirects.

- [06:12] – On average, how long does it take for 301 redirects to lose their ability to pass on rankings benefits?

- [08:31] – Christoph clarifies how the behavior of a 301 is exactly the opposite of what you would expect (and want) from a permanent redirect.

- [09:48] – Stephan restates what Christoph has been saying to make sure it’s clear for listeners.

- [11:32] – The test that Christoph did on 301 versus 302 redirects was a small-scale test, he explains, and talks about its scope.

- [12:16] – Stephan talks about a client he had who had used a 301 redirect. Switching to a 302 gave the client a rankings benefit.

- [15:25] – If you got hit with a penalty, whether manual or algorithmic, what is the procedure for cleanup?

- [19:38] – Stephan pulls things back for a moment to clarify and simplify what Christoph has been saying to ensure that it’s clear for listeners.

- [21:53] – The idea of link risk management is critically important, Stephan reveals, and explains why.

- [23:32] – Christoph talks about a potential threat to sites in which someone sends out emails asking sites to remove good links to your site.

- [27:01] – What’s the difference in process if you were penalized with manual action versus an algorithmic penalty because of links? As he answers, Christoph goes into great depth about analyzing links by risk.

- [35:22] – You can apply your own knowledge to the anchor text, Stephan explains, and discusses the importance of using Link Detox (DTOX). He and Christoph then discuss diversity in links.

- [38:09] – Does Christoph think that the risk of manual actions is higher now because of real-time Penguin?

- [40:00] – Stephan brings up the rest of the cleanup process once you’ve experienced a penalty, whether manual or algorithmic. Christoph then walks us through this process.

- [44:48] – We learn more about Pitchbox.

- [45:36] – Christoph discusses what to do next in the process of link cleanup and removal.

- [49:10] – Stephan talks about what happens once you’ve created your disavow list for Google.

- [52:37] – We move into a lightning round. First, Christoph talks about expired domains and how PageRank is affected.

- [55:28] – If you acquire an ongoing business and don’t want the PageRank to reset to 0, what are some things you can do to decrease the likelihood of that happening?

- [57:27] – Christoph talks about whether you get special credit for links from .gov or .edu sites.

- [60:00] – Does Christoph have a browser toolbar that he wants to plug for giving data on power and trust? You can find the tools he discusses in his answer, and many more, at this link.

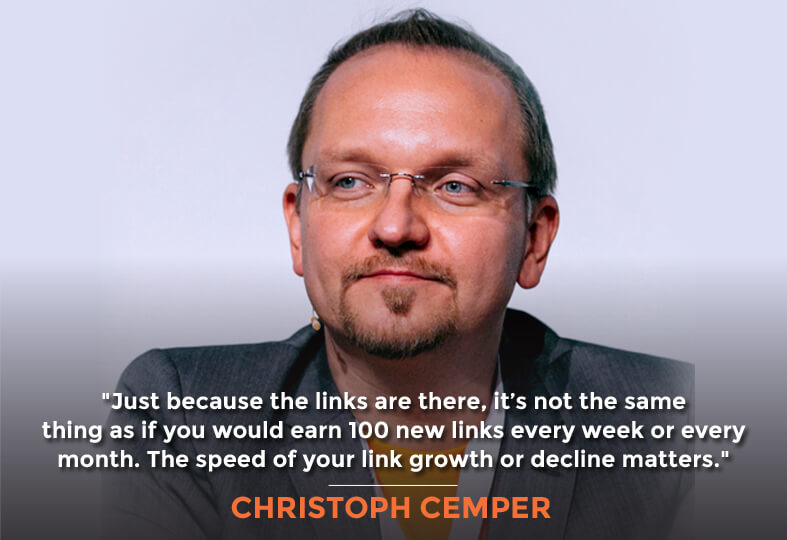

- [62:57] – Christoph explains why link velocity is an important thing to measure.

- [64:40] – Stephan talks about an article he wrote that goes through some of the most important tools in the LinkResearchTools toolset.

- [65:22] – Why should we look for hubs? Christoph explains that having good links is the equivalent to hanging out with the cool kids.

- [67:38] – Christoph talks about his LRT Associate Training program. He also offers a free spot at the upcoming Las Vegas training event to one lucky Marketing Speak listener, and offers details on how to win it.

—

Transcript

Hello and welcome to Marketing Speak. I’m your host Stephan Spencer, and we have Christoph Cemper again for part two, Link Building Secrets of the Masters. I’m super excited to do a two-part interview with Christoph because he is a genius at link building and link analysis. He’s the founder of Link Research Tools. It’s such an amazing toolset that includes Link Detox and Competitive Landscape Analyzer, Backlink Profiler, so many amazing tools, Link Alerts, etc. I use it all the time. Welcome, Christoph.

Hey Stephan, thanks for having me again. I’m proud to be one of your multi-series interviews.

I think you’re maybe the only one so far.

Yet.

That’s right. We have a lot to talk about. We only got halfway through the interview so we are back. Let’s just do a quick recap. We talked about what a presell page is, and about PBNs, Private Blog Networks, and how they’re dangerous. We talked about the footprint that Google can pick up on if you are not squeaky clean. We talked about things like if you are a repeat offender, you have a much greater chance of getting nailed. We talked about the differences between Penguin before 4.0 and Penguin 4.0 now, in terms of whether it truly just devalues links and no longer demotes sites. We talked a little bit about a case study you’re working on for Hertz and how this is still a high-risk sort of situation. It’s not that the links are not going to hurt you, that does not count. We talked about the danger of paying too much attention to PA and DA, Page Authority and Domain Authority, because those are easily gamed metrics. We also talked about 302 versus 301s and that bombshell you dropped at SMX East saying that 302 is actually better for SEO. We talked about a lot.

I would just say, that’s a lot to write.

Yeah, we definitely dropped some knowledge bombs. First of all, let’s pick up where we left off on the 302 versus 301s because that is such a critical issue and I think some folks were confused by the PowerPoint deck that you had uploaded to SlideShare that you presented at SMX East. What are these orange lines and the blue lines? What does that mean? Let’s pick up there and then I’ll ask you some new questions. So the 302 versus 301, 302 is better now. What gets transferred with a 302 that doesn’t get transferred with a 301 or stops getting transferred after a period of time?

Rankings. My test was isolated, to try to answer the question, what type of redirect do I need to use to, of course, preserve my rankings? What type of redirect kicks in the fastest and stays the longest? Just because the HTTP spec calls the 301 the permanent redirect doesn’t mean Google implemented it like that or kept it like that for 20 years. That’s a 20-year-old specification. But the question was which type of redirect will give me the best benefits? When you think about benefits, you must be aware that there are people who use redirects to redirect pages to other pages to get those rankings. Sometimes they buy websites, whole websites, and then redirect the important pages for their business, for their money keywords, to their actual money site. Of course, Google calls this manipulation, they might even call it black hat. I don’t know. In the last years, it seems like everything that SEO was, everything that we did to try to improve our rankings, has been labeled as not okay.

Manipulation.

Manipulation. But in my opinion, basically, that’s what SEO is about. SEO is not just following Google guidelines, which are important to follow on one hand, but then you want to take it extra. You want to ask the extra questions. You want to go beyond what everyone else is doing. These are redirects. A simple application for that would be just to redirect all your traffic and the links that got you that traffic to your website. For example, we’ve got the old agency website, cemper.com. We still have it there but a lot of the content on there was about Cemper and me. When people see me online, in a video, in a podcast like here, in an interview, they might Google my name. The last thing I want to show up is that old agency website, so I’m redirecting the home page to my target, which is Link Research Tools, my company, my business, my solution to increase traffic for websites. People should find that. It’s basically a brand migration also. People change the brand name, then of course they still want to be found for the old brand name. The shocking result of the many months of testing was that I saw 301 redirects lose the ability to pass on these rankings.

After what time period? How many weeks, how many months on average?

It was merely two months. It was a long-term test where I did multiple tests. It was basically after two months. Not only did the 301 take a little bit longer to set in, to actually pass it on, it just stopped doing that. There were some glitches in between where it didn’t work and then after a couple of weeks it worked again. But basically, to me, it looked like you put this thing in place, you wait for the effect and then all is good. Then it looked like the power went away, which is interesting because at that point in time, after two months, you don’t really check on those keywords. Even if you do, and you see a drop, you might not hold that redirect responsible for it. My theory here is that the 301 redirects, the so-called permanent redirects, are not so permanent after all. By using them, just like with meta tags and meta keywords, we give Google the information that we want this to be permanent in their system. Because we tell Google our intention for this to be permanent, Google, or the Google code, the engineers, the software behind the Google search engine, can do something with that information and apply some timeouts, apply some damping filters like those we all know from link analysis. A lot of patents talk about damping filters to fight link manipulation. Link manipulation is just the same thing as redirect manipulation. All of these off-page factors can be used to manipulate, to improve, to change rankings. It’s in Google’s interest, of course, to apply some kind of filter, some kind of delays, damping filters to whatever SEOs use to manipulate rankings.

Let me just get this straight. You’re saying that after a couple of months, it’s not unusual for a 301 redirect to not be passing the rankings benefit? Where does that go? Back to the source page? It goes from the target page back to the source URL and that one shows up in the search results instead?

Exactly. Actually, it’s the exact opposite of what you expected from a permanent redirect.

Yeah, and what you want.

Exactly, what you want, yeah. The setup was a live setup with artificial keyword phrases, where each keyword phrase that I used existed on only one page on the whole web. I checked it with Google: I made up a fantasy word, and it only existed on this one page. Therefore, I could measure whether the other page, the one the redirect points to, shows up in the results for it. The fantasy word was not in the content of that page; it was only in the link or in the redirect. With links, you put that keyword into the anchor text. In this case, the link going to that page gave that page the relevance for that keyword. What we actually tested is whether a page that started ranking for the keyword because of such a link could pass that ranking on to another page through a redirect. It’s one indirection more, because redirects are basically indirections here. I know this sounds confusing. Sorry for that.

We’ll restate some of this to make sure that our listeners get this. You made up a word that didn’t appear on the internet anywhere. You had a target page that you were redirecting to, and you had a link to a source URL where the redirect was located, and the anchor text of that link included the fantasy word you made up.

Exactly.

What needs to happen is that the fantasy word gets associated with the page it’s being linked to, but because there’s a hop there with the redirect, you now have an additional variable in the equation. If it’s a 301 permanent redirect, the rankings benefit for that fantasy keyword does not stay associated long term with the new target URL that you’re redirecting to, but with the 302 it does.

Exactly.

Okay, good. I’m glad that I was able to restate that for you.
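For readers who have not set up redirects themselves, the only technical difference between the two redirect types discussed above is the HTTP status code sent alongside the `Location` header. The following is a minimal sketch in Python, not the setup Christoph used in his test; the function name `build_redirect` and the example URL are assumptions made for illustration.

```python
from http import HTTPStatus


def build_redirect(target_url: str, permanent: bool) -> tuple[int, dict[str, str]]:
    """Return the status code and headers for a redirect response.

    permanent=True  -> 301 Moved Permanently
    permanent=False -> 302 Found (the "temporary" redirect)
    """
    status = HTTPStatus.MOVED_PERMANENTLY if permanent else HTTPStatus.FOUND
    # Both redirect types tell the client where to go via the Location header.
    return int(status), {"Location": target_url}


if __name__ == "__main__":
    # Hypothetical target URL, used only for illustration.
    print(build_redirect("https://www.example.com/new-page", permanent=False))
```

Switching a redirect from 301 to 302, as described in the conversation, therefore changes one status code and nothing else about where visitors end up.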

Yeah. When you think through these things, you must be very careful. Of course, everything would be easier on a whiteboard than to draw up and actually planned on documenting that in some charts. Especially on short sessions like SMX East, that was just I think a 15-minute slot to explain all that and some more tests. I can totally understand that.

It’s a complex concept especially when you’re taught something completely different forever.

Exactly.

What size of the data set was this? Was this a small scale test or a large scale test?

It was a small-scale test, because of the work of setting up all the domains that we redirected to and setting up all these pages. It was not just this one phrase; we had a handful of different phrases and a handful of different domains, and the interesting part here is that we tried to isolate all of these from each other as well as possible. It was not just one fantasy word but basically a set of words that we tracked separately.

The bottom line for our listeners is that they should all be switching 301s to 302s, pretty much across the board, right?

Right.

I think I stated this in the part one interview: I had a client who had acquired a site maybe six months or a year prior to your bombshell announcement about 302s. They had redirected that acquired site via 301 to their main site. They bought this company, then rather than keeping that site as an ongoing concern, they migrated all the content and 301 redirected everything to their main site. I had them switch the 301 to a 302. Even though they’d already been running the 301 from this acquired site for six months to a year, they switched it to a 302 and they got a rankings benefit.

Really?

Yeah.

Congratulations!

They were really excited and happy about that. Surprised of course but they took my advice and did it. Great example of how you take a hypothesis and experiment and then you can apply it in the real world scenario and make lots of cash.

Yeah. I would love to, I’m not sure if they’d agree but I would love that as a case study or as an example as well.

Yeah, I’ll put you in touch with them. I know they spoke with Internet Retailer about some of this stuff and gave them some screenshots of reports and things for a writeup, so I’m sure they’d be willing to do the same for you.

Oh, I would love to. Actually, Internet Retailer is a conference that I’ve never been to. Are you talking about the conference?

I’m talking about the magazine.

Oh, the magazine.

Yeah. For listeners, if you’re in the retail or ecommerce space, this is a great, great conference. IRCE is the name of it. But that is a completely separate thing from Internet Retailer magazine which is also great. It’s a magazine that you should be subscribing to if you are in that world of ecommerce and online retail. It was the magazine that I’m referring to.

Okay.

Separate companies now actually.

Nice.

That’s a great recap and extension of what we’re talking about in part one about 302 being more favorable for SEO than a 301. Let’s move on to some other linking topics because we want to just cover the gamut, everything that is important for our listeners to know about links, we need to cover in the remainder of this episode. Let’s start here with what if you got hit with a penalty? You’ve got two types of penalties. You’ve got the manual action, the manual penalties and you also have the algorithmic penalties. Penguin is an example of an algorithmic penalty. Let’s talk about what is the procedure for clean up if you got hit with either a manual penalty or algorithmic, for links.

When you get a manual penalty, you should see that in your Google Search Console. All of you guys out there, if you don’t have a Google Search Console, I know there are many of you, get that. Have your web guy set it up, connect it to your brand, connect it to your business, make sure that this little bit of information that you actually get free from Google doesn’t end in a blank box because that means when you monitor that or when you have an email, they will send you an email that says, “Hey, we took manual action because of this or because of that, or because of this.” Sometimes they take drastic manual action and sometimes it’s just a part, it could mean that a small percentage of your traffic is just impacted and you might not even notice or blame something else.

It could be just the page that gets hit with the manual penalty or the whole site.

Exactly. Or a set of links that you got from elsewhere. If you don’t take action, problems could just build up. But the critical information here is, the important part is you get at least a hint of what Google doesn’t like. That’s so much more than with the algorithmic penalties. Even if they say, “We don’t like this part of your website,” this is amazing feedback you need to use, because for algorithmic penalties, you have nothing. No signal except a drop in traffic, a drop in traffic for the whole website or, since Penguin, a drop in traffic for some keywords, for some pages, for some folders, or any combination of that. Google made it really, really hard to spot those partial penalties, to spot those drops in traffic that you might not like, that might hurt you. Compared to the past, where Google Penguin for instance always meant a total loss of traffic, you might not even know that you have an algorithmic penalty going on in real time.

Your rankings drop or your traffic drops or both, and you’re connecting the dots trying to figure out why this occurred.

Exactly. By the time you actually realize it, it could be way too late, because you probably don’t think about a 0.2% drop in your traffic when you’ve got fluctuations going on all the time. But when you see this ongoing trend, it might be way too late, and of course you lose some business every day if it goes down slowly. Google never confirmed it, don’t get me wrong, but I think this is part of the plan to make it harder for people like me who have gotten up every day for 14 years trying to understand Google, trying to design for Google. It makes it harder for SEOs and analysts like me to understand what’s going on. The same is true for businesses. The only way to avoid that unpleasant surprise is to implement a preventive link audit, preventive link risk management. We call this Link Risk Management as well, but basically, it’s about making sure you protect what you have in your backlink assets. If you’ve been in business for a couple of years, then you have earned links. Even if you have not engaged in any kind of manipulative tactics, any kind of SEO, even if you say, “No, we never bought links,” maybe someone else bought links before you. Some people forget about the past. It could be as long as 15 years ago. Whatever happened back then is still hurting you today. Google doesn’t forget. Especially when you’re coming into a new company, you have no idea about the history. Believe me, whatever you hear is usually different from the data out there, and this is not intentional. Maybe the guy before you didn’t know about stuff that was going on in 2003 either. This is just a normal situation. What you need is an assessment of the full backlink profile, making sure that as many link data sources as possible are combined and then audited to create this foundation that you can trust.

Let me just back up for a second and make sure that our listeners understand this. You want to combine the data sets that you’re getting from tools like Ahrefs, Majestic, Open Site Explorer and the aggregate of all those links is what you’re analyzing to see if you have toxic links and not just one data set, one tool’s data set.

Exactly. This is what you need to do because everyone only has a fraction of the actual web, everyone. It’s also the case with Google, they only have a fraction of the backlink profile of a website. Our goal is to have the maximum possible overlap between what we think Google has and what we can find. You want to combine all these data sources and then, this is very important to understand, you want to make sure that you recrawl it. Google recrawls the web on a regular basis, and some products recrawl the web on a regular basis as well, just slower. For very large backlink profiles, this could mean that you get one-, two-, three-, or five-year-old data from some other tools. In Link Research Tools, this is the one big difference: we take whatever we can get as a seed set and then recrawl everything. My goal is not to have the biggest index of all the websites out there no matter the quality. My goal is to have the biggest index for your business, for your domains, and to make sure that all the data is in there and it’s fresh. It’s one or two days fresh because come on, you don’t want to base your decisions on five-year-old data.
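To make the "combine the data sources" step concrete, here is a rough Python sketch of merging backlink lists from several tools and deduplicating them. It is only an illustration under stated assumptions: the source names, the example URLs, and the simple normalization rules (lowercasing the host, dropping a trailing slash) are hypothetical and are not how Link Research Tools or any other product actually processes its data. The recrawl step is left out entirely.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce trivial variations so the same linking page is counted once."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    path = parts.path.rstrip("/") or "/"
    query = f"?{parts.query}" if parts.query else ""
    return f"{parts.scheme.lower()}://{host}{path}{query}"


def combine_sources(sources: dict[str, list[str]]) -> dict[str, set[str]]:
    """Map each distinct linking URL to the set of sources that reported it."""
    combined: dict[str, set[str]] = {}
    for source_name, urls in sources.items():
        for url in urls:
            combined.setdefault(normalize(url), set()).add(source_name)
    return combined


if __name__ == "__main__":
    # Hypothetical exports from two hypothetical tools.
    exports = {
        "tool_a": ["http://Blog.example.org/post/", "http://spam.example.net/x"],
        "tool_b": ["http://blog.example.org/post"],
    }
    for url, seen_in in sorted(combine_sources(exports).items()):
        print(url, sorted(seen_in))
```

Keeping track of which sources reported each link also shows how much the tools overlap, which is the point made above about every index holding only a fraction of the web.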

That decision is to disavow and tell Google, “Don’t count links from this site or from this page.” That kind of decision. Also, the decision to outreach to the webmaster and say, “Hey, your link is toxic. It’s pointing to my site. Can you please remove it?”
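For listeners who have never seen one, a disavow file is a plain text file with one entry per line: either a full URL, or a whole domain written with the `domain:` prefix, with `#` marking comment lines. The sketch below builds such a file in Python. The function name `build_disavow` and the example domains are assumptions for illustration only, and which links belong in the file is exactly the judgment call the audit is meant to support.

```python
def build_disavow(domains: list[str], urls: list[str], note: str = "") -> str:
    """Build the text of a disavow file from reviewed domains and URLs."""
    lines: list[str] = []
    if note:
        # Lines starting with "#" are comments.
        lines.append(f"# {note}")
    # Disavow every link from these domains.
    lines.extend(f"domain:{d}" for d in sorted({d.lower() for d in domains}))
    # Disavow links from these individual pages only.
    lines.extend(sorted(set(urls)))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical entries, for illustration only.
    print(build_disavow(
        domains=["spam.example.net"],
        urls=["http://blog.example.org/bad-page.html"],
        note="links reviewed during audit",
    ))
```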

Yeah, exactly. The quality that we create with our risk assessment is only possible because we actually look at the current picture of the web and not some outdated information.

Let me just make sure that our listeners get this too. This idea of link risk management is critically important because it’s not just a ‘set it and forget it’ model. The web and your link profile are dynamic, and somebody could come in and hit you with some negative SEO, buy a bunch of spammy links pointing to your site, or do something like point a bunch of rel canonicals from a penalized site to your site and hurt you, and you’ll never pick up on that unless you’re monitoring this on an ongoing basis.

Exactly.

By the way listeners, there is a great article on this topic of link risk management I wrote for Search Engine Land. It’s really good.

Awesome. A really great article. I remember that one. It’s been a while. Maybe an update here would be interesting, especially regarding real-time Penguin and what has changed with it. Some people walk around and say, “Google is going to take care of everything for me now.” But I wanted to add something regarding the possible negative SEO threats. Usually, because of the name, negative SEO, people only see the toxic links. They see the links that are bad, they see the virus links, they see the malware links, where a handful could already hurt you and kill your traffic overnight. But here is something that, in my thinking, belongs to this category: the threat when someone else goes out and uses email software to ask people for link removal for your website, but not link removal of the bad links, link removal of your good links.

Oh, that’s evil.

It is evil and it works. Dave Naylor, a UK SEO that you may know, has done some testing with that and he was shocked to find out that I think the number was 70% or 80% of the emailed people actually complied with that.

Oh, wow.

The interesting thing here is the good links are on good websites that are maintained, where someone is watching, where someone is working, and that someone is interested in. A lot of spammy websites are usually running on autopilot or are a set-it-and-forget-it spam project. This is maybe an explanation why removing the good links actually seems to work better than removing bad links in this example. Maybe the number was just made up, I never saw the raw data, but even if 50% or 20% of your great links get removed by a simple email blast, you should be worried. Certainly, take a look at your backlink profile on a regular basis. Here is another thought, guys. We always talk about Google and the search engines; increasing your organic traffic in search engines is the objective of SEO. But when we talk about links, it’s not just that. Your best links are those links that help you in search engines, of course, but here’s something that’s even better: the links that send you qualified traffic, that send you qualified leads, that make you money, and they make you money without the intermediary called Google or Bing. If those links get killed, if you lose those links, or if someone actually actively goes after such valuable links, you’re in deep trouble, because you not only lose the rankings, since Google rates these traffic links very, very highly, but you also lose direct referral visits, direct conversions, and you might not even notice. If you don’t do it for Google, if you don’t do it for Bing, do it for your actual referral conversions.

Great, great point. What’s the difference in process if you have a situation where you either know you’re penalized with manual action because you’re notified inside of Google Search Console, I think there’s a critical point you made that every one of our listeners needs to go into Google Search Console and verify themselves as a site owner of their own site so that they can start getting this data because if they’re not doing that, if nobody’s verified the site, then there’s no data collection. When you finally sign up, you’ll see that there’s no historical data from the last 90 days, and that’s a shame. Missed opportunity.

Right.

Now look at this. You’ve either got a manual action or you have an algorithmic penalty and you’re pretty sure it’s because of links or you have that idea that that could be the case. You’re going to take different actions based on whether it’s manual or it’s algorithmic. Some actions will be the same and some actions will be different. Let’s walk through what those actions are.

When you think about the situation where you have a penalty, of course in the case of a manual action, you already have an indicator. You have something, maybe they talk about a certain directory being penalized, which means you should put all your efforts into looking at the backlinks to that directory. With our recent innovations, we have the possibility to calculate the risk, not just on a domain level or page level but on a directory level. If we think about ecommerce websites, of course, that means your whole category, let’s say toys or electronics, could drop, and therefore, if you have a little bit of information, the effort should be put right there. So you understand the risk for a category, you understand the risk for a single page. On the other hand, we still need to audit all the links. That doesn’t change between these two different cases. Because to be able to calculate a risk for one link, for one page, for one folder, we still need to look at the full picture, because it’s always about the distribution of problems or basically the weight of problems. One link doesn’t make or break it. If you have 10% of your links being really bad, that is an issue. If 70% of your backlinks are bad, well, that’s even worse. If you don’t have 100% of the links, you can never see 10% or 70%. It’s all a relative approach, a relative view on that, because otherwise, you could kill a website with one bad link already, one really, really shady link. If you have a manual penalty and you have an indication of what’s going on, follow that. The process is the same: you start a Link Detox with everything possible. Of course, we have 25 link data sources but you could still connect to other commercial products like Ahrefs, connect those and get that extra data pulled in.
This is not so important anymore. In the past, we could always improve a little bit, but for a couple of months now, we’re really outperforming everyone else in terms of the data that we combine, thanks to the 25 link data sources. But I’m just saying, if you have those accounts, if you have those products, just connect them. We take everything, we deduplicate, we clean it up, and we recrawl it. Running the Link Detox means you can get a risk estimate for every page, every folder, the whole website. At this point, you really connect to the algorithmic penalty. If you have an algorithmic penalty, you basically know nothing. What you need to do is the same thing: run the full audit, run the full Link Detox, and then get an estimate of the overall risk for the domain. If that is already in the deep red, and everything above 5,000 means you have a penalty sitting somewhere, you may lose your traffic the next morning because Google catches up on bad links, and you have an immediate call to action. This can be different. If you have a manual action, the overall risk for the whole website could be okay while just one folder is very high risk. In any case, you need this base data, the foundation of data that is fresh, combined, and recrawled. Like I said, we recrawl that live, and you have to wait for it. Our clients wait for that every day and sometimes they think, “Oh, it takes so long.” Here’s an important point: recrawling takes long. Not because our service is slow, it’s because the web is slow. If you have a lot of those links on very slow websites, we need to hit those websites quite a lot of times and that could delay the overall process. Once you have done that, after maybe some hours, maybe some days if you have tens of millions of links, you have a data set that you can trust. When you have that, you can actually slice and dice the data. There are different ways to look at the data. The most popular one is using Link Detox Rules.
Link Detox Rules are categories of problems. For example, one rule says a link comes from a website that is marked as malware, or a link comes from a website that has a virus, or it comes from a website that is marked as spreading copyright violations, hacks, warez or stuff like that, or weapons. Anything that you would deem dangerous is marked separately. Recrawling the web is only the first part of the game. Then we have all the links, all the pages where these links come from, we see whether a link is follow or nofollow, we see the anchor text, we see whether the link is dead. That is a lot already. The second big part of recrawling means enriching all these links, the source data, all the information about where the links are, with extra metrics: calculating power, trust, link velocity trends, and 97 metrics in total that are raw metrics. Some of them might sound even more confusing, but if you have heard about link metrics like PageRank, which was around until 2013, when Google PageRank became unavailable, it’s just like that. It’s just extra data points for every link. Based on the link’s information, the content, the link text, the type of link, whether it’s a redirect or not, whether it’s an image link or not, all these things go into a system, and this system basically interprets with machine learning whether that link is low, medium, or high risk. Imagine this process multiplied by millions, then you get a risk rating for every single link. Then, you can dive in and start your review. The process here means we have made every possible effort to give you a recommendation, to give you an opinion about how risky or not risky links are. Then, diving into that, you can look at them in aggregate, you can look at breakdowns, keyword clouds by risk, or you could look through all of these things, every single link, one by one, using a feature called Link Detox Screener. Link Detox Screener is a browser in the browser where you can simply go through all of those links.
You could say, “Show me all those links that have a certain risk level above 1,000 and have the keyword,” whatever keyword you have a problem with, and just look through those links one by one. It’s very fast and very convenient, and there you see things like the HTML source code highlighted, everything on the page. You don’t have to mess around and look around the HTML source, everything is in place. You see the HTML source because sometimes, believe it or not, people mark their links as paid links. If you have a CSS class called paid link, you know exactly that this is a problem. Google has these titles as well. But sometimes you’ll also see that it’s a link that came from aggregation of data. It’s super helpful because you have all the information at your fingertips. You see the website, how it looks, the design. You see the anchor text, you see all the metrics in Detox Screener. You can go one by one. Doing that, you can rate the links. This works one by one or in bulk for hundreds of thousands. Because it’s a system, it’s software, it’s machine learning, you can tell the system both when you agree with it and when you disagree with it. In fact, when you see a link that has a very high risk and you say that this is actually a great link, you can rate it a thumbs up. It’s basically telling the system, “Hey dude, this is great stuff, why is it bad?” Or the other way around, when you see a link that is not detected as being toxic but you’re 100% sure that it is, you can train the system. You can train the system for you, for your domain, for your company, for your business. Then reprocessing means the knowledge that you just gave the system is applied to all the other links.
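To make the "risk above 1,000 plus a keyword" screening step concrete, here is a minimal sketch in Python. It is not how Link Detox Screener is implemented; the `Link` record, its field names, and the sample risk scores are all hypothetical and exist only to show the filtering idea.

```python
from dataclasses import dataclass


@dataclass
class Link:
    # Hypothetical record for one backlink; the field names are assumptions.
    source_url: str
    anchor_text: str
    risk: int  # per-link risk score, higher means riskier


def screen(links, min_risk, keyword):
    """Return links whose risk exceeds min_risk and whose anchor text contains keyword."""
    keyword = keyword.lower()
    return [
        link for link in links
        if link.risk > min_risk and keyword in link.anchor_text.lower()
    ]


# Made-up sample data for illustration only.
links = [
    Link("http://spam.example/a", "cheap loans online", 1800),
    Link("http://news.example/b", "Acme Corp", 120),
    Link("http://dir.example/c", "Cheap Loans", 950),
]

flagged = screen(links, min_risk=1000, keyword="loans")
for link in flagged:
    print(link.source_url, link.risk)
```

Only the first sample link passes both conditions; the third matches the keyword but stays below the risk threshold, so it would not show up in this review queue.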

You can also apply your own knowledge to the anchor text and say that these are money keyword type anchor texts, these are brand anchor texts and they’re not as potentially egregious to Google as the money keyword based anchor texts, and then you have to reprocess that and determine how high your risk is. This toolset is called Link Detox and it is a must for people to run on a fairly regular basis to make sure that they don’t have toxic links because otherwise, they are flying blind. They’re only going to know that something’s wrong if their traffic drops significantly.

I think the keyword classification you mentioned, the training of the system regarding your brand name, brand name variations but also other variations or synonyms for your brand is very, very important. I don’t know any other software that actually has the keyword intelligence in there but because historically, manipulation was always done on money keywords. Those have a separate risk, they are different, usually higher risks. On the other hand, it’s important to understand that just because you have commercial keywords in your anchor text, doesn’t make that link risky or bad. It’s just because a lot of risky and toxic links have money keywords, a lot of people thought, “Oh no. Let’s not do commercial anchor text anymore.” You may read or hear it on these conferences, “No, no, commercial anchor text.” That’s wrong. You can’t put them all into one basket. Just because you had a lot of bad links having commercial anchor text doesn’t make commercial anchor text bad by itself.

I think what people failed to realize is that links have natural diversity in them, diversity in the top-level domains that are linking to you, diversity in the anchor text that people are using, diversity in the power and trust scores of those linking pages and of the linking sites, diversity everywhere, themes of the sites linking to you, etc. If you’ve engineered stuff and manipulated, then there’s lack of diversity. It looks very engineered to Google. It sticks out like a sore thumb to their algorithms. We want diversity and we want to scan for the lack of diversity that’s why using a toolset like Link Research Tools is so valuable to see that diversity either exists or doesn’t in your link profile. A quick question here, do you think that the risk of manual actions is higher now because of real time Penguin?

No.

Okay.

Actually, manual actions were a very drastic method. They sent out hundreds of thousands of manual actions overnight in April or March 2012. It was something that caused a shock in the market and caused a lot of people to wake up to the fact that Google finally took action against obvious manipulation. However, Google changed their mind, they changed their strategy and they would rather not be the harsh penalizers. They got a lot of bad press for killing businesses overnight. They got a lot of bad press for enforcing the quality standards. I totally get it. Losing everything overnight causes livelihoods to vanish overnight. Companies went bankrupt, it’s all bad press for Google. Combine that with the fact that people like me reverse engineered what happened, how it happened, why it happened, because we had a huge data set. 1,000 dropped websites here, 1,000 dropped websites there overnight created one full data set to analyze. Google is not interested in being reverse engineered. They don’t like that. I wouldn’t like it either if I were them. But this is one of the advantages that I have that money cannot buy. Nobody can go back in time to 2012 to reverse engineer what they did, why they did it. Nobody has the data that we have, because we actually gave Link Detox away for free for eight or nine months, so we could collect data from 10,000 penalties back then. Nobody else did that. This all went into the machine learning, into the training system, and it still does of course when we get new data.

That’s awesome. Let’s go through the rest of the cleanup process. If you have a manual action or an algorithmic penalty, you need to contact webmasters and beg them to remove the link. You’re going to treat them differently depending on the type of situation, if their site has been flagged as infected with malware or viruses versus if they are just a spammy porn site or whatever. Then if they comply with link removal, that’s great, but most of the time they won’t comply and you have to disavow. Let’s walk quickly through that process of the outreach and the disavow, and you can mention how you’re integrated with Pitchbox and how that all works for you.

Okay. Number one, doing the outreach for link removal is a tedious process, and whether it is successful or not depends on how well targeted you are. Link Research Tools integrates with Pitchbox, which is the only solution that I would deem appropriate for that undertaking and that’s why we integrated with them. You don’t want to compare Pitchbox to bulk emailers. I think this is the magic here, they have a lot of flexibility in templating and automation and following up or not following up. You don’t want to keep sending stuff if a reply comes back, for example. What Link Research Tools does is provide all the data to help you segment down. For example, if you have 1,000 links from 1,000 different malware-infected domains, don’t even think about sending them the standard email that everyone sends out, “Hey, Google penalized me, I need to remove the link.” They don’t care about you, they don’t care about themselves. When you know that they are malware-infected, you can actually send them an email informing them, providing value by telling them that you found out their website has a problem. That could lead not only to a high response rate but to immediate conversations. Either they just take down the link, and you can even threaten to sue them if they keep the link up, which is what you could do in the third email if nobody replies, or, as is usually the case, they are very interested in fixing their website anyway. Doing that sometimes also leads to the complete shutdown of a website because the website has been around but it’s not part of the core business anymore. It could be an abandoned website of a brand that they stopped using. Hopefully, they don’t redirect those malware links to their own website. That is actually a potential risk. Sadly, this is very different from emailing the random porn website that just has a link to you because someone bought a link there for you.
If you have all the information in Link Research Tools, you can push one button and send all of this to Pitchbox. You could, depending on the volume, automate different campaigns, you can even, when you think about multinationals, of course segment by language. If you have all kinds of bad links in Turkish language, I don’t speak Turkish and I don’t know what to write to a Turkish webmaster. You want to have different people in different countries working for that or at least get someone that is more or less a language pro to help you translate that because Pitchbox supports automated outreach. You write those templates once. You write the first email, you write the follow up email, you write the third email. The first follow up, email number two could be still nice, email number three could be quite harsh.

Not very friendly.

Not very friendly. When you bring in things like copyright or trademark violations, I’ve got links removed that were websites that were hosted in Belarus. I’m not even sure if they have human rights, they certainly don’t have trademark rights. Reducing the risk of getting into trouble makes people take action and remove those links because who’s an expert in trademark law anyways? That’s just one hack that I would recommend. If you have a company, if you have a trademark, if you have those USPTO trademark numbers, put them all in there. Be as specific as possible if you really want to threaten them. But that’s something that you can do if they don’t comply in the third email.

Got it. You’ve got the outreach happening, you’re using Pitchbox to do the outreach and Pitchbox also does outreach for acquiring links. If you want to do some influencer outreach campaigns, or promote some really great link bait or content marketing campaigns that you created, there are lots of great positive uses for Pitchbox. It’s not just for the cleanup process for toxic links.

Right.

Let’s say that you’ve now done your outreach, you’ve used Pitchbox, you’ve got some percentage, maybe it’s single digit percentage of the people to comply and remove those links, the vast majority that do not remove the links, now what? Disavow, right?

Right, exactly. When you’re in the process of deciding whether you want to get rid of a link or not, the link removal is the first step. You always, always, want to send those link removal emails. I’ll get to that in a moment. But of course, you can at the same time create the disavow file, which is a file that helps you tell Google which links pointing to you you don’t like. You can distance yourself, you can disavow yourself from those links and tell Google, “Those links have nothing to do with me. Do not count them anymore.” Uploading the file alone doesn’t do much because Google needs to recrawl those links for it to work. We’ve got a solution called Link Detox Boost that helps speed up the process of crawling, that basically forces Google to crawl those links so the disavow file takes effect faster. Otherwise, it might take up to nine months. That’s the longest time we found, which is crazy, but if you think about it, if you have really, really nasty links on really, really bad, old, outdated websites, Google doesn’t fancy crawling crappy websites. This is why we need to give them a push, especially for bad websites. The disavow file will then deactivate the power from those links. The problem is that if the links are on this one page, they might spread to other pages. There are scrapers crawling around the web, copying content and putting that content somewhere else. It happens every day that bad links inside that content get copied as well. If you don’t remove that link, you basically might have a problem to take care of in the next month.
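For readers who have never seen one, a disavow file is a plain text file with one entry per line: a full URL to disavow a single page, `domain:example.com` to disavow a whole domain, and lines starting with `#` as comments. The Python sketch below writes such a file; the function name, the sample URLs, and the output filename are illustrative assumptions, not part of any tool mentioned in the episode.

```python
def build_disavow(urls, domains, note=""):
    """Build the text of a disavow file.

    urls    -- individual page URLs to disavow
    domains -- bare domain names to disavow at the domain level
    note    -- optional comment written as the first line
    """
    lines = []
    if note:
        lines.append(f"# {note}")
    # Domain-level entries use the "domain:" prefix.
    lines += [f"domain:{d}" for d in sorted(set(domains))]
    # Page-level entries are just the full URL.
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


# Made-up example entries.
text = build_disavow(
    urls=["http://blog.example/post-with-bad-link.html"],
    domains=["spam.example", "scraper.example"],
    note="Owners contacted, no reply",
)

# Hypothetical filename; the file is then uploaded through Google's disavow tool.
with open("disavow.txt", "w", encoding="utf-8") as fh:
    fh.write(text)

print(text)
```

Keeping the removal-outreach status in comment lines is a simple way to document why each entry is there, which helps anyone who later has to audit the file.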

It spreads.

Yeah, it spreads. Sometimes it spreads forwards. It’s crazy. Depending on the content, depending on the topic, if you’re in an industry, let’s say the money industry, like credit cards, loans, any kind of gambling, all of these things are usually heavily scraped because that’s what the black hat industry does. That gets you new links even without you doing anything about it and that’s something that no one talks about. Google doesn’t talk about it. We have to figure it out ourselves. I heard it somewhere. The truth is they don’t because they don’t have the resources. The search engine is a product of Google, it’s a product of Alphabet, and with all the power, with all the talent combined, if you compare the organic search engine, the product that is being used to sell ads on, to the whole system of Google around it, it’s really small. The focus is not to make the lives of webmasters easier, the focus is to sell ads. We always, always have to remember that we, as webmasters, get special treatment. We have Webmaster Relations, John Mueller and Gary talking to us at these conferences, telling us a little bit of information, and some tweets, sometimes. But it’s not Google’s objective to make a webmaster’s life easier. They give us very good webmaster guidelines to explain how to make websites crawlable, but if you have trouble with your links, if you have a penalty, you’re basically on your own. Google doesn’t even respond to questions most of the time. They do a good job on some special cases but this is not for everyone.

Let’s say that you’ve created your disavow list of all these URLs that you want Google to not count anymore. After Google recrawls that URL, then it will kick that in and take away any kind of negative impact of links on that URL that points to your page. You could disavow the entire domain or you could disavow just a page or URL. There’s some risks in disavowing the entire domain. I don’t want it to spread like a fungus to other pages on the site so I’m going to just site-wide disavow of the entire domain. If you’re not careful, you’ll end up disavowing legitimate links, pages like TechCrunch or whatever. Let’s walk through how that might happen.

I think in 2013, Matt Cutts, the former head of Web Spam, said, I think on stage or in a tweet, I don’t remember, “If you’re in doubt, then disavow the whole domain.” Maybe that even made it into the Webmaster Guidelines, I need to check. If you’re in doubt about a link to you, disavow the whole domain. It’s basically like that. I see domains like TechCrunch, YouTube, Facebook, Instagram, you name it, really large web properties being disavowed on a whole domain level just because there were one or two comment spam links. Honestly, that’s someone creative.

That’s kind of like saying you have a splinter on your finger, if you’re unable to get that splinter out, you may want to just cut your whole arm off.

Exactly. That’s what it is and that’s what people do. I’m sorry to confirm that. I see it everyday, it’s shocking. It’s self-sabotage. This is just one part of the human error. I don’t want to call everyone stupid here because it happened to me, we had disavowed the whole domain Adobe.com. We have disavowed the whole domain of SEMrush.

Like the blog comment spam that somebody posted to one of those legitimate sites.

It wasn’t even spam. I mean, it was me. Sometimes I would do it and I have my keyword Link Research Tools in there. The guy who did it didn’t know very well. I used cheap labor for that. This is where you want to be careful who you give power to disavow your links. This is why we have the certification program now. This is why we have a special mode called disavow file audit where you can actually take away if you come in as a consultant, if you come in as an agency to a business that already has a disavow file, what are you going to do? Some of those links might be disavowed without a reason, or might be disavowed wrongly because they are now powerful or they were always powerful like Adobe.com. You need a quick way to clean that up, to make sure that you have a good foundation. That’s a different case. If you start from scratch, you don’t have the problem but most agencies have this problem now. In 2017, we have five years of Google Penguin, five years of disavowing, there are some bad disavow files out there.
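One simple check when inheriting an existing disavow file is to look for domain-level entries that hit large, legitimate sites. The Python sketch below does only that; it is not the disavow file audit mode described above, and the `protected` list is a hypothetical allowlist you would maintain yourself.

```python
def risky_domain_entries(disavow_text, protected):
    """Return protected domains that are disavowed at the domain level."""
    protected = {p.lower() for p in protected}
    hits = []
    for raw in disavow_text.splitlines():
        line = raw.strip()
        # Skip blank lines and comments.
        if not line or line.startswith("#"):
            continue
        if line.lower().startswith("domain:"):
            domain = line[len("domain:"):].strip().lower()
            # Treat "www.example.com" and "example.com" as the same site.
            if domain.startswith("www."):
                domain = domain[4:]
            if domain in protected:
                hits.append(domain)
    return hits


# Made-up disavow file contents for illustration.
sample = """# inherited from previous agency
domain:spam.example
domain:adobe.com
http://techcrunch.com/some-article/#comment-123
domain:www.youtube.com
"""

# Hypothetical allowlist of domains that should rarely be disavowed wholesale.
protected = ["adobe.com", "youtube.com", "techcrunch.com"]

print(risky_domain_entries(sample, protected))
```

In this sample, the page-level TechCrunch entry is deliberately not flagged: disavowing one comment URL is a very different decision from cutting off the whole domain.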

Yeah for sure. Let’s go into lightning round because we still have to get a bunch of stuff in but we don’t have a lot of time left.

Okay, shoot.

Expired domains. How does that work? Do you get the PageRank reset if you buy an expired domain? What if you buy a website instead of just the domain, maybe a going concern, what happens with the PageRank when you acquire one of these domains or websites?

If you go with .com or .org, usually it gets reset really, really quickly. If you want to preserve the PageRank, you basically need to fake the address, you need to clone the content, you have to create the impression for Google that it’s still the same website, which basically involves identity theft, so don’t do this at home. That’s what it is. Don’t try to hide behind some privacy features or domain privacy, that’s just fake. But the moment you move it to your own web server, you have already created a signal that it’s hosted somewhere different. Google is really, really good with .com and .org, and many PBNs, many expired domain networks, are made with country-level domains, ccTLDs. It might not be German domains, but it might be Hungarian domains, Bulgarian domains, Peruvian domains. Very large domains sometimes expire without the owners taking care of it. Those are more expensive domains but they’re also in systems that Google doesn’t have such good access to. Getting domains there means you have a slightly better chance of keeping the PageRank, but the truth is, Google is going after all of them. The moment you raise some eyebrows or you build a network to sell it to others, you’re an immediate target for Google. All these so-called private blog networks that you read about in forums or on Facebook are not private at all because the moment someone offers them to you, I can guarantee you, someone from Google will come around. Maybe not today but they will come.

Let’s say you acquired an ongoing business and you don’t want the pagerank reset to zero, what are some things that you do to decrease the likelihood that’s going to happen assuming that the content is already there, you don’t have to use a tool like work to go into the archive.org, wayback machine and pull an old version of the site and reinstate it but instead, you’ve got the content, you’ve got the domain, maybe even some staff came with it. It’s a legitimate acquisition but still, Google can make a mistake and decide that this is one of those tricky spammers trying to play games so we’re going to reset it to zero. What do you do to help your chances that that doesn’t happen?

Leave the office address as is. Actually, I have domains that still have my old office address and I’ll never change that because who’s sending you snail mail anyway by looking at the registration? I know there are some rules in place that say you should always keep it up to date, but you don’t want to update that if it’s a legit address and it has a legit phone number. Maybe change it slowly, if you really need to change it. Big companies always have different rules than smaller companies. But then change it slowly. Change as little as possible, especially when you move the IP address, because changing the host, the web host, is a big change anyway. For the time being, make sure that you only change one thing and then change another thing, and then change another thing, week by week.

Very slowly.

Very slowly.

Because what could happen is you change your nameservers, your registrant name, your admin contact, your mailing address, technical contact, all that information all at once can make a big change to the content of the site and Google says, “Oh yeah, let’s start that over with zero.”

That’s a new owner. Google tries to answer the question, “Does it have a new owner?” They said, if you are the new owner, you lose everything. They say they try to take away the incentive of buying businesses for SEO purpose because that has been going on and people do that.

Let’s move on to the next question. We’re in lightning round, remember?

Oh, okay.

.gov, .edu links, a lot of people still think that there’s special credit for the fact that it’s a .gov or .edu rather than the fact that it’s just a really pristine link neighborhood that the .edu or .gov is in. Let’s kind of bust some myths here about .gov and .edu links.

It really depends on where it is on the website, it depends on the actual edu. There were some fake edus out there. I think america.edu, I don’t know if that still exists or someone just bought an edu company, the commercial university that was bankrupt and use that domain and exploited that.

Funny.

That’s a reason why it went bankrupt. It didn’t have a good backlink profile. They were sold for $50 a link or something like that and of course they didn’t help and they penalized all kinds of people because everyone there was a link buyer. You could not only see it, you knew it. When we talk about links from governmental agencies, from universities, the best possible link that you can get is right from the inner core of the organization. In the past, ten years ago, there were also pages on subdomains, on subfolders, that helped big time because the trust was somehow passed over from the main domain to the subdomain. While Google always saw subdomains as a separate entity, it seems like the subdomains inherited trust from the main domain as well. Today, we can measure all of that a lot better with power and trust. Back then, we basically had nothing. Today, the extension is not a criterion at all. From my point of view, with the technology we have, with the data that we have, measuring the domain and page works by looking at the power, the strength of the link, how many links go there and, even more important, how much trust that domain and the page have. There are certainly pages that have a trust of zero because they’re not even linked pages. That has been around as well: links from student home pages that didn’t have a single link to them and were not even indexed.

It’s a great differentiation or distinction here that power and trust are very different. Power is like the importance of the page or the site and trust is how trustworthy that page or site is and if it’s very far away from trusted link sources, trusted sites like Harvard University, National Science Foundation, CERN in Switzerland, that sort of stuff, then it doesn’t look trusted and those links could not be very good. Do you have a browser toolbar that you want to plug that gives you power and trust like LRT Power*Trust scores?

Yeah. I actually got three of them.

Okay, let’s hear about them.

Three extensions for Chrome and Firefox. Number one is a very simple display where you can see the power and the trust for the domain and the page. If you had the PageRank extension back in the day, this is the exact replacement for that, except that you get the best up-to-date data from Link Research Tools for free. We give away the data for free because this is something that is missing in the market anyway. We spoke about the AP and AN. This is the one answer. The second thing is we’ve got a Link Research SEO Toolbar, an extension for Chrome and Firefox that overlays data for power, for trust, for link velocity, number of ranking keywords, impact and more over your search results. When you search in Google, you will get an immediate understanding of how powerful or trustworthy those results are. Not only trustworthy for you as a searcher, but also for you as a link builder, for you to acquire links from that page. For example, after competitive research, quite often people find out they have more power than trust. They are missing trusted links. Sorting and filtering through those works in a very okay way, in a hobbyist way, with the free extension here as well. Of course we’ve got the same pattern, the same tools, the same kind of search results for dozens of keywords in the paid Link Research Tools suite, but this free browser extension helps you a lot already. If you’re just starting out, that is the best way to do it. Number three, Link Redirect Trace. It’s actually the most popular tool, and it’s an on-page tool that helps you understand how redirects go hop by hop or step by step.
When you click on a page that redirects you somewhere, as a user, usually you just see the target page but quite often, you have a chain of redirects, 1, 2, 3, maybe 10 hops in between and having data for those redirects in between seeing which cookies are dropped, which of those pages are actually blocked, which of them are powerful or trustworthy etc., is possible on this diagnosis tool. A lot of SEOs all over the globe love it. We’ve got almost 14,000 users of this Link Redirect Trace extension and we get raving ratings everyday about this.
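The hop-by-hop idea can be sketched in a few lines of Python. This is not the Link Redirect Trace extension, just an illustration of following a redirect chain one request at a time and recording each status code. The function names are made up for this example, and the demo uses a small fake lookup table instead of the network so the behavior is easy to follow.

```python
import http.client
from urllib.parse import urljoin, urlsplit

REDIRECT_CODES = {301, 302, 303, 307, 308}


def http_fetch(url, timeout=10):
    """Request one URL without following redirects; return (status, Location header or None)."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=timeout)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        conn.request("HEAD", path)
        resp = conn.getresponse()
        return resp.status, resp.getheader("Location")
    finally:
        conn.close()


def trace_redirects(url, fetch=http_fetch, max_hops=10):
    """Follow a redirect chain hop by hop and return a list of (url, status) tuples."""
    hops = []
    seen = set()
    while len(hops) < max_hops and url not in seen:
        seen.add(url)  # guards against redirect loops
        status, location = fetch(url)
        hops.append((url, status))
        if status not in REDIRECT_CODES or not location:
            break
        # Location may be relative, so resolve it against the current URL.
        url = urljoin(url, location)
    return hops


# Fake responses for a made-up chain: 301, then 302, then the final page.
fake = {
    "http://a.example/": (301, "http://b.example/"),
    "http://b.example/": (302, "/final"),
    "http://b.example/final": (200, None),
}

hops = trace_redirects("http://a.example/", fetch=lambda u: fake[u])
for hop_url, status in hops:
    print(status, hop_url)
```

Calling `trace_redirects(url)` without the `fetch` argument uses the real HTTP helper instead. Seeing the status of each hop is what lets you tell a 301 from a 302 in the middle of a chain, which ties back to the redirect discussion earlier in the conversation.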

You don’t have to be a customer of Link Research Tools to use that extension?

Exactly.

That’s great.

Exactly.

One more quick question here, maybe two more. You mentioned link velocity trends being something to track and measure. Can you explain that really briefly, why is link velocity an important thing to be measuring?

The change of your backlink profile has always been a criterion for Google to rank you. A domain that has 10,000 links from 10 years ago but hasn’t earned a single link for the last five years is a good example. That’s a very, very negative trend. It’s -100%. It doesn’t grow any new links at all. Having some links from ten years ago but not growing new links makes it a weak domain. Just because the links are there, it’s not the same thing as if you earned 100 new links every week or 100 new links every month. The speed of your link growth or decline matters. This is what we refer to as link velocity and of course a trend is then just a percentage. Everything above zero is great. A lot of popular start-up businesses have link velocities of 50, 100 or 200 for the overall domain. What that means is that such a popular domain, one that gets so many more links, is a much more interesting target for you to get a link on because you grow with it. Imagine you get a link on a website that has 100,000 links of its own. Because the growth of links for that website is so great, you might very soon have a link from a website that has a million backlinks. You want to play with these popular guys. You want to be with the famous people, the famous websites and not with the dead meat or those old outdated websites. That’s actually another problem for expired domains.
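As a rough illustration of a trend being "just a percentage", the Python sketch below compares the number of new links earned in a recent period with the number earned in an earlier period of the same length. This formula is an assumption made for the example; the exact calculation Link Research Tools uses is not described in the conversation.

```python
def link_velocity_trend(previous_new_links, recent_new_links):
    """Percentage change in new links between two equal-length periods.

    Returns None when the earlier period had no new links, because the
    percentage is undefined in that case.
    """
    if previous_new_links == 0:
        return None
    change = recent_new_links - previous_new_links
    return change / previous_new_links * 100.0


# A domain that used to earn 100 links per period and now earns none.
print(link_velocity_trend(100, 0))    # -100.0

# A domain that went from 100 to 150 new links per period.
print(link_velocity_trend(100, 150))  # 50.0

# A fast-growing start-up going from 40 to 120 new links per period.
print(link_velocity_trend(40, 120))   # 200.0
```

Under this assumed definition, the dead domain from the example above lands at -100%, and the fast-growing sites land at positive values such as 50 or 200.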

So hang out with the cool kids.

Exactly.

Listeners, there’s a great article going through some of the most important of the tools inside of the Link Research Tools set that I wrote for Search Engine Land and I’ll drop a link in the shownotes for that as well. It provides a whole lot of use cases for each tool like Link Alerts, Backlink Profiler, Competitive Landscape Analyzer, Link Detox, etc. It’s all in there with short descriptions of when and why you would use each one. How you would find hubs for example, etc. Actually, why don’t you explain really, really quick and then we’ll close out the episode. Why should we look for hubs?

Okay, looking for hubs. When I’m in a room with Stephan Spencer, when we hang out together, when we’re here on a podcast, your authority reflects on me. I’m just hanging out with the cool kids. The same is true for links. When you have links to other people that are successful, a link to, a link from, then you are in good neighborhood. Hanging out with the cool kids is the best thing here. The place is called a hub where all of these other good people are linked from. It works in the other opposite direction as well. If you’re in a place where a lot of bad websites are linked to, you’re in trouble too, so this is also what we analyze.

Right. Let’s say you’re looking at your competitors and there are three that are venture funded and really growing fast and you’re a small start-up and you see that all three of those huge competitors are getting links from x, y, and z websites but you’re not yet, you’ll use a tool available from Link Research Tools that identifies those hubs and then you outreach to the webmasters of those sites asking for a link in a way that it actually works instead of, “Hey, I noticed that you linked to my competitors and you didn’t link to me.” That sort of nonsense is going to get deleted. First step is to identify the hub.

Exactly. This is another use case for Pitchbox. Pitchbox integrates with all of these tools that we have. What you mentioned was the Common Backlinks Tool, that’s what we call it in our product, or CBLT. Nothing to do with hamburgers. Those prospects, those websites that you want to contact, you can send to Pitchbox as well, another example of a different outreach campaign. Of course, you will talk differently. Like you just said, you will talk about how they forgot to mention you. “You talked about these guys, these guys and did you know we have this and this. You may want to consider including us there.” They will get back to you if you stroke their ego a little bit. This and many other methods, we actually go through in our trainings. Maybe I can plug my new LRT Associates Training. It’s a full online training that we sell for $297 but it’s free until the end of August. This might be very interesting for your viewers or listeners.

That’s great. How many hours of training is that?

It’s roughly 40 videos, 4 or 5 hours of videos.

That’s awesome.

I just shot them in May, 2017. It’s really fresh and there’s more to come. I constantly update that as well. For your show, for you Stephan maybe we could do something special. There’s basically a follow up training on that. That’s two and a half days that we do in Vienna in October but also in Las Vegas in November, beginning of November that we offer for $2,500. But if one of your guys signs up for the free training and does the exam there and really excels, then we can actually give away one of those paid trainings, worth $2,500. It’s a good reason to travel anyways. If you want to come to my training there, that’s a great way to win it.

That’s amazing. Wow, that’s very generous of you. Everybody listening who wants to get better at link building, go to the LRT Associates Training, which is as you hear, very comprehensive and fresh with 40 videos shot in May. Then you take the exam and you have a shot at getting a $2,500 value of the multi-day training in Vegas in November for free potentially. You guys should all take up Christoph on that amazing offer. That’s great. Thank you.

Travel costs not included of course. I’m not paying for your flight.

Awesome. This has been amazing. It’s like drinking from a fire hose yet again for part two. Thank you so much, Christoph. Thank you, listeners. This is quite a long episode and you guys stuck it out to the end. Kudos to you for doing that. Now it’s time to take action and apply some of that stuff. In addition to the show notes, there’s also a checklist of actions to take based on what we discussed in the episode. Do download that checklist and start working through it. That’s where the rubber meets the road. It’s when you take action. All interesting information but if you want ROI from your time spent listening to this episode, go through that checklist and start doing the stuff in there. Thank you and this is Stephan Spencer, signing off. We’ll catch you on the next episode of Marketing Speak.

Thank you. Bye bye.

Important Links

Connect with Christoph Cemper

Apps/Tools

Articles

Company/Organization

People

Previous Marketing Speak Episode

Your Checklist of Actions to Take

- Use 302 instead of 301 redirects to have long-term ranking benefits on my keyword-filled URLs.

- Sign up for Google Search Console and verify my site so that I get notified whenever Google sends me a manual penalty.

- Use aggregated data from several different tools, like Ahrefs, Majestic, and Open Site Explorer, to analyze my backlinks for a preventive link audit.

- Implement a regular link risk audit to make sure that I have quality links with nothing to disavow.

- Use the LinkResearchTools extensions for Chrome or Firefox to analyze the power and trust of domains I am researching.

- Audit all of my links and calculate the risk for my sites, pages, and even categories.

- For manual and algorithmic penalties, first get a full link audit using a combination of tools. Then use Link Detox to clean it up and recrawl before addressing the bad link issues.

- Learn about keyword intelligence and the risk factors with money keywords as opposed to brand keywords. Link Detox can also help with this process.

- When purchasing new sites, slowly change one thing at a time so that I don’t get flagged by Google as a new owner and lose rankings.

- Ask webmasters to remove bad links. Pitchbox is a great tool for this. If this doesn’t work, disavow the links.
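As a rough illustration of two of the steps above (aggregating backlink data from several tools, then disavowing links that webmasters won’t remove), here is a minimal Python sketch. It is not how Link Detox or any of the tools mentioned works internally. The function names `merge_backlinks` and `build_disavow` and the example URLs are hypothetical. The only outside fact it assumes is the plain-text disavow file format that Google accepts: one URL or `domain:` entry per line, with `#` for comments.

```python
from urllib.parse import urlparse


def merge_backlinks(*exports):
    """Combine backlink URL lists exported from several tools, dropping duplicates.

    Hypothetical helper: each export is just an iterable of URL strings.
    URLs are compared case-insensitively and without a trailing slash.
    """
    seen = set()
    merged = []
    for export in exports:
        for url in export:
            key = url.strip().lower().rstrip("/")
            if key and key not in seen:
                seen.add(key)
                merged.append(url.strip())
    return merged


def build_disavow(bad_urls, whole_domains=()):
    """Return the text of a disavow file.

    whole_domains are written as 'domain:' entries; individual bad URLs
    are listed only if their host is not already covered by such an entry.
    """
    lines = ["# Links we asked to have removed without success"]
    domains = sorted({d.lower() for d in whole_domains})
    lines += [f"domain:{d}" for d in domains]
    for url in sorted(set(bad_urls)):
        host = (urlparse(url).hostname or "").lower()
        if host not in domains:
            lines.append(url)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    tool_a = ["http://spam.example/page", "http://blog.example/"]
    tool_b = ["http://SPAM.example/page/", "http://bad.example/x"]
    all_links = merge_backlinks(tool_a, tool_b)
    print(all_links)
    print(build_disavow(
        ["http://spam.example/page", "http://bad.example/x"],
        whole_domains=["bad.example"],
    ))
```

Deciding which links are actually risky is the hard part, and it is left to the audit itself; the sketch only covers the bookkeeping around it.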

About the Host

STEPHAN SPENCER

Since coming into his own power and having a life-changing spiritual awakening, Stephan is on a mission. He is devoted to curiosity, reason, wonder, and most importantly, a connection with God and the unseen world. He has one agenda: revealing light in everything he does. A self-proclaimed geek who went on to pioneer the world of SEO and make a name for himself in the top echelons of marketing circles, Stephan has seen his journey take him from career ambition to soul searching and spiritual awakening.

Stephan has created and sold businesses, gone on spiritual quests, and explored the world with Tony Robbins as a part of Tony’s “Platinum Partnership.” He went through a radical personal transformation – from an introverted outlier to a leader in business and personal development.

About the Guest

CHRISTOPH CEMPER

Christoph C. Cemper started working in online marketing in 2003, providing SEO consulting and link building services. Out of the need for reliable and accurate SEO software, he developed the first internal tools in 2006. This was the basis for the full product LinkResearchTools (LRT), launched to the public in 2009 as a SaaS product with four tools. Thanks to ongoing development, LinkResearchTools now provides 21 tools with ever-growing power and functionality adapted to market requirements and Google changes.

When the famous Google Penguin update changed the rules of SEO in 2012, Christoph launched Link Detox, a software built for finding links that pose a risk in a website’s backlink profile. Christoph has been talking and writing about link risk management since early 2011 and introduced the technology and formal process for ongoing link audits in 2012 as well as pro-active removal and “disavow” of bad links.

In 2015, Christoph launched Impactana, a unique “Content Marketing Intelligence” technology that helps marketing professionals find content ideas that make an impact.
