It took about 100 years for the telephone and 50 years for the television to get one billion users. It took Facebook and smartphones for eight years. This accelerated rate of adoption is similar to the rate of technological innovation, which gets faster and faster as time goes on. In fact, in as little as 30 years, we may reach the point of singularity, when computers and technology are so much smarter than us that all of the known laws of evolution break down.

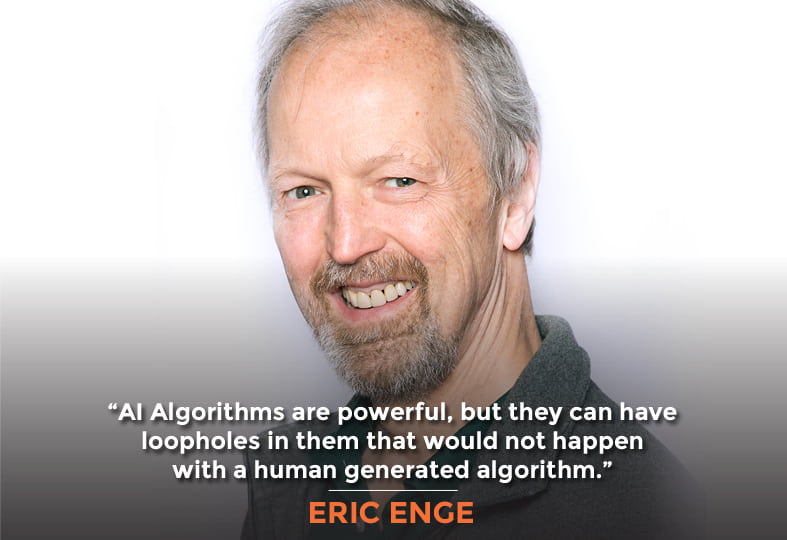

Whether you find this fascinating or frightening, you’ll love this conversation with Eric Enge. Eric is my co-author on The Art of SEO, as well as the US Search Awards 2016 Search Personality of the Year, etc. While we’ll dig into the possibilities for the future, we’ll also explore what it means for us today, and how we can stay on top of SEO and organic Google rankings.

In this Episode

- [01:04] – Stephan and Eric talk about how long they’ve known each other, and how they first met.

- [03:26] – What comes to Rand’s mind as something that isn’t common knowledge, or about which there’s a lot of misinformation?

- [07:54] – Stephan and Eric take a moment to differentiate machine learning versus AI for listeners who may not be familiar with the terms.

- [11:27] – AI doesn’t necessarily mean “artificial intelligence,” Stephan explains.

- [14:16] – The rate of adoption is increasing in the same way as the rate of the available technology, we learn.

- [16:04] – Does Eric think that SEO will be a very different ballgame in five years?

- [18:51] – Eric explains that Hummingbird was Google’s first foray into understanding natural language, and explains RankBrain and what it does.

- [25:35] – We hear about Eric’s studies to analyze the before-and-after results of RankBrain.

- [29:49] – Stephan and Eric talk about why it’s difficult for Google to deal with queries that it’s never seen before.

- [31:06] – Eric talks about some other studies he’s done analyzing various aspects of Google.

- [35:21] – How does this study differ from other studies on the same subject of featured snippets?

- [40:15] – We hear about why Google invests so much energy into featured snippets.

- [42:36] – Eric discusses his in-depth Digital Personal Assistant study and reveals that Google was the smartest.

- [46:27] – Voice search is part of voice, Eric explains, but there are also voice commands that aren’t searches.

- [50:30] – Eric talks about a set of steps that you can go through to get featured snippets.

- [52:29] – We switch topics completely, with Eric explaining how he went from multiple lead-gen businesses to running an agency.

- [55:16] – Is Eric able to work on the sites he’s been talking about, or is he too busy speaking at conferences and writing?

- [57:57] – Eric talks about how he balances his time at work, and how his management team functions in that process.

- [59:56] – Does Eric have any assessments that he uses when he’s on-boarding or considering people?

- [65:50] – Eric lists the ways that listeners can learn more or get in touch with him.

Transcript

If you care all about SEO and organic Google rankings then this episode number 143 is a must listen to episode. Our guest today is Eric Enge. He was not only my co-author on the Art of SEO—now on its 3rd edition and published by O’Reilly—he was also named as the US Search Awards 2016 US Search Personality of the Year and was also Landys 2016 Search Marketer of the Year. Besides being a co-author with me, Rand Fishkin, and Jessie Stricchiola of the Art of SEO, Eric keynotes many industry conferences and is the SEO of Stone Temple Consulting which is a 70% digital marketing agency based in Massachusetts. Eric, welcome to the show.

Stephan, thanks so much for having me. I’m looking forward to it.

Me too. We’ve known each other for years and years. Gosh, when was it that we first got to know each other?

Mid to early 2000s or something like that. I don’t know—a long time.

Yeah. There was that pivotal moment where I came up to you in the speaker room at SCS Chicago, talked to you into becoming an author on The Art of SEO.

I know. I remember it well because it was actually 2008, because the book was published in 2009. You talked to me about it and I had this sort of “Oh, crap!” moment going through my mind. I had to call my wife, I had to call the guy who was running the business, Stone Temple together with me at that time. “Okay, this is going to be a boatload of work. Do I want to do this?” I managed to persuade both of them that it was a good idea.

Indeed it was.

Yes, it was.

We’ve changed lots of people’s minds, lives, and businesses through the book in its various incarnations now on its 3rd edition. We keep getting asked, “When’s the 4th edition coming out?” because it was late 2015 and it is about time that another one comes out but alas, we haven’t even started the 4th edition yet.

Maybe we’ll do that soon but you’re right—about it changing lives. I can’t tell you how many people come up to me and tell me that it required reading for everybody in their digital marketing department or that it set their path for how they viewed SEO and took them down a very different way of thinking. It was very cool when you have that level of impact.

Yeah, for sure. Let’s talk about some things that we have shared either through the book or through our various speeches and blog post, and so forth. Some things that are not necessarily common knowledge. Maybe there’s a lot of disinformation or misinformation about some of these things. What would come to mind when I describe something like that—something that is not common knowledge or common sense, perhaps?

There’s so much of it in the world of SEO that the first thing to say is that, “Just because you read it on the internet doesn’t mean it’s true.” Choosing those words to be a little bit mirthful about it but in the SEO space because it’s not regulated and because there are no clearly published guidelines as to how it really should work. Google has their own guideline but they’re not really telling you how to do it. There’s the opportunity for a lot of misinformation. One of the popular ones is that machine learning has taken overall of search and it’s changing how SEO works. Machine learning is having a huge impact, no doubt. But the people who think that it’s dramatically changing what you need to do within SEO, don’t realize that the existence of a new tool set to a company like Google, didn’t at the same time, change the objectives of a company like Google. The objectives remain the same. They have something like 35%-40% of all digital marketing advertising revenue. They’re the number one player–between them and Facebook–it’s over 50% share. They want to maintain that share. To do that, they’ve got a lot that they have to do to keep the users that come to their site happy with their service. User engagement, user satisfaction, they’re really big deals. This is a lot of what they focused their machine learning energy on. As part of that, what you see here in 2018 so far, they made really big changes in how they’re interpreting users’ intent for a large number of search queries. There’s been a fair amount of shake-up in the search results. You see some big differences in how they’re responding to a lot of different queries and I happen to believe that’s really machine learning driven.

Would you say that machine learning or AI eventually will really be a huge component of rankings algorithms over Google?

I believe it will. To some degree, I think we can say that it already is a large component, no doubt. They actually still continue to have some human-generated algorithms which they aren’t likely to change soon but on the other hand, the machine learning algorithms are getting a growing foot hole. Let me explain though the reason why you don’t just simply throw out every human-generated algorithm and replace it with a machine-learning or AI generated algorithm. When you have something created by a machine, what you don’t have is any insight into what’s inside that algorithm and it makes it harder to test, it makes it harder to have intuition of where the edge cases are and where the thing can break down. You don’t really have this much of a feeling for what it’s doing. While the algorithms can be more powerful, they can have hard-to-discover and potentially big and important holes in them, that would not happen the same way in a human generated algorithm. There’s actually two camps out there in the machine learning community; those who believed that, “Yeah, we’re going to drive everything down the AI route,” and those who think, “Well, some balance between human-generated and the machine-generated makes sense.” One other aspect that I had mentioned too, which also plays in a very big way into this equation, these algorithms, they’re very dependent on a very rich set of data. For very long tail queries or even moderately long tail queries, there isn’t a very rich set of data available. To give you a different example for ranking websites written in Arabic for Saudi Arabia, you have a very small universe of sites that fit those classifications. There isn’t enough data for machine learning algorithm to work in these kinds of scenarios. You have to rely on human-generated algorithms in those. I don’t know how that goes away. You’re always going to have these sparse data kind of situations.

Well, let’s differentiate, first of all for our listeners, machine learning versus AI. Artificial Intelligence is going to outsmart us human race in a matter of decades. During our lifetimes, we will have computers thousands of times smarter than us which is pretty scary but also, an incredible opportunity potentially, as well. AI, in that kind of scenario, will be so much better in figuring out if a page is relevant, authoritative if it’s legitimate. AI, will be able to figure out pretty much anything, AI will be able to write a better symphony than Beethoven, it’ll be able to paint a picture better than Van Gogh. It will also be able to provide better search results. That’s coming eventually. Let’s differentiate machine learning from AI. Why don’t you take a stab at that one?

Sure. You’re right about the AI piece. You went right to how do we replicate human intelligence or rather create an intelligence with similar characteristics to human intelligence kind of thing. That’s the right general direction to think about when you’re thinking about AI. Whereas machine-learning is much more specific and tactical kind of thing. The best way to talk about that is for me is to illustrate with a simple example but it will capture the idea. Imagine you want to develop an algorithm for predicting the price of a house. You had the table of data, one of which showed the sides of the house and the other showed the price of the house. You have these two columns of numbers. You could fairly, easily, even by hand, generate an algorithm for that because you only have these two variables. The problem is that house pricing isn’t that simple—the lot size matters, how many bathrooms, how many bedrooms matters, the size and the styling of the kitchen matters. All those, by themselves, make the algorithm more complex. Then you throw on top of that, the geography of where the person is. You get beyond a place where human being can generate an algorithm which will properly model house pricing with all those variables. It’s too much data. But for machine-learning algorithms, you can feed it those tables of data. What it will do is it will build a very complex polynomial algorithm which fits it. The way it does that is it starts with a test algorithm. It calculates the level of error based on what the test algorithm predicts the house price will be based on input perimeter and then uses that error to improve its guess in a number of successive approximations over time. This is an example of one of the types of machine-learning. There’s a few other variants of algorithms. They all had a sort of a similar concept, that it’s very mechanical sort of thing going on that creates these algorithms. Technically speaking, machine-learnings are subset of AI but in some level, the way you described it a moment ago, machine-learning is pretty niche subset.

I see AI as an evolution beyond machine-learning where in fact, AI doesn’t necessarily mean Artificial Intelligence. How are we be able to differentiate sentient life as being natural or artificial when maybe a silicon substrate will be infinitely more intelligent than us? What’s the differentiator here? AI within the AI community is often referred to–when it’s fully evolved–as Autonomous Intelligence.

Yes.

Imagine if you could fit in to the next 20 years and they say that the singularity is coming in probably around 2045, 2055 maybe, as late as 2065 but certainly not any later than that, we will get the singularity which is when computers, technology, will be so much smarter than us that all the known laws of evolution break down. There are different kinds of singularities. There’s a quantum singularity where it’s a black hole, it’s where all the known laws of physics break down inside of black hole, and evolutionary singularities what we’re headed towards very fast. There’s really not a lot of checks and balances in place among the AI community in making sure that we build a friendly AI. Maybe there are some in place, but I don’t think it’s insufficient. Let’s say we go back in time, 100 years and this is according to Ray Kurzweil, “Look at the last 100 years of technology evolution.” Imagine back 100 years ago, looking forward, you could see that there’s a crowded sidewalk, people seem to be talking to themselves, there’s a little earbud in their ear, or maybe a Bluetooth thingy—something that lights up and they’re just randomly talking to themselves while they’re talking to people halfway around the world on their phones. That seems like science fiction, like fantasy, that’s the world we live in today. That kind of significant amount of evolution happening in technology would actually fit into the next 20 years because of something called the Law of Accelerated Returns.

Yup, agreed.

We’re speeding up in our technology advances and in actuality–at today’s rate of change–20 years is what we’re looking at to get the last 100 years of evolution. But in actuality, because it is continuing to speed up, it would actually fit into the next 12 which is mind-blowingly crazy, insane, to think about that.

Another way to express the same thing just to reinforce what you’re saying is, it took roughly 100 years for a telephone to get 1 billion users. It took 50 years for the television and eight years for Facebook and smartphones to reach 1 billion users. The rate of adoption is also accelerating the same way as the rate of available technology. There’s no evidence that the rate of increase is slowing down. This is the crazy thing. To think about how quickly all that’s going. You’re also right that we’re ill-prepared. We don’t know how to contemplate the advent of the singularity. The interesting thing though is if you went back 15 years ago and you looked at when Ray Kurzweil predicted the singularity was going to come, I think he was saying 15 years. Not to pick a bone with Ray Kurzweil, he’s amazing. Clearly, you’re right, the notion of singularity is coming. There’s usually these new kinds of technologies, some level of complexities makes it happen somewhat slower than we thought it would. We had the year of mobile six years in a row before the year of mobile actually happened. There are some layers to these things that people don’t even understand what they are yet. I’m totally onboard. It’s definitely coming and we’re not well-prepared for it.

Yeah. Wouldn’t you say that in five years time, SEO is going to be a very different ball game—one that’s very much driven by AI because Google is going to be very much driven by AI?

Well, by then, we probably will have AI solutions that know how to generate good content. That itself has been through amazing strides over the past two or three years even. It used to be a machine-generated content. It was unreadable.

Markov chain and all the spin content and everything, yeah.

Yeah. Whereas now you could get, you can still recognize it but it’s getting decent, and that capability’s going to keep growing. You get to the point where you get really insightful, intuitive, well-written, machine-generated content that addresses whatever question the person on the other end of the transaction wants to get an answer to—that is a game changer and that will become a very big deal. The game will all be about how do you set up your system to cover the right scope of topic that relate to whatever it is your business is. I’m still in the year, by the way, where I’m assuming where we have businesses that are human operated. I’m staying in the 5-10 year horizon era, not going out 40.

Yeah.

The machine intelligence on the other side will be the one that’s trying to interpret and tell us how to figure out what the best things to show in whatever serves as a search engine will be at that time. It’s amazing to think about all these stuff and how quickly it’s going. It’s crazy.

I think it’s important that our listeners understand that things are shifting pretty significantly because of technology advancing and the Law of Accelerated Returns which includes Moore’s law, Metcalfe’s Law, etc. To still be in here and now, focusing on the three pillars test SEO on creating really high-quality content, making sure it’s remarkable, link worthy, and using the various tools to do competitive intelligence, keyword research, all that sort of stuff. That’s the here and now stuff but it’s a totally different world. All bets are off, let’s say five years from now, that we’ll still be doing those same sorts of things because in a world of an AI, how do you outsmart an AI? Only with another AI. It’s going to be a very interesting future like the Chinese curse, “May you live in interesting times.”

Yeah, totally.

Let’s talk about some of the algorithms that have come to pass and the implications of these algorithms for example, Hummingbird, Panda, and Penguins, etc. Let’s start with Hummingbird.

Sure. Hummingbird, basically was Google’s first update into getting a better understanding of natural language—that’s kind of what it get really known for. A little less known was the same time, Google restructured the way their overall algorithm infrastructure was put together. What I mean by that is, was it 2015 when Hummingbird came out? Am I remembering that right or was it earlier? But whenever it came out, all the way back to 1998, Google had never restructured sort of the search engine part of their algorithm. A couple years earlier when they released the thing called Caffeine, that was about improving their crawling structure but Hummingbird was a rewrite of the search engine core and in it they wrapped the natural language capabilities and made the overall algorithm more flexible for plugging in, removing, updating individual pieces, to allow them to adapt the core algorithm approach more quickly. That was really Hummingbird. There were definitely some significant moves toward better natural language processing but they’ve done a lot more on that front since then.

Hummingbird came out or they announced it in 2013. A couple of years earlier. We would jokingly get asked the question like, “Who got the idea of the Hummingbird first?” You guys, as authors of SEO with a cover having a hummingbird on it, or Google. It was us. They stole the idea from us because we had the cover in the very first edition in 2009.

That’s the reason we get those lucrative royalty payments from Google every month.

Yes, right. What have you been smoking? There’s been significant evolution in the way that search queries have been interpreted and user intent has been identified by Google. Hummingbird was a huge innovation and then on top of that, what? RankBrain?

\Well, yeah. To stay in the same direction, yeah, RankBrain. Although RankBrain by itself, the edition 1.0 of RankBrain was misunderstood by many for couple of reasons. One, Google picked the pompous name of RankBrain. To set the record straight, it didn’t become the whole Google algorithm, it’s not the whole Google algorithm. It’s an important piece but that’s different than being the entire algorithm. The person that got interviewed by Bloomberg it was RankBrain, that was 2015, if I’m not mistaken. I may have that one wrong too but the exact years are not that important. They basically said that it was the 3rd most important ranking factor and they set off a huge firestorm of how everything had changed. More specifically, what RankBrain actually does the original RankBrain 1.0 algorithm actually does is it looks at your query. It allows the traditional ranking algorithms to run and calculate an output as to what it proposes the results to be. In parallel, it does what’s called the similarity vector analysis to compare those specific user query, define queries of very close similarity to it. Let’s say, historical search results performance, and based on that historical search result performance, the side weather to tinker with the output from the regular search algorithms. This is the reason why it works so well on long tail queries and has little impact on head term queries. I guess the more traditional algorithms do really well on head term queries. It’s easy to tune that for that. But the long tail queries–which they don’t have any prior search history on–just being able to look at and see some very similar query, not identically worded but very similar, and look in the past state, the three users who searched on that, they click on the second result, never on the first result, and maybe one click on the fourth result, and the intent implied by that means that maybe this query is more about what we see in the second or fourth result in those historical queries than what we showed as the first and the third—let’s make it change. That’s what RankBrain 1.0 did. I’m going to give you a really good example because it comes from our own research. If in 2015, in June of that year, you search on Why are PDFs so weak, the first result you got was a PDF file talking about why the Iraqi resistance to the coalition invasion was so weak. It’s complete mess because what the user really wants to know is they want to know about the security aspects of PDF files. In fact, the rest of the results or most of the results in the page were all about PDFs about the Iraqi invasion being weak. You fast forward to a few months after RankBrain came out and the first result is in fact, a spot on, targeted piece of content talking about encryption, capabilities, and limitations for PDF files. Then, I’ll fast forward to today and the first five results are on that topic. What you see is over time, they’re tuning the results and they’re getting far more on point. This is a perfect example of RankBrain 1.0 in action. I happen to think that in 2018, we’re seeing what I’m personally calling a RankBrain 2.0 which might be from an internal Google terminology perspective, incorrect. The algorithms, they appear to be tinkering with now, feel like they have some sort of similar aspects to it, to the original RankBrain. But they’re more targeted in head terms.

You did some studies, right?

Yup.

To analyze the before and after of–actually, I think Hummingbird was one of the studies that you did. You did a before and after—

No, it was RankBrain.

It was RankBrain? Okay.

Yeah, that’s where we discovered the Why are PDFs so weak query. I can’t remember the other queries right now, but there was one where tourists was looking for information about Malta and where something was in Malta, and pre-RankBrain, what Google will return was links to tourist sites of Malta. Yet, the verbiage in the query has enough information that a human easily recognizes that a good response would be a map. In post-RankBrain, you get a map. This tuning wasn’t really just about traditional web search results, they introduced other elements. Another one, there were movies where people would be asking about the rating of the movie and Google used to return these regular web search results. Very shortly after RankBrain started getting knowledge boxes with the rating of the movie. They don’t even need to go to a website. They just gave you the answer. This thing was cross many different aspects of Google algorithm. You can actually dig into local search, knowledge boxes, and decide where to pick out the best answer—the more directly answer to the users query. It was pretty cool to see. We identified in our data, we just did some poking about 150 queries that we thought that Google got overtly wrong in July of 2015 or June of 2015 or something. A month or two after the release of RankBrain, we ran those same queries and found out that on half of them Google’s understanding of the intent had materially improved. That’s a big win, if you could fix half of your overt misunderstandings.

That’s a big win, if you could fix half of your overt misunderstandings on Google. Share on XHow many queries did you run in the study?

The big part of the study was finding queries where Google was initially confused in what they provided as results. Keep in mind, we already had these queries in our own databases. We weren’t cherry picking queries knowing they’re going to run RankBrain. We really only found about 150 where we were able to identify that Google was clearly confused about the intent of the query. We looked through tens of thousands of queries to find those 150 examples where Google just literally blew the user intent entirely. They fixed about half of them.

How did you find those needles on the haystack? Was it a lot of manual labor? Did you use some sort of an AI or something?

For that, it was manual labor. I wished I could say that we had enough AI to better determine what would be a good answer to your query that’s been Googled in. We do a fair amount of experimenting with machine-learning type stuff here. We’re not at the point where we’re better than Google yet.

I think it’s just a matter of time before Google starts answering a lot more of the users queries with a direct answer because if an AI can write content as well as a human or good enough, then why couldn’t Google have an AI that writes the answer on a fly and bypass all the websites and just give them an answer.

The only thing it requires is that they have been able to get the information in a way that it’s not being derived from a third party and therefore subject to some level of copyright. That’s the issue that they have to get around from that perspective.

They’ve been doing pretty well with getting around that copyright issue so far, I think. They have been leveraging everybody’s IP for pretty much their entire existence and not paying them for it. I also wanted to point out that Google has a lot of queries coming in that it’s never seen before. What’s the percentage? 15% or something, I think?

20%, I think. It seem to creep back up again. It might be because of more voice search queries that the number has crept back up from where it was. It used to be 25% at one point, all the way back when they’ve announced universal search, and then it dropped to about 15% over time and has crept back up in their latest testament.

Yeah. That makes it very hard if you are basing your algorithms on historical data when you’re seeing all these new searches that never come across the Google data repositories before.

Well, that underscores why RankBrain was so important. People don’t like my description of it. It’s got to be more important than that. You don’t understand. This is huge percentage of all queries that they’ve never seen before. If they’re improving all of those, or significant percentage of those queries, it’s a huge improvement to the quality of their search engine.

Yeah, for sure. Tell me about some of the other studies that you’ve done analyzing various aspects of Google.

Happy to. One of the ones that we’ve done more recently–well actually, we’ve been doing it for three years now, we do it every year–there’s one focus on digital personal assistance. There’s that one. Then, there’s a related one that I’m actually going to talk about first and I’ll get back to digital personal assistance, is one where we look at what they’re doing with featured snippets. Let’s talk about the featured snippets one first. This is one where we look at 1.476 million queries—sorry, the engineer in me needs too much precision, I know.

Irrelevant precision.

Exactly. Thank you for that. I could’ve just said 1 ½ and nobody would’ve complained. We literally built a database with 1 ½ million queries which we thought would be likely to generate a featured snippet or at least be the kind of query where you might get a featured snippet. We pulled about half a million of those from Google suggest boxes by doing things like typing in, “How to…” to seeing what the autocomplete was and pulled a bunch of queries that way. That was one way we did things. But the other way we did it, again involved a certain amount of manual labor. In the future, I’ll use an AI to do this but back five years ago when we started doing this it was manual. Consider this idea, what if you built the list of 500 famous building? Then, you decided on eight questions you might ask about famous buildings like, “When was the Empire State Building built? Who was the architect of the Empire State Building?” These kinds of questions—“How tall is the Empire State Building?” You have maybe eight questions about each building. Then, you replicate that for historical events, for famous people, books, movies, and you pick all these various topics where there’s a very simple informational response possible which would be fairly simple to drive. We built up another million queries through these kinds of things. From how you describe it, you can now internalize for yourself, “Oh, okay. Now I can see why Eric is saying that Stone Temple thought that this might be likely to generate a featured snippet response.” We run those 1.4 million queries through a process every year where we analyze and find out how many featured snippets they have, how many of them have featured snippets, how many of them have knowledge panels which is sort of the information box on the right, or knowledge boxes which are queries in line with the regular search results similar to features snippets but without attribution, so they’re essentially public domain content. Not only that, we looked at, “Well, okay, it’s a featured snippet. What are the features? What are the images? Do they have titles? Does it have an embedded video?” These kinds of things. “What’s the domain authority of the site?” and any number of metric—literally hundreds of metrics for each page that we pooled. We pool all these data and every year we look at this and we do it for couple of reasons. One, this is to monitor the progress of Google and see how their usage of these features is increasing. It’s been a pretty steady increase over time. Also, to drive information about what causes Google to pick a particular page to show on a featured snippet. To understand that and develop some science around how that’s happening. It’s fair to call this a big data exercise because you can’t do it in excel. It’s not a huge data exercise but it takes some real work to do all these stuff. It yields really great data for the things that Google is doing with featured snippets. Also, to teach you some things about what you might do to get featured snippets, if that makes sense.

Yeah. How does this study differ from some of the other big featured snippets studies like the STAT Search Analytics one?

There are couple of other studies out there that focus on some different aspects of things. One of the ones I know about is really focused on trying to analyze the web pages that generate featured snippets, and that was done by AJ Ghergich, pooling data from SEMrush actually, which has some pretty good information on featured snippets. That’s like you use paragraph tags, you use bulleted list, and these kinds of things. That can be fairly useful. The STAT one is also trying to approach the general angle of how to get more featured snippets. In my experience looking at this, they aggregated at fairly high level, what the behavior is. What we have seen is, in our opinion, a bit too simple to model. What we’ve seen is that if I’m in the apartment’s web space, the aspects of the content Google is looking for is probably much more weighted towards bulleted list. Yet, if you look at date and aggregate, it will tell you the paragraph tags or the dominant coding structure you should use to get a featured snippets, Google takes most of their snippets from paragraph tags, it’s absolutely true, by the way. It’s more than half, in aggregate. But in the apartment space, it’s 70% of bulleted lists.

Yeah. But doesn’t the STAT study look at different types of search queries and compartmentalize them? Maybe not by the industry or topic but at least by things like qualitative queries, subjective queries, that sort of thing.

They do and they do a very nice job with that. The stats study and the one that I’ve mentioned from SEMrush and A.J. Ghergich and what we do, each kind of hit different parts of the puzzle. I think that’s actually really good. They’re all really good resources. If you’re really interested in featured snippets, it’s really worth looking at all three of them because they hit different parts of it. That makes for a greater hole. It’s worth though taking a moment because I think we should talk a little bit about, “Why do I care? Why do I care about featured snippets?” Different data that we have which has not been formalized into a study because we haven’t done it to scale, but we’ve done it across a number of clients. The great majority of the pages that we’ve looked at, they get identified as a source of a featured snippet. Those pages when they get identified as a source of a featured snippets increase in traffic. The fear people have, “My information is shown in the featured snippet. Yeah, I get the link. Google is stealing my traffic.” But what we’re seeing, more often than not, is a significant traffic bump often on the order of double.

But with an important distinction here, they’re still getting more clicks on their organic listing. Let’s say, they’re number two or number one or whatever for a particular query and that query have a featured snippet. They have the position zero featured snippet with a link there too. They’re getting more click through on the organic listing that’s below the featured snippet than they are on the featured snippet itself. Right?

Yes. I’m okay with that.

But I think there’s some important distinctions here that we need to bear in mind. It’s not just a binary question here like, “Is this a yes or no?” There’s a lot of distinctions here that are important to know. For example, you might want to have, instead of a paragraph snippet, you might want to have a list snippet because let’s say, you’re in the apartment space, there’s so many data points to share. At least, a bullet list would be clear than just a run-on kind of paragraph. But then, why not use a table instead? A table snippet would be way more superior and conveying all these tabular data around the number of bedrooms, bathrooms, and the size of the square footage than the price and all that. Big problem though that the number of table snippets are shrinking because this is about voice search that’s why Google cares. Google’s not going to read off an answer that’s a big bunch of rose in a table. It’s like 16%, I think is what STAT found to be the current number of percentage of table snippets. Out of the total, 16%, that’s it.

Yeah. You’re absolutely right. You created an important tie there. People wonder why is Google is investing so much energy in this featured snippet thing. One other element to add to the featured snippet story before I tie it back to voice and that is, Google is doing an amazing amount of dynamic testing of featured snippets. We have a study that we’re about to release that we did in partnership with STAT, to measure the level of thrashing of featured snippet listings. In case of some queries, it’s quite high. There’s one query that we found that in a 105 day period of time where we’re monitoring it daily, used 12 different websites to show the featured snippet result. That’s the extreme outside example. But Google’s doing a tremendous amount of testing because they’re developing the algorithms to enable them to determine the single canonical answer to a query. The reason why that’s so important is because of the difference between a web search environment and a voice search environment. In a web search environment, if I give you a set of search results that maybe have videos, images, maps. But generally speaking, I get 10 answers on the page. The perfect answer for me is not number one but in position number two or three. That’s not a horrible result. As a user, I find what they want. But in the voice world, there’s only one result. The way I like to put it, we just went from position zero in web search to position only in voice search. Google has to get that right. They have a heavy dependency on featured snippets from many different kinds of queries and they’re investing a ton in that area.

Bottomline is, if you are not investing in terms of your time, money, and energy, in taking some of these featured snippets, and taking from your competitors, you’re going to miss the boat.

You’re right. It’s absolutely what’s going to happen. This actually leads to our next study that I wanted to mention which is what we call our digital personal assistance study. This is one where we took an Amazon echo device. I’m not going to say the name of the personal assistant thing because I don’t want mine that’s sitting here in the office next to me to start talking to us. Google assistant running on Google home. Google Assistant running on a smartphone. The Cortana, personal assistant running on the Harman Kardon Invoke speaker, and Siri running on an iPhone. We took all five of those scenarios and we literally hand spoke, so to speak, 5,000 different queries to each one to measure how accurate they were and we recorded the data from those queries. We measured things like, did it attempt to answer it? If it attempted to answer it, was it a partially correct answer? Fully correct answer? Was it blatantly wrong? Did it tell a joke? Did it show images? Knowledge boxes? Featured snippets? All of that. We tabulated all that to get a score of which was the smartest. To go straight to the stunning conclusion, Google Assistant is the smartest, still. In the past year though, year over year, our friends at Amazon made a lot of progress in closing the gap and get a lot closer to where Google is. There’s still a gap but they did a tremendous amount to close the gap. Dark horse that people don’t talk about, Cortana also made a tremendous amount of progress. If you go look at Bing, they make a tremendous amount of use of featured snippet. They’re running the same ball game as Google is in their ongoing effort to catch up.

Personal digital assistants are really going to be our primary interface to our computers. It’s like the advent of the Graphical User Interface, the GUI, changed everything. We used to type in a command line prompt like c:/ sort of thing or on Unix programming in Bash or C Shelle or whatever, and now we just point and click. The same level of disruption is going to happen probably even greater by the advent of the personal digital assistance when they have taken hold. Linguistic User Interface or LUI, will be our primary interface to computers. I think it’s so important for our listener to grok this and a great write up of the future—the near-term future—of personal digital assistant, personal smart agents, is five-part article series written by John Smart. A futurist and just a brilliant guy. Also, somebody, I call a friend. He’s the author of The Foresight Guide, it’s an incredible series. Are you familiar with this particular write up?

I’m familiar with it but I have to confess that I haven’t read it. It’s something that I’ll fix because you’re prompting me again to do it.

I know you had a talk at SMX Advanced with Duane Forrester and I did send them the link to that. He just devoured the whole thing. He was so happy to have gotten that. I think you’ll find it quite enlightening. Anyway, now we got to worry about voice search. It’s not really voice search, it’s just voice.

Correct. These is one of the things that people get wrong. It drives me a little bit nuts because we’re all talking about voice search. Voice search is a part of it but if I pick up my iPhone right now and say, “Call Elizabeth Dill,” it’s my wife, that’s not a search, that’s a command and I’m using voice to do it. It gets to what you’re talking about, this whole idea of LUIs, Linguistic User Interfaces. I’m getting the computer to do something for me through a voice command. Some of those voice commands might be searches like, what’s a good recipe for how to make harissa sauce. Sorry, I just threw that one out because my favorite sauce to put on slightly burnt brussels sprouts, it’s amazing. If you haven’t had it, you should try it. That was definitely the site. Two other things I want to point out about the voice environment which would be good for the listeners to key in on. First of all, you’re going to realize that your personal assistant, it doesn’t live on your iPhone, it doesn’t live on your android device, it lives in the cloud. You will, in very short period of time, be able to access your personal assistant through any internet connected device just by talking to it. Google already has the technology to know–when I’m talking to Google assistant on my smartphone or Google assistant on my Google home device–to know that it’s me. There’s no login required. In theory, it’s not very far away, a year or two, I’ll be able to pick up your phone and talk to Google assistant and it will know that it’s me and not you—that technology is there. This whole concept of it lives in the cloud and it’s separate from devices is really important because it gives you a singular user interface, a singular LUI regardless of device. This is a big part of what it’s going to make it grow so fast. Then the other piece, is basically going to be the way that you will accomplish anything you do online. We’ve already seen the demos of these. It’s like managing your travel reservations or getting your restaurant reservations. Or commands, it will be able to do things like, “Hey, Google. I want to send flowers to my wife. I want them to arrive on June 25th at the home address. Make it roses. Bill it with the usual credit card.” You noticed how there was no navigation in what I said? It wasn’t some step-by-step process. I just described it in a very human way what I wanted. The next time I say it, I’ll probably order the way I put things together completely differently and it will still be able to parcel all that and bingo. It will happen. The roses will arrive and I’ll get some confirmation back and that’s the end of the transaction.

Google may not even need to deal with a computer to make that arrangement. It could pick up the phone and call the florist and speak to a human, make the request, give the credit card details, get the confirmation over the phone, and transaction complete because the florist happens to not have a website.

Right. Yeah, absolutely.

Pretty basic. I love that Google IO video where they demonstrated this in action. It was pretty crazy. If we take just the most tangible practical component of all these for somebody to implement today, it’ll probably be to do some featured snippets optimization, try and get some featured snippets, so that they will appear as the voice search results for various queries. Do you have a particular document you want to refer our listeners to and how to do it?

I’ll be happy to do that. The 3rd or 4th edition of our featured snippet study, don’t remember which one but I’ll look it up and I’ll give it to you, actually talks about a set of steps to go through to get featured snippets. At a very high level, pretty simplistic, find out what the commonly asked questions are for your potential prospective customers, brainstorm related subsidiary questions to those commonly asked questions, create a piece of content that addresses both the core question and the related question, and then, make it easy for users and Google to find and understand. That’s a very high level approach to it. Then the next step of that is to realize that there’s a difference between getting a featured snippet and keeping one.

Yeah, it’s so volatile as you said.

Right. Exactly. You got to understand that volatility makes sure your business strategy takes that into account. If you get one, kudos to you even if you end up losing it a few days later.

Or a few hours later.

Yeah, you could. You absolutely could. But then, that means you got close. Now, tune the content and keep doing the thing and get to the point where you really get it. I will give you a link to an article that talks about how they do this.

That’d be great. I also have a search engine land article about how to steal featured snippets from your competitors, how to get the list of the competitors’ featured snippets using a tool like SEMrush. Now, let’s completely switch topics because I know we’re getting close to the end of the episode here. Share with our listeners how you went from an EDU-lead gen based business or multiple businesses where you had, I guess, three successful exits to running an agency.

There were sort of two things that happened. One was that I had a certain amount of success in the EDU lead gen space. All of that success was done in terms all of the exits we generated were things which were really whitehat in their approach. What happened is we got to a point where we actually did in fact have a fourth venture we were beginning to do but it just got continually harder to create market-leading content which is how we were successful with seeing teams of people which is what we were doing at that time. The expense of being successful on that went off. The other thing that happened is between the partners I had in those businesses and myself. We had a divergence in viewpoint as to where the market was going. I happen to believe that it was going down a direction where it was all going to be about quality and authoritativeness. They wanted to try some different things. We just had a sort of divergence of opinion of that. All along while I was doing these lead gen businesses, I was actually doing some consulting because I was solo guide for most of the time so I had my foothold in the consulting side of things. That kind of grew, even while I was doing these publishing companies. It got to a point that I said, “Yeah, maybe I’m going to make the consulting the main thing.” Not only is it time for me to do something different but it’s really different because one moment you’re generating royalty streams effectively, annuity streams, if you will.

Like royalty streams from the book publisher O’Reilly? Or maybe a little bit bigger?

No, no. We ain’t living on royalty stream from that. Royalty is not quite the right word. You have a website asset to stay around money even if you don’t work today.

Yeah, passive income.

Yeah, passive income. Thank you. That’s the right phrase. I had all these passive income businesses, they’re very successful. It kind of got to the point where it felt like if I should do something else. My personality, if I’m going to do something else is going to be completely different. Consulting, there’s nothing passive about it. You’re there, like you’re hacking into that day-to-day. We’ve got a 70% agency and we worked with 15 Fortune 500 companies, some of the world’s traffic websites, we get to do some great stuff—completely different thing for my brain to work on. It’s fun.

Are you able to work on those sites or are you busy with speaking in conferences, writing and all that, and really don’t have time to get stuck into real world client problems?

For problems of strategic nature or particularly technical problems especially large clients, I do stay involved. What percentage of that time? I don’t know, 40% or 50%, it’s somewhere in that range. The rest of it is sort of the public face stuff, so there’s some balance there. By the way, I’m really happy that I can keep my fingers dirty, that really helps.

It is really important and as I run my agency in that concepts, I got farther and farther away from that where I used to. The reason why GravityStream, I was able to invent that because I was solving a real-world client problem. I was at Coles department store as a client. They were having a problem that I had came up with a solution that was based on reversed proxy. Then, another client that I was also on-site with, Northern Tool had an incompatible version of IBM HTTP server that we couldn’t do the rewrite rules in time for their code freeze deadline for the holidays. The only way around that was to use a reverse proxy which I already perfected in a development environment few months earlier for Coles. That was passive income. We were printing money because we were charging on a cost-per-click basis for SEO and interjecting all these SEO goodness without having to do a major invasive surgery to their e-commerce platform. But that would not exist if I had not had that client problem and I worked on it. By the end of it, like 2009, I was close to exiting, we sold it at the very beginning of 2010, I wasn’t working on any client. There’s no time. It was very frustrating. I was just starting the initial conversations with new prospects, I was speaking in a lot of conferences, I was working on the book, writing articles, blogging on my own site, and it’s like, “I don’t have any time for hardly a phone call with a client.” I’d hand him off immediately. It kind of sucked. I know that clients didn’t appreciate just not having access to me and I was glad to just completely change that model when I sold Netconcepts. Now, my clients only work with me but it’s not scalable. I can’t have dozens and dozens of clients like you guys have.

In the way, we try to balance that here, I got an amazing management team. There’s my COO, also happens to be my wife, but she is an amazing COO, Elizabeth Dill. There’s the rest of the management team that makes so much stuff go really, really well here. It gets back to the old maximum when you try to build a business. Make sure you’re hiring people smarter than you. I don’t mean to imply they’re smarter than you at everything but they’re smarter than you in what they do. Then you rely on them and you get those mechanisms to work. I’ve worked very hard to get to a point where I can still keep my fingers dirty but also do the speaking, the writing thing, and set a balance there.

It’s not just about having people smarter than you, surrounding you; it’s also about putting those people on the right roles. What Jim Collins would describe in Good to Great, is putting right people in the right seats on the bus.

Yup, absolutely. It’s incredibly important. You got to give them a chance to succeed. It happens all the time, that really brilliant people get put in, they get in the wrong role, and then it doesn’t work. You got to figure out what the right spot is and then give them that spot. You got to be willing to let them run with it a bit. What I tell the senior staff here is, “It’s your department and I’m here to support you however you can. But don’t come to me asking me how to solve every problem in your department. Come to me and tell me how you want to solve it. Then, if I have a reason to disagree or save you from making a big mistake, for some reason, then I’ll give you that input.” That’s part of what we try to do here.

Do you have any kind of assessment that you put these folks through when you’re onboarding them or when they’re still a candidate and you’re considering them?

We actually use StrengthsFinder.

I love that one.

That’s one thing that we do.

Did you find that out from me? Did I recommend that to you and that’s how you found out or what is somewhere else?

I don’t think I got that one from you. I’ve gotten so many different things from you, Stephan. But I think that one came in from someone else in the company here. It’s a wonderful tool. It’s pretty common. If someone comes to my office, and it’s not someone I talked to regularly, I’ll pull out our database of people here and I’ll take a look at their StrengthsFinder profile, “Oh, Analytical. Okay. Good to know that.” Sort of get the characteristics of them. It will change the way I communicate with them.

Yeah, smart.

I make an effort to frame things in there, the language, it’s a wonderful tool.

Yup. Any other tools? StrengthsFinder 2.0 is a book that comes with the assessment for free or you can just go directly by the assessment. But if you are not familiar with this tool just buy the book either in Kindle form or in paperback, I guess it might be in hardback. Then, we’ll include a test which would take about 20 minutes for the candidate or yourself to take. Any other tools relating to assessments?

In terms of assessments, I think that’s pretty much it. The other interesting tool that I might mention that we’ve found a boom in the company is the one called 7Geese—I guess it fits in the assessment category. It’s our way of managing interactions in terms of career monitoring, and career progression. We use 7Geese to do things like provide feedback, give people recognition, manage their reviews, set up a process for one-on-one where people set objectives for their careers, you can measure progress against those objectives. It’s a neat online tool where you can really manage employee progress over time. We’ve built the culture around using that to be the way that we create structure to continual assessment of how people are progressing in their career objectives and fitting in the organizational role. That’s a pretty cool one that we use.

I’ll give you a few more. You should try these because I use them all the time with new hires and it’s amazing. I do DISC assessment that you can actually take for free. He provides that for free and you get this amazing, maybe 15-page PDF report with so much insight on the person. First person to take this should be you so that you learn about yourself and get your team, your significant other, your kids, and everybody to take this. Another one is Demartini—Dr. Demartini’s Values Determination. This is very helpful. Again, it’s free. It’s on drdemartini.com. It’s very helpful to understand the hierarchy of values of a new hire. If let’s say, family is number one, travel is number two, that’s going to be a vastly different person than if, let’s say, church is number one for somebody and their kid is number two. The idea is to map the person’s job duties and responsibilities to their highest values. They can see the direct connection like, “Oh, if I can do this all day for you then that’s actually helping me be more connected to my church.” Or, “This is helping me to learn how to be a hacker of travel deals. I want to travel the world and this is really cool stuff.” That’s another one. Fascinate is Sally Hogshead test. I had her on Get Yourself Optimized Podcast. I’ve had her on this podcast and it’s an amazing episode, I strongly recommend it. This is a different kind of test because it’s not about how they see the world; it’s about how the world sees them. They’re 62 different archetypes. It’s pretty fascinating. The last one I’ll give you, Eric, is Kolbe. Have you heard about Kolbe?

I have not.

Okay. Dan Sullivan is big into Kolbe assessment that’s how I found out about it. He’s a business guru—amazing guy. Kathy Kolbe was the creator of this. You can find out if the person is a fast start or they’re more of a finisher, one of these different again, kind of archetypes. That is incredible powerful as well. They’re all different enough and valuable enough that I recommend you have at least your important people go through a whole of them. They’re as valuable as StrengthsFinder.

Yeah. Cool. We’ll take a look in all of those because we’re always interested in these ways of evaluating things and thinking about things and really putting them into work with people. They don’t realize how much value you can get out of these different ways of analyzing things and understanding what makes people tick and what makes them different. Awesome.

Alright. We are out of time. How can our listeners get ahold of you? Where should we send them?

I’ll give you three different ways. www.stonetemple.com is the website for people interested in that. Twitter account is @stonetemple. I’ll be happy for people to email me to at [email protected].

Perfect. Thank you so much, Eric. It was a blast. I’m sure that our listeners are now armed with more information that they can use to improve their rankings in Google. Thank you for that.

Alright listeners. Now, we’ve got to take some actions on what you’ve learned and to do that, go to marketingspeak.com. That’s all at marketingspeak.com. Go check it out and we’ll catch you on the next episode of Marketing Speak. This is your host Stephan Spencer, singing off.

Important Links

Connect with Eric Enge

Apps/Tools

Articles

Book

Business/Organization

People

Your Checklist of Actions to Take

- Prioritize user engagement and satisfaction before I start optimizing my website.

- Brainstorm what the frequently asked questions are for my prospective customers.

- Create high quality, remarkable content that aims to answer my prospects’ and readers’ questions.

- Be prepared for the machine learning algorithm but don’t believe everything I read. Always research trends and updates on my own.

- Make sure that my website is optimized for mobile given that most online users use mobile phones.

- Stay relevant and keep evolving my SEO knowledge by doing research, taking advanced classes and joining masterminds.

- Remain intuitive on human behavior by continuously getting customer feedback, staying on top of current events and following the latest fads and trends.

- Aim for rank “0”, also known as featured snippets. Learn how to achieve this through Stephan’s Search Engine Land article.

- Explore voice search optimization and see how I can incorporate these strategies into my projects. More people are using their voice instead of typing their queries than ever before.

- Grab a copy of Eric Enge, Stephan Spencer and Jessie Stricchiola’s book The Art of SEO.

About the Host

STEPHAN SPENCER

Since coming into his own power and having a life-changing spiritual awakening, Stephan is on a mission. He is devoted to curiosity, reason, wonder, and most importantly, a connection with God and the unseen world. He has one agenda: revealing light in everything he does. A self-proclaimed geek who went on to pioneer the world of SEO and make a name for himself in the top echelons of marketing circles, Stephan’s journey has taken him from one of career ambition to soul searching and spiritual awakening.

Stephan has created and sold businesses, gone on spiritual quests, and explored the world with Tony Robbins as a part of Tony’s “Platinum Partnership.” He went through a radical personal transformation – from an introverted outlier to a leader in business and personal development.

About the Guest

ERIC ENGE

Eric Enge was named by the US Search Awards the 2016 US Search Personality of the Year, and was also the Landys 2016 Search Marketer of the Year. He is also co-author of The Art of SEO (published by O’Reilly), along with Stephan Spencer, Rand Fishkin, and Jessie Stricchiola. Eric keynotes many industry conferences and is the CEO of Stone Temple Consulting, a 70+ person digital marketing agency based in Massachusetts.

Very interesting and nerve wrecking view on the impact AI is going to have on SEO and content creation that bit about Google answering questions directly sent chills up my spine, but now that you pointed out I think it is very probable. Anyway, great content from all the episodes I’ve heard so far. Cheers.