You might not realize the difference between HTTP, HTTPS, and HTTP2. That sort of information is generally reserved for SEO experts. But understanding the difference is more important than ever. Chrome users will soon be warned that sites using HTTP are no longer secure. How can you protect your site? You can make the switch to HTTPS. At least, that’s the short answer.

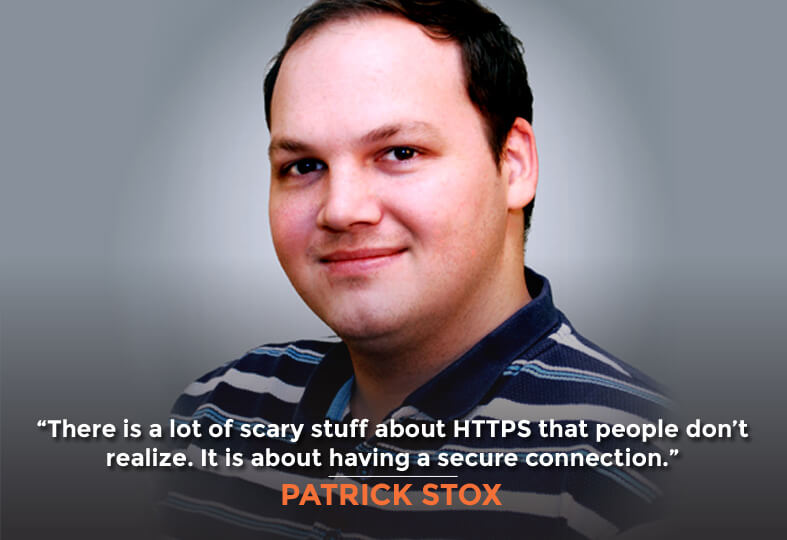

Join me and my guest, Patrick Stox, for many more details on HTTP. You’ll learn SEO and development information that you can use to make your site run smoothly. If these aren’t things you can implement yourself, you’ll learn exactly what to ask your developer to do. Patrick is the technical SEO for IBM and the organizer of the Raleigh SEO Meetup. Patrick and I both recently spoke at Pubcon, where we recorded this episode.

In this Episode

- [02:32] – Patrick talks about the massive size of the IBM website. He then discusses some of the big issues in maintaining a site that big from an SEO perspective.

- [04:58] – We hear why one should use hreflang tags on their website.

- [07:05] – What are Patrick’s preferred tools for crawling?

- [07:44] – Stephan compares building a website without an SEO involved to building a house and then realizing you didn’t specify that you needed electrical wiring.

- [09:34] – What sorts of documents does Patrick use to get everyone on the same page? He and Stephan talk about the problems with having extensive documentation.

- [14:26] – Patrick talks about the potential issues with HTTP, and explains why seeing ads on a site that doesn’t normally have them is potentially really scary.

- [17:09] – What are the reasons you need to go from HTTP to HTTPS even on your personal blogs?

- [19:13] – HTTPS is a small ranking factor in SEO, Patrick explains, which is basically a tiebreaker between equal sites. He isn’t sure whether its importance as a ranking factor will be increased.

- [20:50] – What are some of the biggest mistakes that Patrick sees with HTTPS migrations?

- [23:43] – Patrick talks about what content delivery networks (CDNs) he comes across most frequently, and which are his favorites.

- [26:23] – Patrick defines service workers for marketers who are listening and may be unfamiliar with some of the geekier SEO terms. He then explains the benefits of minifying JavaScript.

- [28:49] – What are some of Patrick’s favorite features within the Chrome Developer Tools?

- [33:05] – Stephan talks about the painful procedure that was involved in removing an SEO CDN, and talks about his invention, GravityStream.

- [34:47] – Now that we’ve covered HTTPS, Patrick moves on to talking about HTTP2. Its main benefit is that it makes things a lot faster.

- [37:28] – Patrick and Stephan talk about server response headers, and Patrick offers some tips and tricks.

- [42:58] – We hear about Patrick’s use of Ryte.

- [44:04] – Patrick elaborates on frameworks in SEO, discussing specifically React and AngularJS.

- [46:57] – Stephan asks Patrick a question: what would be his SEO advice and best practice suggestions to a listener who is using infinite scrolling?

- [48:12] – Are there any other server response header or redirect tips that Patrick wants to share with listeners?

- [49:54] – Patrick and Stephan talk about Google’s SEO best practice document, and discuss whether H1 tags have an effect.

- [52:57] – Stephan explains that he is very outcome-focused rather than activity-focused like most other SEOs.

- [54:56] – What’s an example of one of the scripts and tools that Patrick uses to automate and scale across the 50 million pages at IBM?

- [57:25] – Patrick is pretty picky about side projects, but if you have a difficult problem that you can’t quite figure out, he may be able to help. He directs listeners on where to find him.

—

Transcript

I hope you’re ready because we’re going to geek out on some technical SEO. You might think oh no, I’m just a marketer, I don’t want to geek out. Stick around because this is a very valuable episode. For example, we’re going to be talking about migrating to HTTPS. If you haven’t already, you need to be on HTTPS. Staying on HTTP is just not worth the Chrome error messages that users are going to get. Inaction is not an option. We also are going to be talking about HTTP/2, which is the evolution of HTTP. You’re probably running on the old version, and your website is running a lot slower than it should be because of that. You’re going to need to know about that and other page speed optimizations, things like minifying JavaScript and CSS, stuff that you may not do yourself but you need to ask your developer to do for you. This is all really valuable information, and the person who’s going to walk us through all this is Patrick Stox. He’s the technical SEO for IBM, he writes for many search blogs, he speaks at many conferences. He just spoke at Pubcon, which I also spoke at, and we sat down at Pubcon and geeked out about all this technical SEO goodness. That’s what this episode’s all about. Patrick is an organizer of the Raleigh SEO Meetup, which is the most successful in the US, and he was the founder of the Technical SEO Slack group. Patrick, it’s great to have you on the show.

Hey, thank you, it’s great to be here.

Let’s geek out, but before we do, let’s set the stage so we’re not scaring away all the non-technical marketers because they’re going to need to listen to this too so they know what to ask for from their developers, their systems administrators, their technical SEO people. This is critical stuff. If you don’t get the foundational stuff right, you don’t have a platform to build upon and your SEO is going to suck. One of the things that you do at IBM is you figure out ways to scale across a very large website. You’re always looking for ways to shortcut things but in a good way, not shortcut as in getting stuff that’s not perfect but ways to scale so you don’t have to manually touch hundreds of thousands or millions of pages. How big is the IBM website?

That’s a good question. I would say north of 50 million pages, it’s hard to get a good count.

Holy cow, that’s huge.

There’s a lot of different subdomains that we don’t have a lot of insight into so it’s hard to say exactly how big it is.

Wow. 50 million pages, give or take millions, what are some of the big issues when maintaining a site like that from an SEO perspective? Are you having to do a lot of migrations, or are you having to just maintain things, redirects, and so forth? What are you up to?

All of the above. It’s a really complex system where there’s a lot of infrastructures, most people don’t have to deal with more than one. There’s really a ton of different CMS systems, JavaScript frameworks. I think I’ve counted 22 different CMS systems and a lot of those have multiple installs. A lot of the times, it’s getting things to work together. We have a corporate redirect engine that works with most of the systems, not all. There’s a lot of middleware to try and get systems to talk to each other. If we make a change in one, it can be across multiple systems.

Right. Just simple stuff like the order in which you call different JavaScript and CSS files within an HTML page can really drastically affect the page load speed, which then affects SEO and conversion. When you say middleware, you’re talking about things that connect different systems together. For example, I connect up GoToMeeting with InfusionSoft using PlusThis. If I didn’t have that middleware solution, I wouldn’t be able to communicate between those two software packages.

Yeah, like imagine for hreflang tags for instance, trying to get that to work across multiple CMS systems, it’s hard enough to get right in one system. People screw it up all the time. Trying to get it across multiple, you can either try a manual approach, that will never work, or set up some middleware so the systems can talk to each other. If you make a change in one, it rolls out to the other systems.

We need to define hreflang for our listeners who are not familiar with it. The purpose of that is for internationalization. Why don’t you describe why you’d want to have hreflang tags in place on your website?

For the most part, it’s to make sure that the language version, or if you have a specific country language version shows in the right geolocation.

French Canadian versus French French.

Right. French Canadian is always a hot topic because they’re legally required to have that one.

Yeah. If you get it wrong, you’ll end up with the wrong version getting served up or maybe Google could get confused and see some of these different country versions as duplicate content.

Yeah, it’s always fun when that happens if you don’t actually localize them. Google basically folds the pages together and only one of them will really be in the index. You might be routing people to the wrong country, just one way you can route people to the wrong country.
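To make the hreflang discussion concrete, here is a minimal Python sketch of generating the tags for one page. The function name `hreflang_links` and the example.com URLs are hypothetical; the only real convention shown is the `<link rel="alternate" hreflang="...">` markup, where each language or country version lists every alternate, itself included.

```python
from html import escape


def hreflang_links(alternates, x_default=None):
    """Build <link rel="alternate" hreflang=...> tags for one page.

    `alternates` maps a language or language-region code to the URL of
    that version. Every version of the page should carry the same full
    set of tags, including a tag pointing at itself.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in sorted(alternates.items())
    ]
    if x_default:
        # Optional fallback for visitors who match none of the listed versions.
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />'
        )
    return tags


# Hypothetical URLs, for illustration only.
pages = {
    "fr-ca": "https://example.com/ca-fr/",
    "fr-fr": "https://example.com/fr-fr/",
    "en-us": "https://example.com/us-en/",
}

for tag in hreflang_links(pages, x_default="https://example.com/"):
    print(tag)
```

Generating the set from one shared mapping is one way to keep the tags consistent across versions, which is the part that tends to break when several systems each maintain their own copy.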

Right. There’s also targeting international, targeting that you can do inside of Google Search Console. Are you doing that as well or are you recommending that to our listeners?

I would definitely recommend that as a best practice. But in reality if you have a lot of country language versions and hreflang tags, it’s not going to help a lot, it’s still going to show wrong versions if pages get folded together. It’s actually quite common on our side, unfortunately, it causes a lot of issues.

You probably have to run scripts or tools across this very large website and all its subdomains on a constant basis to make sure that things are not breaking or people didn’t go in and undo some SEO goodness that you had created, right?

Yeah, it’s never enough. We do run a lot of automated testing, lots and lots of crawls. We have lots of change monitoring systems because things aren’t communicated as well as they could be usually.

You’re crawling your very large site and various subdomains of it using tools like what? Screaming Frog, Ryte, Botify, DeepCrawl. I use DeepCrawl for a lot of my client stuff. What are your preferred tools for crawling?

Pretty much all of the above. DeepCrawl for instance we use a lot but it breaks down on the JavaScript frameworks today. I think they’re adding JavaScript, the ability to crawl JavaScript, but they still don’t have that. We have a lot of dynamically generated systems like our support center, we have a few different things like our marketplaces in React JS. It can handle React okay as long as it’s done well, but certain parts of our site are not. There’s another part that was built with Meteor JS, which is a Node JS framework. That, I don’t think, should ever be a website but they did it anyway.

Yeah, trying to rein in people building these websites can be pretty hard when it’s built in a very search engine unfriendly way. I use an analogy to convey to prospects and clients the danger of hiring somebody who doesn’t understand SEO to build your website and not having an SEO involved in the process. It’s like building a house and then realizing after the fact that you didn’t specify “I need electrical wiring” in the house and then you have to tear out the drywall, wire the electrical in so that you can turn the lights on, and patch up the drywall. It’s very expensive, and a very stupid mistake too. That happens all the time in regards to SEO and site rebuilds or new site builds, it’s a real mess.

We try to get in front of it and we have lots of standards, best practices. There’s 10,000 different developers for IBM, so that makes it a little hard to get to everyone. With churn and everything, there’s no way that you’re in front of everyone you need to be.

Right, so there must be a whole raft of guidelines, documents on best practices, and things to avoid.

Technical trainings, webinars, educational materials. We go speak and educate, but it’s still such a large organization. A lot of times, people will talk, “Hey, go buy your dev pizza.” I can’t buy 10,000 devs pizza or beer.

Yeah, got it. What sort of documents do you use in order to get everybody on the same page? I’m sure it doesn’t work across 10,000 developers because not everybody reads everything or watches every training video, but do you have the equivalent of branding guidelines or style guides for SEO?

Yeah, we have a lot of best practice documentation. Obviously, IBM will have their own style guides and branding guidelines as well for the content folks. We have probably too much documentation, maybe that’s part of the issue. There’s so many rules, how can you remember everything?

I remember something Bill Hunt said. Bill is a fantastic SEO who also helped IBM out for a long time, and he was also a previous guest on this podcast. Great episode, listeners, by the way, the Bill Hunt episode if you want to geek out some more on SEO. He described having these huge binders full of monthly reports that took 12 hours to create, human labor, 12 hours for a very talented SEO such as himself to create, and then nobody would read them. And then he would start to test this theory by inserting pictures of Mickey Mouse and stuff into the binders, into the reports. For 14 months, nobody would comment on this until one new person finally said, “What are these Mickey Mouse things?”

That’s great, I haven’t heard that before.

Yeah, really funny. He said one of the solutions to get the upper management to consume the information is to find three big wins, three challenges, and three important actions to take and to put that into a three-minute video. Pretty genius, right? Then, he actually heard upper management talking about, parroting back some of these key initiatives. “Okay, we really need to move on this project because it’s been stalled for six months,” or whatever. They would know that from the video. They have time to watch a three-minute video. These overwhelming documents just don’t get read by anybody.

Yeah, we try not to overwhelm with the documents. We’ve used pretty simple dashboarding. I know for a fact that execs do look at those in detail every week.

That’s awesome.

We try and do the wins also. That’s always great. It helps not only us but other teams. If someone was willing to work with us and get things done, changed, then it was a success, we give as much credit as we can to them because that makes them look good also and they’re more willing to work with us in the future.

Another person you probably know from IBM is Mike Moran, he worked there for many, many years, really great guy, co-author with Bill Hunt of Search Engine Marketing, Inc. He said that he would create these dashboards and he called it management by embarrassment because the dashboards would have all this red if the business unit owner did not follow through on the SEO initiatives. They would complain to the developers or whatever, “Just make these red boxes go away because I’m sick of hearing this month after month in front of my peers at the monthly meeting.” I love that, management by embarrassment. Have you heard that one before?

No. Mike still consults with us but that’s still kind of a thing. There’s what we call top charts and basically it’s like here’s how well each business unit is doing compared to the others. It’s not really a fair comparison if you think about it, something like Cloud is a lot different than analytics or services or something. It’s not great but it gets the point across, you’re right.

Yeah. Let’s geek out a bit on things like HTTP/2, which probably a lot of our listeners don’t even know the meaning of. They should care, they should care about page speed, they should care about upgrading their systems to PHP7, the latest versions of their server software if they’re running Nginx or HTTP server. They need to know what to ask their systems administrators and developers. Let’s start with HTTPS. You’re a big proponent of that, you’ve written about this multiple times, I think you have a Search Engine Journal article about HTTPS, is that right?

Search Engine Land, yeah.

Did you write for Search Engine Journal as well?

I think I wrote maybe something about HTTP/2 for them.

That’s what I’m thinking of. HTTP/2 article on Search Engine Journal and the HTTPS one on Search Engine Land. Let’s start with HTTPS because there’s a recent development where it’s not really an SEO development but it really freaks people out now when they’re getting these messages, security messages in the Google Chrome browser that the site’s not secure. Let’s talk about that because that can cause somebody to bounce out of your website, right?

Yeah, absolutely. There’s a lot of scary stuff with HTTPS that people don’t realize. It’s not really securing your website like people think, it’s all about the trust of the connection. That’s huge. It’s not going to prevent your website from being hacked or anything like that, but it can prevent things like content injection. You see it a lot in hotels, airlines. If you’re using their wifi, you might see ads on websites that don’t have ads. That may not seem like a big deal to people, they’re like it’s just an ad, I ignore it anyway. What happens if they change the content on that website? We’re in a highly political environment right now. What if companies decide that they want to change people’s perception and they could influence a presidential campaign for instance, that would be a huge deal. People using their connections at airports, or if your home ISP wanted to, they can do this. I’ve seen it before, actually. My mom had an ad injected on Jennifer Slegg’s site, The SEM Post. I’m like there weren’t ads on that site at the time. It was just to get a discount on blah, blah, blah. It was directly from her ISP. The ads don’t really scare people, like I say. But if they do change content, they have full control over that page. They can inject new paragraphs, they can change the message. They could censor content, which is really scary if you think about it.

Right, but there’s also bad actors out there that could hijack the connection and ask for you to log in again, get your password, and then empty your bank account. That’s if you’ve already secured your site, that’s still a risk, secured it with HTTPS, it’s not fully secured. It’s just the HTTPS protocol or SSL. Let’s first start with if you don’t have HTTPS at all, you should switch to HTTPS, right?

Absolutely, yes.

What are the reasons why you need to go from HTTP to HTTPS? The majority of websites on the internet are still on HTTP. Up until just recently, my regular personal blog was on HTTP, I just recently switched to HTTPS. stephanspencer.com is now HTTPS. Why should people’s personal blogs and their brochureware websites that don’t have any ecommerce or any kind of need for credit card numbers to be inputted or any kind of secure information to be inputted, why do they need to switch to HTTPS?

I’m going to use your blog as an example. Would you care about the numbers on your blog for traffic and where that traffic is coming from?

Of course.

That is one of the main arguments I use for people to switch. There’s all this rise of dark traffic, dark social, blah, blah, blah, not seeing the referrer. The referrer gets dropped if you go from an HTTPS site to an HTTP site. If the site that the person came from is on HTTPS and you’re not, you can’t really see where they came from. It shows in Google Analytics for instance as direct, other analytics platforms actually show it as no referrer. Those numbers are important, you want to know where people came from.

Yeah. Essentially, you’re removing optics, the optics that you have around where your traffic is coming from is now gone if the traffic is coming from HTTPS sites, secure sites, to your insecure HTTP site.

Absolutely.
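The referrer behavior described here can be sketched in a few lines of Python. This is a simplified model of the browser policy known as `no-referrer-when-downgrade`, not browser code: the function name `referrer_sent` and the example URLs are made up for illustration, and real browsers also trim parts of the URL before sending it.

```python
from urllib.parse import urlsplit


def referrer_sent(from_url, to_url):
    """Return the referrer a browser would pass along when a visitor
    clicks from `from_url` to `to_url`, or None if it is withheld.

    Simplified model of the 'no-referrer-when-downgrade' policy:
    the referrer is dropped only when going from HTTPS to non-HTTPS.
    """
    source = urlsplit(from_url)
    destination = urlsplit(to_url)
    if source.scheme == "https" and destination.scheme != "https":
        return None  # downgrade: analytics will see this visit as "direct"
    return from_url


# Hypothetical URLs, for illustration only.
print(referrer_sent("https://news.example/post", "http://blog.example/"))
print(referrer_sent("https://news.example/post", "https://blog.example/"))
```

The first call models an HTTPS site linking to an HTTP blog, where the source is lost; the second models the same link after the blog has moved to HTTPS.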

Alright, that’s a very important reason. What about the scary messages that you get that you’re entering a site that’s not secure?

That’s new and I don’t know that people are scared of that yet.

Okay.

It’s still gray and not very noticeable, but they’re going to put a big, red symbol up there soon, I think early next year.

This is something where it’s going to get more and more alarming to just regular web users who are using certain browsers, I use Safari and I’m pretty sure they’re going to be rolling out changes there too to make it more alarming to users that, hey, you’re entering an unsecure website now. There’s user considerations, we want to make sure people are not freaked out, there are analytics considerations as we just discussed. What about SEO considerations? HTTPS is something that Google has been advising websites to migrate to for years now.

Yeah. It is a ranking factor, a small one.

Yeah. Small one. It doesn’t really move the needle if you move from HTTP to HTTPS.

Yeah. The way they describe it is more of a tie breaker.

Yeah. It’s very minor signal and yet I would imagine that it’s going to get dialed up over time, that’s not going to decrease in importance, as a ranking signal it’s going to increase, right?

Yeah. I’m not sure if they’ll increase it or not. I think the stuff that the Chrome Browser Team is doing is going to be scary enough to make people switch. Maybe the search team doesn’t need to increase that as a ranking factor.

Okay. Honestly, we’re all conjecturing here. We don’t know what Google engineers are planning. In fact if an SEO tells you that this is coming down the pipe, that Google’s going to implement it, turn and run. They’re either just parroting something that has been posted on, let’s say, the Google Webmaster Central blog, and that’s okay as long as they’ve cited it, like “according to this comment from John Mueller at Google” or whatever, then that’s fine. But if they’re conjecturing and they’re not saying this is conjecture, turn and run because an SEO who thinks they know what Google’s going to be doing, that’s a dangerous thing. HTTPS, really important. When you migrate from HTTP to HTTPS, you can create a whole lot of havoc if you don’t do it right. If you’re not thinking through the different SEO issues that you could end up creating inadvertently, that will create some havoc. What are some of the biggest mistakes that you see with HTTPS migrations?

It’s probably coming from common SEO recommendations to not switch to HTTPS until you’re doing something else, like a full site redesign or migration or something else. You add more moving parts into the scenario and things are going to go wrong. Straight HTTP to HTTPS is pretty simple, it depends a lot on the system and that kind of thing. I don’t want to say it’s simple always because it was not simple on IBM. We just did that in early April finally, but that was after planning for well over a year and a half just to get that done, and it’s still not fully migrated over, so many different systems. For the most part, there’s a few things you might have to do in your CMS or server level and then do some redirects and that’s about it. As long as you don’t screw up those redirects and that’s the only thing you did, you’re not going to see much of a difference.
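As a rough sketch of what “do some redirects” means for a straight HTTP to HTTPS move, the Python below maps each HTTP URL to the same host, path, and query on HTTPS in a single hop. The function name `https_redirect` is hypothetical; in practice this rule usually lives in the web server or CDN configuration and is served as a 301.

```python
from urllib.parse import urlsplit, urlunsplit


def https_redirect(url):
    """Return the HTTPS URL that a plain-HTTP request should be
    301-redirected to, or None if the URL is already on HTTPS.

    The host, path, and query string are preserved, so every old URL
    maps to exactly one new URL in a single hop.
    """
    parts = urlsplit(url)
    if parts.scheme == "https":
        return None
    return urlunsplit(("https", parts.netloc, parts.path or "/", parts.query, ""))


# Hypothetical URLs, for illustration only.
print(https_redirect("http://example.com/products?id=7"))
print(https_redirect("https://example.com/products?id=7"))
```

Keeping the mapping one-to-one is the point: sending every old URL to the home page, or chaining several hops, is the kind of redirect mistake that costs traffic.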

Redirects are going to be critical. What about going through and revising all the links on your site? If you’re using absolute links that are HTTP, switching those to absolute HTTPS links.

Obviously I would do that but you can usually do that in bulk. There’s a lot of plugins for instance. For a lot of people on WordPress, there’s Velvet Blues Update URLs, which allows you to update all the URLs in bulk. I would be more concerned with images and resources pulled through JavaScript and CSS, but there’s something really cool most people don’t know about for that, it’s called Content Security Policy. You can set that at the server or at your CDN level to say upgrade insecure requests.

Okay. Tell me more about that. What happens when you do that?

Any image on your entire website that is linked insecurely with HTTP will automatically be rewritten and upgraded before the user sees it.

Oh, nice. If you don’t do that? Let’s say an image loads or tries to load from HTTP because you didn’t switch anything over and the page is on HTTPS, what happens?

Then you’re going to get a warning, you’re not fully secure.

That’s not good. The user’s going to see that error message and they’re going to freak out a little bit, maybe.

Right.
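Two things from this exchange can be sketched in Python: spotting resources that would trigger the “not fully secure” warning, and sending the Content Security Policy directive `upgrade-insecure-requests`, which tells the browser to request `http://` resources over `https://` instead. The names `MixedContentFinder`, `find_mixed_content`, and `add_upgrade_header` are hypothetical, and the finder only looks at a handful of tags in the raw HTML, not at resources loaded later by scripts or CSS.

```python
from html.parser import HTMLParser

# The real header name and directive; everything else here is illustrative.
CSP_HEADER = ("Content-Security-Policy", "upgrade-insecure-requests")


class MixedContentFinder(HTMLParser):
    """Collect images, scripts, iframes, and stylesheets loaded over http://."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("img", "script", "iframe"):
            url = attrs.get("src")
        elif tag == "link" and "stylesheet" in (attrs.get("rel") or "").lower():
            url = attrs.get("href")
        else:
            url = None  # ordinary <a href> links do not cause mixed content
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(html):
    """Return (tag, url) pairs for insecure resources found in `html`."""
    finder = MixedContentFinder()
    finder.feed(html)
    finder.close()
    return finder.insecure


def add_upgrade_header(app):
    """Wrap a WSGI app so every response carries the CSP directive."""

    def wrapped(environ, start_response):
        def start(status, headers, exc_info=None):
            return start_response(status, list(headers) + [CSP_HEADER], exc_info)

        return app(environ, start)

    return wrapped


page = '<img src="http://example.com/logo.png"><a href="http://example.com/">home</a>'
print(find_mixed_content(page))
```

The header is a safety net rather than a substitute for cleaning up the links: it only helps when the same resource is actually available over HTTPS.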

Okay. You mentioned CDN. Content Delivery Networks, would Cloudflare be considered as CDN in your mind?

Yeah, absolutely.

Okay. What CDNs do you come across most? There’s Akamai, there’s MaxCDN and Cloudflare and so forth, what are the ones you’re most commonly addressing and which ones are your favorites?

There are so many. The common ones probably are Akamai and Cloudflare at this point. There’s a few others that you see with different things, like Fastly with a lot of JavaScript stuff because they compile a lot of the stuff for you. Cloudflare is probably one of my favorites and I probably shouldn’t say that because we actually use Akamai at IBM. Cloudflare does a lot of really cool stuff for you. You mentioned the upgrade from HTTP to HTTPS, there’s a button for that, literally. I kind of hate this but I kind of love it also. They have what they call flexible SSL, which makes the connection from Cloudflare to the user secure. You don’t actually have to put that certificate on your server at that point. It still looks secure to everyone out there. It’s not end-to-end secure, but for someone’s personal blog, you can do that, set a couple page rules and be done in five minutes.

You don’t even need your own SSL Certificate for your website, you can just use Cloudflare’s.

They actually do give you one and if you want to install it, I would recommend that but the flexible SSL will also pass all the sniff tests if you will, and it’s a super easy setup for personal blogs, sure why not. If you’re not taking anyone’s secure details or contact information or anything, do it.

Wow, cool. Alright. What are the benefits from an SEO perspective of using a CDN, a Content Delivery Network that will speed up your images and things loading because they’re coming from a more nearby data center and a more robust set of servers and connection and all that?

I think you hit it already, speed. Speed is the key. But they do so much other cool stuff too. A lot of times they minify code or combine JavaScript and CSS files. There’s really a lot of different things you can do. One of my personal favorites that I don’t think is used enough is actually just offloading your redirects to the CDN level. Rather than having your server process and read through files, you can cache those on the edge. It makes processing that a lot faster. Cloudflare actually released service workers, which let you run JavaScript on the edge now. It’s just really cool. Cloudflare Workers, I think they call it.

Remember we have some marketers on the show listening who are not familiar with some of the super geeky stuff like service workers, can you define that for our listeners?

It basically lets you run JavaScript to do anything. A lot of times SEOs might recommend something like Google Tag Manager to inject some code. That means tag manager has to load on the page and then rewrite things there. If it happens at the edge, with Cloudflare for instance, you’re rewriting before the user ever sees it. You can run all sorts of cool scripts and stuff on that too.

Very cool, alright. You mentioned minifying JavaScript and combining JavaScript files and so forth together. Let’s explain what that means because minifying, we know what that means, you’re basically removing a lot of the unnecessary bloat in the code, you’re abbreviating the JavaScript or you can do this with CSS as well, a cascading style sheet. What’s the benefit? Is it a big benefit from a page speed perspective? And is that something our listeners should start with or have their developers start with to do some pagespeed optimizations?

It’s absolutely huge for page speed. One of the reasons websites are a lot slower to me than they used to be is because we’ve bloated them, we have 10 different style sheets, 20 different JavaScript files. Each one of those is a request back and forth, it’s a handshake with the server to request that data. If you can reduce that to one, even though it’s maybe still even the same size, it’s going to get you a lot faster because it’s not having to take the round trip time to request all the files.
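Minifying and combining can be illustrated with a deliberately naive Python sketch. The functions `minify_css` and `bundle` are hypothetical and only strip comments and whitespace; they would mangle CSS that contains spaces inside quoted strings, so a real build tool or CDN feature should do this job in production.

```python
import re


def minify_css(css):
    """Very naive CSS minifier: drop comments and unneeded whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.S)  # remove /* comments */
    css = re.sub(r"\s+", " ", css)                   # collapse runs of whitespace
    css = re.sub(r"\s*([{};])\s*", r"\1", css)       # trim around braces and semicolons
    return css.strip()


def bundle(stylesheets):
    """Combine several stylesheets into one minified payload,
    so the browser makes one request instead of one per file."""
    return minify_css("\n".join(stylesheets))


# Hypothetical stylesheets, for illustration only.
files = [
    "a {\n  color: red; /* brand color */\n}\n",
    "b {\n  margin: 0;\n}\n",
]
combined = bundle(files)
print(len(files), "requests become 1")
print(sum(len(f) for f in files), "characters become", len(combined))
print(combined)
```

The byte savings from minifying are usually modest; as described above, the bigger win in this scenario comes from cutting the number of round trips.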

You can see what’s happening in terms of all the back and forth and the waiting for one thing to finish before the next thing loads, that’s called the waterfall graph. What are some of your favorite tools for checking the waterfall?

Just Chrome DevTools or webpagetest.org.

Yes. Chrome has a built in tool for checking page speed stuff and doing a lot of different kinds of analysis, looking at the server response headers, whether it’s a 301 or a 302, looking at if there’s a redirect chain, you can see all that inside of Chrome. Which is a browser most of our listeners are probably already using, they just haven’t started exploring the developer tools section inside of there. What are some of your favorite features inside of DevTools, inside of Developer Tools?

For me, I’m usually troubleshooting. You mentioned the header response, that one’s huge. Also just being able to see where you got redirected to, you can actually stop the page from being redirected, turning off JavaScript obviously to make sure it works for people that have that disabled, which a lot of people do because of ad blockers and that kind of thing now.

Yup.
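What the DevTools network panel shows for a redirect chain can be modeled with a small Python sketch. To keep it runnable without a network connection, `redirect_chain` walks a made-up table of responses (`sample_responses`) instead of making real requests; both names and all URLs are hypothetical.

```python
REDIRECT_STATUSES = {301, 302, 303, 307, 308}


def redirect_chain(start_url, responses, max_hops=10):
    """Follow redirects through `responses`, a dict mapping
    url -> (status, location), and return the (url, status) hops.

    Unknown URLs are treated as 404. Raises ValueError on a loop.
    """
    chain = []
    seen = set()
    url = start_url
    while url is not None and len(chain) < max_hops:
        if url in seen:
            raise ValueError(f"redirect loop at {url}")
        seen.add(url)
        status, location = responses.get(url, (404, None))
        chain.append((url, status))
        url = location if status in REDIRECT_STATUSES else None
    return chain


# Hypothetical responses: a 301, then a 302, then the final page.
sample_responses = {
    "http://example.com/": (301, "https://example.com/"),
    "https://example.com/": (302, "https://www.example.com/"),
    "https://www.example.com/": (200, None),
}

for hop_url, hop_status in redirect_chain("http://example.com/", sample_responses):
    print(hop_status, hop_url)
```

A chain like this one, with a temporary 302 in the middle and two hops before the final page, is the sort of thing worth flattening into a single 301.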

There’s really just so much in there. Being able to read the DOM is the big one. The DOM is the Document Object Model. Everyone thinks when you view the source, that’s the HTML, that’s what’s on the page. It is and it isn’t. That’s the code, but the processed code goes into the Document Object Model. And being able to check that if you use Inspect, Inspect Element, is really huge because things can happen, like the head section can break, throwing a canonical tag into the body and it won’t work there. That happens all the time, people don’t realize that but a lot of times a script in the head breaks or any number of things really can cause it. Being able to actually look at the DOM will tell you what happened.
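The broken-head problem can be approximated in Python. This sketch assumes a simplified version of how browsers build the DOM: once an element that is not allowed in `<head>` shows up, the head is treated as closed and everything after it lands in the body. The class `CanonicalLocator` and function `locate_canonical` are hypothetical, and Inspect Element in a real browser remains the authoritative check.

```python
from html.parser import HTMLParser

# Elements a browser accepts inside <head>; anything else implicitly ends it.
HEAD_ELEMENTS = {"base", "link", "meta", "noscript", "script", "style", "template", "title"}


class CanonicalLocator(HTMLParser):
    """Report whether the first canonical link would end up in head or body."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.head_done = False
        self.location = None  # "head", "body", or None if no canonical found

    def handle_starttag(self, tag, attrs):
        if tag == "html":
            return
        if tag == "head" and not self.head_done:
            self.in_head = True
            return
        if self.in_head and tag not in HEAD_ELEMENTS:
            # A stray <div>, <img>, etc. closes the head early.
            self.in_head, self.head_done = False, True
        if tag == "link" and self.location is None:
            rel = (dict(attrs).get("rel") or "").lower().split()
            if "canonical" in rel:
                self.location = "head" if self.in_head else "body"

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head, self.head_done = False, True


def locate_canonical(html):
    locator = CanonicalLocator()
    locator.feed(html)
    locator.close()
    return locator.location


# Hypothetical page where an injected <div> breaks the head.
broken = (
    "<html><head><title>t</title><div>widget</div>"
    '<link rel="canonical" href="https://example.com/"></head>'
    "<body></body></html>"
)
print(locate_canonical(broken))
```

In the raw source the canonical tag sits before `</head>`, which is why viewing the source alone does not reveal the problem.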

Right. Back in the day, when I was doing a bit of coding myself, I wrote a reverse-proxy-based software as a service SEO platform. In the prototype, I’d used proxy-based rewrite rules on our client’s web server to then call on our web server to do all this SEO goodness. We would rewrite URLs, we’d do all sorts of cool stuff that could take a year or two for them to implement if they were just going through the regular dev processes. There’s so much bloat and red tape, bureaucracy in these large corporations. We did an end run around all that. In the first version that I wrote, the prototype, it just used regular expression pattern matching and a search and replace process on the HTML, but then we got more sophisticated. I gave it to my developers and then we processed the DOM, the Document Object Model, and we got even more sophisticated in the kinds of things we could accomplish for SEO without involving the developers over at our client’s side. That was really cool, in fact that was a big reason why my previous agency got acquired. We actually grew that side of our business to the majority of our revenue. It was on a pay per performance basis. So cost per click SEO, $0.15 a click and it was crazy how much money we were making off of that. An order of magnitude more in SEO revenue than we would have made just doing SEO consulting. Pretty cool.

That’s awesome. You were really ahead of your time there because I feel like those types of systems are just now becoming more popular. [00:31:52] is one most people know but they pitched that weird, it’s like an AB testing platform but it basically gives you full control of the website delivery before it’s delivered.

Essentially a CDN with SEO capabilities.

Or it sits in between the CDN, yeah it could act as a CDN also depending on the system. I think RankSense is another one that can be [00:32:13].

Yeah. That’s Hamlet Batista’s platform, yup. RankSense is a CDN with SEO capabilities, for sure.

Even now, it really is cutting edge. It solves a lot of problems with dev cycles. Being able to have that level of control is unreal, but it’s also scary. I think that’s maybe why they pitch it differently than I would: they probably don’t want to say, “Hey, you’re giving your marketer full control of your website instead of your devs.” It’s kind of a patch, too. What happens when that gets removed? Then there are going to be issues. I don’t know if anyone’s really added permissions, like only this person can change this one thing, that kind of thing. Those are the kinds of issues those platforms need to solve to get better adoption.

It really was a painful procedure to remove an SEO CDN. With my platform, GravityStream, we did have an easy-out option, but we charged for it. We would set up all the redirects from the optimized URLs that were in Google to their native pages, which didn’t have any of the SEO. If they turned off our platform, turned off GravityStream, they’d lose all the SEO goodness, but at least if they went with this friendly advanced option for removal, all the redirects would be mapped and they wouldn’t lose any traffic. They wouldn’t have to handle the redirects all on their own. Then there were other platforms that weren’t so friendly about how you’d remove them. You’d feel like you were held hostage, essentially, because if you turned that off, all the SEO goes away and you go from top of page one for a bunch of keywords to who knows where.

I wonder how some of the others handle that. I think [00:34:13] just launched a new one too, un-something but I can’t remember the name of that.

That was 14 years ago that I invented GravityStream and now it’s becoming a thing.

That’s incredible.

Yeah, alright. HTTPS we talked about; let’s talk about HTTP/2, because this is something that a lot of our listeners are probably unfamiliar with. They’re running on HTTP/1.1 and don’t even know it, and they should be on HTTP/2. Let’s talk about that.

Yeah. It’s basically a new internet protocol that makes everything a lot faster, that’s the main benefit. To get that benefit, one of the things is you have to go to HTTPS, right?

Yup. What happens when you move to HTTP/2, as far as what can load in parallel and so forth. Let’s geek out a little bit about why it’s so much faster being on HTTP/2.

Yeah. With HTTP/1, it’s a lot of round-trip requests. With HTTP/2, it’s like, let’s make a connection and now give me everything, rather than requesting each one and closing that connection, requesting the next one, closing the connection. It’s like, request the connection, now give me the files.

Cool. Do you see many sites on HTTP/2 or is it still very uncommon?

I think it’s becoming a lot more common. Cloudflare really probably tripled the number of people on HTTP/2 last year, I think about this time, when they upgraded everyone on their CDN to HTTPS. They went to HTTP/2 and did a lot of different TLS optimizations too, which has made things really a lot faster.

Cool. Are there any gotchas or potential landmines if you’re migrating to HTTP/2?

Not really because there are fall backs. If a browser of a user for whatever reason doesn’t support HTTP/2, it’ll just load things like it was HTTP/1.
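The fallback Patrick describes happens during the TLS handshake: the client offers both protocols through ALPN and the server picks one. The following Python sketch uses the standard `ssl` module to see which protocol a server selects. The function names are hypothetical, and the host you pass in is up to you.

```python
import socket
import ssl


def negotiated_protocol(host, port=443, timeout=5.0):
    """Open a TLS connection and return the ALPN protocol the server chose.

    Returns "h2" for HTTP/2, "http/1.1" for HTTP/1.1, or None when the
    server did not negotiate ALPN at all.
    """
    context = ssl.create_default_context()
    # Offer HTTP/2 first, with HTTP/1.1 as the fallback.
    context.set_alpn_protocols(["h2", "http/1.1"])
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()


def describe(protocol):
    """Turn an ALPN result into a human-readable label."""
    if protocol == "h2":
        return "HTTP/2"
    return "HTTP/1.1 (fallback)"
```

Calling `describe(negotiated_protocol("example.com"))` against your own hostname gives a quick answer to "are we on HTTP/2 yet?" without opening a browser.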

Alright, cool. That’s definitely a must for our listeners: get that going, and get that great Search Engine Journal article about HTTP.

They can just go to Search Engine Land and Search Engine Journal.

Okay, so you did a little repurposing.

I’m a big proponent of that.

Okay, cool. When you migrate to the latest CMS version, let’s say that you’re running WordPress or whatever, you should be doing automated constant upgrades. Whenever a new release comes out, you should be installing it. Otherwise you’re opening yourself up to hackers.

WordPress usually handles that stuff for you. If you’re on them, you’re usually pretty well off.

Let’s say that you’re running an old version of PHP and you’re on the latest WordPress, that’s an optimization opportunity if you upgrade to PHP 7, right?

It is, but there are still tons of websites out there that are on PHP 4, PHP 5.

Let’s talk about server response headers again, because there’s some really cool things that you can do with server response headers and some things that are going to be important for SEO purposes. There are cases for example when you’re going to want to use the Vary header. That’s something most people don’t even know exists as a server response header. Have you used the Vary header before?

Yeah. That’s for dynamic serving websites. Actually, Alec Bertram from DeepCrawl sent me some great research that they did before a presentation I did earlier this year at SMX Advanced, when I was talking about the mobile-first index moving to mobile. I think it was something like 96% of the websites that have a dynamically served website don’t use the Vary header correctly.

Wow.

It was an incredible number and really shocking and eye opening.

Wow. Whenever you’re dynamic serving for mobile, meaning that you have a different HTML version for mobile on the same URL, the HTML is different; it varies. You should be sending a Vary header with your server response headers.

Yeah, absolutely.
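For dynamic serving, the header in question is `Vary: User-Agent`. Here is a minimal Python sketch of a check you could run against response headers you have already collected. The function name is hypothetical, and it assumes the headers arrive as a plain dictionary.

```python
def varies_on_user_agent(headers):
    """Return True if the response declares that it varies by User-Agent.

    `headers` is a dict of response header names to values. Header names
    are compared case-insensitively, and Vary may list several fields
    separated by commas.
    """
    vary_value = ""
    for name, value in headers.items():
        if name.lower() == "vary":
            vary_value = value
    fields = {field.strip().lower() for field in vary_value.split(",")}
    return "user-agent" in fields
```

A dynamically served page that returns `False` here is the kind of case the DeepCrawl research was counting.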

Alright. In addition to the Vary header, what are some of the other server response header tricks and tips that you want to share?

There’s Content Security Policy, which we talked about a little bit already. Referrer Policy is another big one. It’s important for your site to show that it sent traffic elsewhere. I said earlier that you couldn’t really keep the referrer from an HTTPS to an HTTP site, but that’s not necessarily true. Referrer Policy will actually let you still send that. If you’re a directory, maybe Yellow Pages or something, and you need to show that you’re sending traffic to people’s websites, you can still do it that way. The redirects you mentioned are also super important. Jon Henshaw actually has a new proposal in or going in soon that I think is really cool. It’ll show what system fired that redirect, because it’s getting really complex. Did the CDN fire it, did some middleware system between the CDN and the server do it, did the server do it, did the CMS do it? Who fired that redirect? Sometimes that’s really difficult to figure out.

Jon Henshaw was at Raven Tools.

[00:39:29] new one.

Yeah. This is a tool that’s like a free plugin or is it a paid tool?

He’s putting in a standard proposal to say that if you do this redirect, you should identify your system, basically.

Got it. Okay, cool. That’s the Referrer Policy, which is different from the referrer itself. If you’re trying to track a person from one website to another, that’s something you’d show in your analytics.

Google, for instance, uses a referrer policy of origin. You don’t see the specific page on Google; it just shows, in that case, the domain. You’ll see that the traffic came from google.com. They went secure with their search, but that’s how you still see everything in your analytics for them.

Yeah. But you don’t see the keywords that were tracked.

No, because origin strips everything but the domain name. It strips any subpages or anything like that.
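To illustrate what the `origin` policy strips, here is a small Python sketch that computes the referrer a browser would send under three of the Referrer-Policy values. It covers only a subset of the policies and ignores edge cases such as credentials in the URL; the function name is hypothetical.

```python
from urllib.parse import urlsplit


def referrer_sent(page_url, policy):
    """Return the Referer value sent for a link clicked on `page_url`.

    Only three policies are modelled here, as a simplification:
    "no-referrer", "origin", and "unsafe-url".
    """
    if policy == "no-referrer":
        return None
    if policy == "origin":
        parts = urlsplit(page_url)
        # Scheme and host only: the path and query string are dropped,
        # which is why search keywords never reach your analytics.
        return f"{parts.scheme}://{parts.netloc}/"
    if policy == "unsafe-url":
        # Full URL, minus the fragment.
        return page_url.split("#", 1)[0]
    raise ValueError(f"policy not modelled in this sketch: {policy}")
```

With `origin`, a search results URL collapses to the bare domain, which matches what shows up in analytics as traffic from google.com with no keyword attached.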

That’s the issue that we all complained about as SEOs, for a long time the not provided going to 100% inside of Google Analytics and whatever analytics package, correct?

Yeah, absolutely. You can still get a lot of that data. I think it slips people’s minds that you can still get it in Google Search Console. I think a lot of people complain about the limit there, but there are plenty of tools, almost anything you want to use really, that will pull more than the standard data there. Search Analytics for Sheets, that one’s paid. You can do cool things like pull keywords for each page and make a quick pivot table and show that, and even Google Data Studio has an export. You can pull tens of thousands, hundreds of thousands of keywords in one shot and export them.

Right. There is a limit inside of Google Search Console when you just log in to the tool, which is an amazing free tool. All you listeners need to be signed up with Google Search Console so you get this data. Inside of the Search Analytics reports, you can get a maximum of a thousand keywords or rows, and you can only get a maximum of 90 days of data. Anything beyond that time period, you have no visibility into, unless you’re using a tool that’s accessing this Search Analytics data through APIs, getting that data, and then storing it over time. I use Rank Ranger, which integrates with Google Search Console through the Google API so that it stores all that wonderful data over time inside of Rank Ranger. Also, through the API, you can get much more than a thousand rows. Do you know what the limit is? At least 5000 rows, but I don’t recall.

It’s 5000 by default but they have a continuation function, so you can start at row 5001 and pull the next 5000 and do that indefinitely ‘til you have them all.
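The continuation idea can be sketched as a simple loop in Python. The Search Analytics query accepts `rowLimit` and `startRow` parameters; everything else below is an assumption for illustration, including the `query_page` callable, which stands in for whatever client code actually sends the request. Note that `startRow` is zero-based, so "row 5001" corresponds to a start row of 5000.

```python
def fetch_all_rows(query_page, page_size=5000):
    """Collect every row by requesting one page after another.

    `query_page(start_row, row_limit)` is a hypothetical callable that
    performs one API request and returns a list of rows. The page size
    defaults to the 5000 figure mentioned in the conversation.
    """
    rows = []
    start_row = 0
    while True:
        page = query_page(start_row, page_size)
        rows.extend(page)
        if len(page) < page_size:
            # A short (or empty) page means there is nothing left.
            return rows
        start_row += page_size
```

Injecting the request function keeps the paging logic separate from authentication and makes it easy to try out against fake data first.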

Wow. That’s nifty, that’s ninja. You’re good at this stuff. Alright, we have Rank Ranger and what was the other tool? The one for sheets?

Search Analytics for Sheets and Google Data Studio also. There are a lot of them that store that data. Ryte, from Marcus Tandler, does as well.

Yup, and you’re using that as one of your spidering tools for looking for SEO issues, right?

Yeah, yeah. I personally like their crawler a lot. They also have the nifty TF-IDF tool, which I think is getting an overhaul soon. Really looking forward to seeing what they do with their content tool there.

We actually geeked out on that with Marcus, the founder of Ryte, which was called OnPage.org previously. That was on an episode of Marketing Speak where we talked about TF-IDF and some of the capabilities of the Ryte tool. Let’s talk about JavaScript, because we briefly talked about it earlier in the episode and you mentioned some different frameworks like React. Some of these frameworks are more search engine friendly or search engine optimal than others. React, which is from Facebook, is actually more search engine friendly than the latest version of Angular, which was developed by Google. I find that totally ironic. Can you elaborate a bit on these frameworks and SEO?

Yeah. Angular 2 and Angular Universal took a lot of the same stuff that React was doing well. It’s similar, but React is probably the most search friendly, depending on how it’s done. By default, it’s not really; you can end up with things like messy URLs. They actually have a really cool thing called React Router, though, which basically lets you set URLs however you want. You can do a lot of the rendering with the framework itself. One of the complaints with single page apps like that is it takes a while on the first load. You’re loading the whole app, so the first page can be slow. After that it does really cool things. It does a diff on the DOM, so it can determine what it needs to change instead of rewriting the whole thing. It’ll say, “I need to change this stuff only.” It’s really fast on page two, page three, etc. There are also a lot of static versions of React, where the initial load is output as an HTML file. That works really, really well.

Cool. I remember there was a study that somebody did where they compared React, Angular 1, Angular 2, and I forget what else, in terms of SEO and how well data that was being pulled in through asynchronous means would get crawled and indexed. Would links get explored that were inside of AJAX in React or Angular 1 or Angular 2? Do you remember the study?

Was that the one from [00:45:33]?

I think so. It’s a good write-up. The bottom line was that he found Angular 2 to be less search engine friendly: harder for Googlebot to access the links and the content inside of it, and then to index that.

I’m not sure if that’s necessarily true. That might be a difference in the developers. Any of them can be really, really unfriendly or really, really friendly. You have to really look out for things. This is why the DOM is important. You can view source and say, hey, there’s not a link there. Maybe there is, but the DOM will tell you whether it loaded the link. Or you can monitor and see if it takes a click action or something before whatever it is gets loaded; if so, Google’s never going to see that. I say never; not right now, anyway. You have to be really careful with that kind of stuff. Any of them can be sort of friendly or sort of unfriendly. Vue is another one that’s becoming really popular right now, Vue.js, and they all are okay. I’m still not completely sold; they have their uses. I think WordPress is going to use React, actually, after their licensing issues were resolved. I think they’re going to use it for the content editor, for instance. They’re still not going to switch the whole system over, but maybe one day they will.

Yeah, okay. What would be your advice to somebody who’s listening who’s using infinite scrolling? They’re using AJAX, Asynchronous JavaScript and XML, to pull data from their website asynchronously, so it’s an infinite scroll sort of situation, just like you’re scrolling through your Facebook news feed. What would be your SEO advice for somebody who’s doing that? What are your best practice suggestions?

Yeah. You’ll probably just want to set page state, separate points and then basically treat the reload as a whole new state. That new state can be the page. Those are a little tricky, and I usually don’t recommend infinite scroll because I’ve rarely seen it done well.

If there’s no data, let’s say the first 20 items aren’t in the HTML and all of it’s being pulled asynchronously, they run the risk of none of that being available to the spider, to Googlebot, and thus not getting indexed.

Yeah, absolutely.

Cool, alright. Were there any other server response header type things or any redirect things that you wanted to share that’s ninja?

I think we covered a lot. There’s a lot of stuff in the headers. Redirects can really, really be tricky. Like I said earlier, finding out where they come from is hard, because they can come from so many different locations these days. Then there’s the firing order too. We had one on a React-based system. The redirects in single page apps are handled by the apps themselves, but someone decided that they were going to send a Location redirect, like an HTTP header redirect, and they left the location blank. Basically, Googlebot got redirected into nothingness before it ever saw the JavaScript redirect. You just have to be careful about that stuff; they’re not going to see anything at that point.
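The blank-location case is easy to catch automatically once you have the status code and headers from a crawl. Here is a minimal Python sketch; the function name and the exact wording of the messages are hypothetical.

```python
REDIRECT_STATUSES = {301, 302, 303, 307, 308}


def redirect_problem(status, headers):
    """Return a description of a broken redirect, or None if it looks fine.

    `headers` is a dict of response header names to values; names are
    matched case-insensitively.
    """
    if status not in REDIRECT_STATUSES:
        return None
    location = None
    for name, value in headers.items():
        if name.lower() == "location":
            location = value
    if location is None:
        return "redirect status without a Location header"
    if not location.strip():
        return "redirect with an empty Location header"
    return None
```

Running a check like this over crawl output flags responses that send a crawler "into nothingness" before any JavaScript redirect has a chance to run.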

Yup, alright. One server response header that I think we need to mention is X-Robots-Tag. Let’s say you have a PDF document that you want noindexed. There’s a big difference between disallowing through robots.txt and noindexing a page or a document. If you want it to not show up in the search results, you need to use a noindex. Disallow only stops a spider from re-crawling the page, and it can still show up in the search results. If we’re dealing with a PDF document we want to drop from the index with a noindex, there’s no meta tag that you can add because it’s not HTML, but you can use a server response header.
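The header for this is `X-Robots-Tag: noindex`. How you set it depends on your server; as one illustration, here is a Python sketch of a WSGI middleware that adds it to every PDF response. The middleware name is hypothetical, and matching on the `.pdf` extension is an assumption you would adapt to your own URLs.

```python
def noindex_pdfs(app):
    """Wrap a WSGI app so that PDF responses carry X-Robots-Tag: noindex."""

    def wrapped(environ, start_response):
        def start_with_header(status, headers, exc_info=None):
            # Assumption for this sketch: PDFs are identified by extension.
            if environ.get("PATH_INFO", "").lower().endswith(".pdf"):
                headers = list(headers) + [("X-Robots-Tag", "noindex")]
            return start_response(status, headers, exc_info)

        return app(environ, start_with_header)

    return wrapped
```

The same result can usually be achieved with a few lines of web server configuration, so ask your developer which layer makes the most sense for your setup.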

Yeah, absolutely. That’s a good call. Canonical, very similar. You rarely ever see either of those in the headers, but they can be done and they can cause issues. You’re right, PDFs, that’s where people should use it, but I’ve very rarely seen that actually happen. In fact, Dan Sharp of Screaming Frog actually hijacked Google’s SEO best practice document, because Google didn’t have anything set either. They had a 302 redirect in place for this document and they didn’t have a canonical set to say this was the proper location. Just by having the duplicate content, Dan’s was treated as the source of truth, and other people had done it before him. It was a running joke for a couple of years because most of the time Google’s SEO best practice document wasn’t shown as google.com; it was shown as some other domain.

I cringe whenever I hear about that Google best practice document because there was ridiculous stuff in there advised about H1 tags and stuff. For years and years, it’s been true that H1s have not improved rankings, you could switch all your H1s to a font tag and it’ll have zero impact on your SEO.

Yeah. That’s hard to say for sure, but they probably take things into account like font size and that kind of thing these days. That would be an interesting test, but I think it would be hard to get any kind of significant results from that test.

I’ve done some testing; I’ve gotten rid of H1 tags. The difference here is when somebody says, “I added H1 tags and it had an impact on my rankings,” they added keyword-rich headlines and they happened to wrap them in an H1 container. Well, they made multiple changes to the pages by doing that: they increased keyword prominence, they added some keyword-rich copy high up in the rendered page with a large font size, and they happened to use an H1 container. If they hadn’t used an H1 container, they would still have gotten their rankings benefit, but they called it an H1 tag benefit when there were multiple things going on. I think it’s really important to do your SEO tests very strictly, very scientifically: use the scientific method, only one variable at once, have a control group, test your hypothesis, and see if it’s real.

Yeah. Sounds like you’ve done the test already. What’s your opinion on multiple H1 tags?

I don’t think multiple H1s really make a difference at all. It’s not like a worst practice or a best practice; it’s just code. It’s like adding a div tag or something; it doesn’t really do anything. It’s more about what’s rendered on the page: is that important content as it’s rendered, or is it unimportant? Because if it looks unimportant to the user, it looks unimportant to Google.

Yeah. I’d say that’s absolutely true. HTML5 also allows for multiple H1 tags, with their side classes. There’s a lot of misconception. I would still say have an H1 tag for sure, I wouldn’t worry too much about multiple H1s these days. There’s a lot of things that we do as SEOs that we do just because kind of, it might help, it might not, but we still do them, right?

This is a bug for me, because I’m very outcome focused and most SEOs are activity focused. If it’s supposedly a best practice, they just got to do it, it’s on the checklist. If the box is unchecked, they’re not done with their SEO project and I think that’s a mistake, I don’t think that’s practical, I don’t think that’s in the client’s best interest. If you’re outcome focused, you have a goal, you want to attain that goal, that outcome, and you go after the highest value activities first. If those first three activities get you to your outcome, you’re done, you come up with a new, bigger, more audacious goal to go after and a new set of activities for that. You don’t go through the entire list of every single potential optimization, people are so busy working through meta descriptions for their entire sites and they have a massive website. They’re not even thinking that you know what, meta descriptions are not an optimization ranking signal, they’re not a Google ranking signal and so they’re not going to improve their rankings for these meta description changes. That’s my pragmatic approach.

Yeah. I’m completely with you. We do a lot of things that are time wasters. Meta descriptions are a good one. Usually that’s in everyone’s technical SEO audit: I’ll rewrite your meta descriptions, blah, blah, blah. I always say something like, prioritize. Maybe your top 10 pages, maybe your top 50 pages can have those rewritten, but I’m still going to prioritize a lot of other stuff before I ever even recommend that.

And you’re also a master at using scripts and tools to automate and scale across those 50 million pages so you don’t have to do a lot of manual labor. What would be an example of something that you’ve written that is semi-automated or fully automated? Maybe you’re dealing with redirects or something?

There’s a lot of different things. Sometimes the stuff isn’t necessarily great; it’s just more fun to do. I would say the one I did for redirects is a good example of that. I used machine learning and an algorithm called [00:55:09] to match previous content with the closest relevant content on the website, basically ending up with an ordered list of page one, page two, page three and how relevant they are. Usually the answer’s in the top three, but for business reasons it may not be the actual closest match. A lot of times we have 20, 30 pages that are about the same thing, unfortunately, so we do a lot of content consolidation because of that. The system is cool, but if I’m really being practical about it, I would say do a site search with your keyword on the end and find which one of those pages you want to go to. One is going to take you a few hours to run, one is going to take you three seconds. What are you going to do?

Boy, is that a cool thing to have a machine learning algorithm figure out what the closest, most relevant page is to another page. Let’s say you’re going to remove that one page from the site and you’re trying to figure out where you want to redirect that to on a redirect map and you figured it out through machine learning, that’s pretty darn cool. You’re a ninja.
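The algorithm Patrick used is not named in the transcript, so the following Python sketch swaps in a different, well-known technique purely for illustration: TF-IDF weighting with cosine similarity. It ranks candidate pages by how closely their text matches the page being removed. All function names and the smoothing formula are assumptions of this sketch, not a description of his system.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """Build a sparse TF-IDF vector (term -> weight) for each document."""
    tokenized = [doc.lower().split() for doc in docs]
    doc_count = len(tokenized)
    # Number of documents each term appears in.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        vectors.append({
            term: count * (math.log((1 + doc_count) / (1 + doc_freq[term])) + 1)
            for term, count in term_freq.items()
        })
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse vectors."""
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_redirect_targets(removed_text, candidates):
    """Return candidate URLs ordered from most to least similar.

    `candidates` maps each URL to the text of that page.
    """
    urls = list(candidates)
    vectors = tfidf_vectors([removed_text] + [candidates[u] for u in urls])
    source = vectors[0]
    scored = [(cosine(source, vec), url) for url, vec in zip(urls, vectors[1:])]
    return [url for _, url in sorted(scored, key=lambda pair: pair[0], reverse=True)]
```

As Patrick notes, the top result is a suggestion rather than a decision: business reasons may still favor the second or third page on the list.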

Yeah. One system that was actually useful for that: we had a previous system for detecting duplicate content, and it used w-shingling, which is n-grams, which are like one-, two-, three-, four-, five-keyword combinations. They were tokenized, and we could compare the content to see how close it was to each other. But we did one with a [00:56:40] also, that looked at sentence level, paragraph level, and then the entire document level to do this comparison. We got about 5% better accuracy. It’s an incremental win, but it was a win, I would say.
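For readers who have not met w-shingling before, here is a minimal Python sketch of the idea: break each document into overlapping runs of `w` words, then compare the two sets of shingles with the Jaccard ratio. The whitespace tokenizer and the default of three words per shingle are simplifying assumptions, not details from the system described above.

```python
def shingles(text, w=3):
    """Return the set of w-word shingles (word n-grams) in `text`."""
    tokens = text.lower().split()
    if len(tokens) < w:
        # Short texts become a single shingle (or none if empty).
        return {tuple(tokens)} if tokens else set()
    return {tuple(tokens[i:i + w]) for i in range(len(tokens) - w + 1)}


def resemblance(text_a, text_b, w=3):
    """Jaccard similarity of the two shingle sets, between 0.0 and 1.0."""
    a, b = shingles(text_a, w), shingles(text_b, w)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)
```

Scores near 1.0 point to near-duplicate pages, which makes them candidates for the content consolidation mentioned earlier.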

That’s pretty cool. If your eyes are starting to glaze over as a listener because we’re geeking out a bit too much, we’re going to wrap up now so you can start to breathe again. Clearly Patrick, you know your stuff, you’ve been doing SEO for a while and doing some really sophisticated SEO practices. Are you working solely for IBM or do you take on side gigs or can somebody work with you who’s listening to all your ninja advice?

I usually am pretty picky about side projects, my time is fairly limited, but if you have a really interesting problem, really difficult cutting edge legacy crap or just something that you can’t quite figure out, sure.

Alright, awesome. How would somebody reach out to you? Should they go to your website? Where do you want to direct them?

Yeah. I have a website, stoxseo.com, which is being rewritten, don’t judge me. It’s from 2010 right now, hand coded. I’m on Twitter @patrickstox.

Awesome! Thank you, Patrick. This was a lot of fun. I love geeking out, and you’re one of the geekiest and most skilled SEOs. It was a real pleasure. Listeners, now take some action. If this is something that’s over your head but you know from this episode that it’s important stuff, HTTP/2, HTTPS, doing some of the page speed optimization stuff we talked about, etc., ask your developers, ask your systems administrators to start making some changes to your website. We’ll catch you on the next episode of Marketing Speak. This is your host, Stephan Spencer, signing off.

Your Checklist of Actions to Take

- Migrate my HTTP site to HTTPS or HTTP/2 to make it faster and more secure.

- Research and be more aware of technical SEO terms. This will help me assign necessary tasks to my developer during site creation.

- Hire a web developer who at least has a beginner SEO background and is capable of doing some primary optimization on my website.

- Never build a website without integrating SEO. It’s like building a house and forgetting to install the wires for electricity, then having to tear down the walls again to put them in.

- Look for ways to shortcut my SEO processes without jeopardizing the quality of the results.

- Use hreflang tags on my site if it has multiple languages. This lets Google know which language I am using on specific pages.

- Secure my site with HTTPS to avoid content injection and to prevent companies from posting ads without my consent.

- Set up Google Analytics, sign up for Google Developer Tools, and change my site to HTTPS. This will help me be more informed on my website activity.

- Make everything on my site load faster by migrating to HTTP/2.

- Reach my target audience and improve my online visibility with the help of Ryte.

About the Host

STEPHAN SPENCER

Since coming into his own power and having a life-changing spiritual awakening, Stephan is on a mission. He is devoted to curiosity, reason, wonder, and most importantly, a connection with God and the unseen world. He has one agenda: revealing light in everything he does. A self-proclaimed geek who went on to pioneer the world of SEO and make a name for himself in the top echelons of marketing circles, Stephan’s journey has taken him from one of career ambition to soul searching and spiritual awakening.

Stephan has created and sold businesses, gone on spiritual quests, and explored the world with Tony Robbins as a part of Tony’s “Platinum Partnership.” He went through a radical personal transformation – from an introverted outlier to a leader in business and personal development.

About the Guest

PATRICK STOX

Patrick Stox is a Technical SEO for IBM. He writes for many search blogs and speaks at many of the conferences. He’s an organizer of the Raleigh SEO Meetup (the most successful in the US) and was founder of the Technical SEO Slack group.
